52896WA Advanced Diploma of Civil and Structural Engineering (Materials Testing)

Investigation of the properties of construction materials, the principles which…Read moreGraduate Diploma of Engineering (Safety, Risk and Reliability)

The Graduate Diploma of Engineering (Safety, Risk and Reliability) program…Read moreProfessional Certificate of Competency in Fundamentals of Electric Vehicles

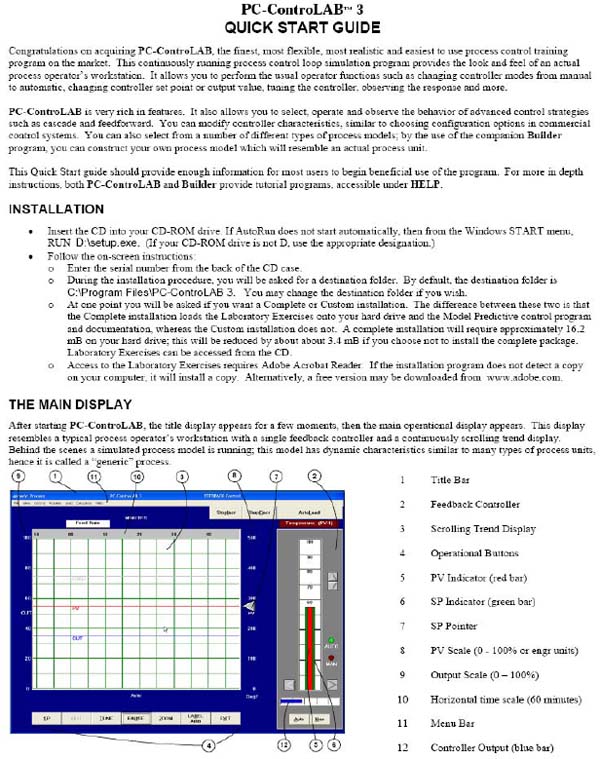

Learn the fundamentals of building an electric vehicle, the components…Read moreProfessional Certificate of Competency in 5G Technology and Services Overview

Learn 5G network applications and uses, network overview and new…Read moreProfessional Certificate of Competency in Clean Fuel Technology - Ultra Low Sulphur Fuels

Learn the fundamentals of Clean Fuel Technology - Ultra Low…Read moreProfessional Certificate of Competency in Battery Energy Storage and Applications

Through a scientific and practical approach, the Battery Energy Storage…Read more52910WA Graduate Certificate in Hydrogen Engineering and Management

Hydrogen has become a significant player in energy production and…Read moreProfessional Certificate of Competency in Hydrogen Powered Vehicles

This course is designed for engineers and professionals who are…Read more

This book will give you a strong understanding of the key underpinning concepts from a very simple understandable point of view.

Revision 5

Website: www.idc-online.com

E-mail: idc@idc-online.com

IDC Technologies Pty Ltd

PO Box 1093, West Perth, Western Australia 6872

Offices in Australia, New Zealand, Singapore, United Kingdom, Ireland, Malaysia, Poland, United States of America, Canada, South Africa and India

Copyright © IDC Technologies 2011. All rights reserved.

First published 2011

All rights to this publication, associated software and workshop are reserved. No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means electronic, mechanical, photocopying, recording or otherwise without the prior written permission of the publisher. All enquiries should be made to the publisher at the address above.

Disclaimer

Whilst all reasonable care has been taken to ensure that the descriptions, opinions, programs, listings, software and diagrams are accurate and workable, IDC Technologies do not accept any legal responsibility or liability to any person, organization or other entity for any direct loss, consequential loss or damage, however caused, that may be suffered as a result of the use of this publication or the associated workshop and software.

In case of any uncertainty, we recommend that you contact IDC Technologies for clarification or assistance.

Trademarks

All logos and trademarks belong to, and are copyrighted to, their companies respectively.

Acknowledgements

IDC Technologies expresses its sincere thanks to all those engineers and technicians on our training workshops who freely made available their expertise in preparing this manual.

Preface

Have you ever wondered about getting a thorough introduction to the fundamentals of instrumentation, industrial automation and control; thus allowing you to work and perform simple tasks in this key area? In reading this manual and attending the associated course, this could be the opportunity to walk out with a great grounding in the basics of this exciting field which is rapidly changing the way all plants operate. The constant drive to cut costs means that as an operator you will be increasingly having to have more skills and know-how in the plant instrumentation and process control area.

This manual and associated course represents a tremendous opportunity to gain expertise in all the key areas of the fast growing area of industrial automation in two days. Presented by an expert in the area but who is passionate with getting the key chunks of know-how and expertise across to you in a simple understandable manner which you can immediately apply to your job. This is most definitely not a boring lecture style presentation but an intensive learning experience where you will walk away with real skills as a result of the hands-on practical exercises, calculations, case studies and group sessions to ensure an understanding of the concepts and ideas discussed. You will undertake practical sessions at approximately 20 to 30 minute intervals to maximise the absorption rate.

The topics covered commence with an introduction to instrumentation and measurement ranging from pressure, level, temperature and flow devices followed by a review of process control including the all important topic of PID loop tuning. Furthermore, PLC and SCADA systems are covered and the important topics of industrial data communication networks are also examined – again from a very simple understandable point of view. Finally, the manual and course are rounded off with a hands-on review of reading and interpreting simple plant documentation such as P&ID’s so that you can see and understand the operation of the plant through the documentation.

You will gain a strong understanding of the key concepts in instrumentation, process control, SCADA and PLCs.

WHAT YOU WILL LEARN:

- ♦ The fundamentals of instrumentation and process control

- ♦ The basics of PLC’s and SCADA systems

- ♦ An ability to troubleshoot simple problems with instruments, PLC’s and SCADA systems

- ♦ An ability to understand simple plant documentation such as P&ID’s

- ♦ How to work effectively with your instrumentation plant colleagues

PREQUISITES:

The manual and associated course is presented in easy to understand practical language. All you need to benefit from this is a very basic understanding of mathematics and some electrical theory. Contact us for comprehensive pre-course reading and preparation if you are unsure about your level of understanding.

WHO SHOULD READ THIS MANUAL / ATTEND THE COURSE?

Anybody with an interest in gaining know-how in the full range of instrumentation, process control, PLC’s, SCADA and P&ID documentation, this will range from operators, trades personnel, procurement staff, sales staff, technicians and engineers from other backgrounds/disciplines, such as mechanical, electrical and civil. Even the plant secretary who is keen to have a good understanding of the key concepts would benefit. Managers who are keen to understand the key workings and the future of their plants would also benefit from this.

CONTENT SUMMARY

INSTRUMENTATION AND PROCESS CONTROL

INTRODUCTION

- ♦ Overview of instrumentation and control

- ♦ Key building blocks of PLC’s and SCADA systems

- ♦ Outline of the workshop

INTRODUCTION TO PROCESS MEASUREMENT

- ♦ Basic measurement concepts

- ♦ Definition of terminology

- ♦ Measuring instruments and control valves as part of the overall control system

Practical session

PRESSURE MEASUREMENT

- ♦ Principle of pressure measurement

- ♦ Pressure transducers and elements

Practical session

LEVEL MEASUREMENT

- ♦ Principles of level measurement

- ♦ Simple sight glasses

- ♦ Hydrostatic pressure

- ♦ Ultrasonic measurement

- ♦ Electrical measurement

- ♦ Density measurement

Practical session

TEMPERATURE MEASUREMENT

- ♦ Principles of temperature measurement

- ♦ Thermocouples

- ♦ Resistance Temperature Detectors (RTD’s)

- ♦ Thermistors

Practical session

FLOW MEASUREMENT

- ♦ Principles of flow measurement

- ♦ Open channel flow measurement

- ♦ Oscillatory flow measurement

- ♦ Magnetic flow measurement

- ♦ Positive displacement

- ♦ Ultrasonic flow measurement

- ♦ Mass flow measurement

Practical session

FUNDAMENTALS OF PROCESS LOOP TUNING

- ♦ Processes, controllers and tuning

- ♦ PID controllers

- ♦ Gain, dead time and time constants

- ♦ Process noise

- ♦ General purpose closed loop tuning method

Practical session

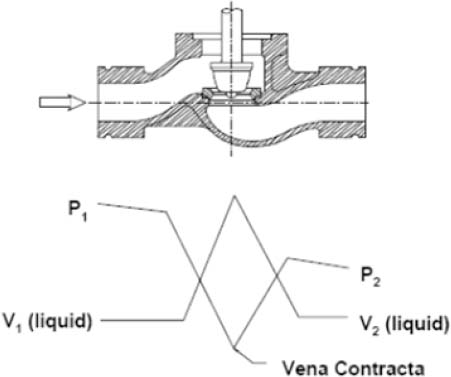

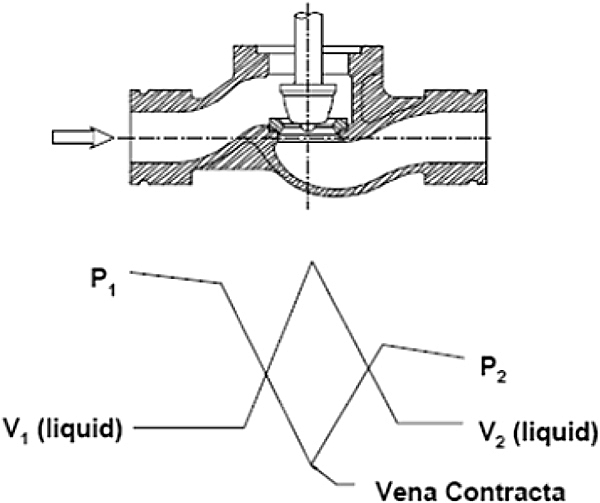

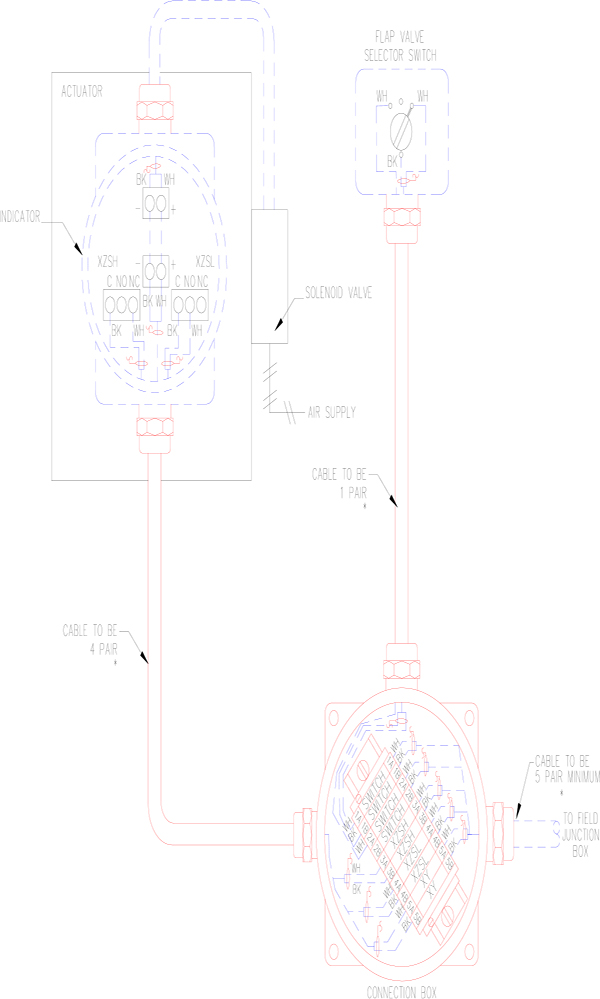

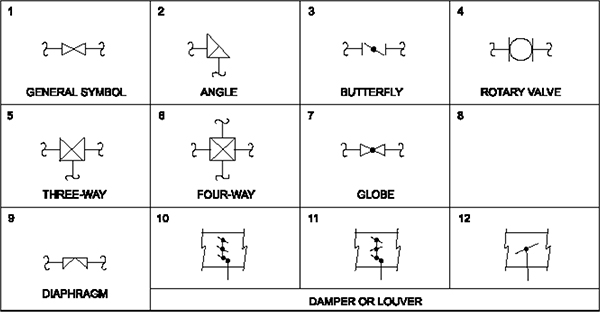

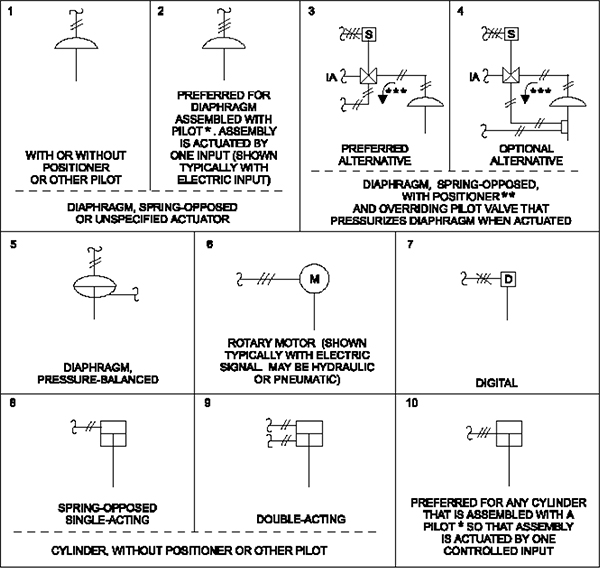

INTRODUCTION TO CONTROL VALVES

- ♦ Introduction

- ♦ Definition of a control valve

- ♦ Cavitation

- ♦ Flashing

Practical session

DIFFERENT TYPES OF CONTROL VALVES

- ♦ Globe valves

- ♦ Butterfly

- ♦ Eccentric disk

- ♦ Ball

- ♦ Rotary plug

- ♦ Diaphragm and pinch

Practical session

PLC’S, SCADA, AND COMMUNICATIONS

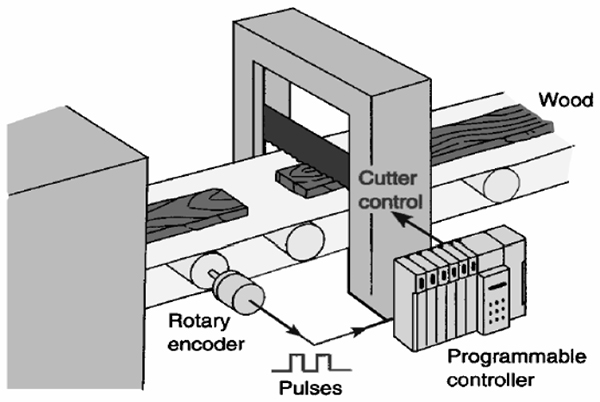

FUNDAMENTALS OF PLCS

- ♦ Introduction to PLC’s

- ♦ Alternative control systems – where do PLC’s fit in?

- ♦ Why PLC’s have become so widely accepted

Practical session

FUNDAMENTALS OF PLC HARDWARE

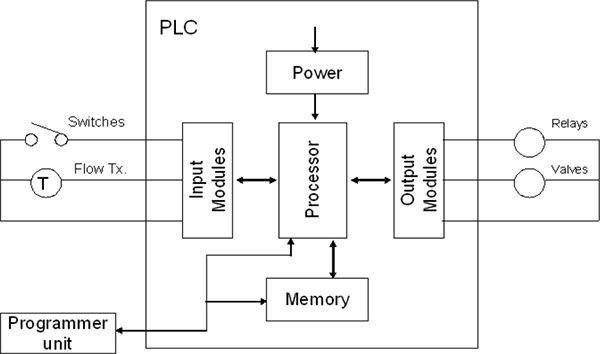

- ♦ Block diagram of typical PLC

- ♦ PLC processor module – memory organization

- ♦ Input / output section – module types

- ♦ Power supplies

Practical session

FUNDAMENTALS OF PLC SOFTWARE

- ♦ Methods of representing Logic

- ♦ Ladder Logic basics

- ♦ The basic rules for programming

- ♦ Simple PLC programs

Practical session

INTRODUCTION TO SCADA SYSTEMS

- ♦ Fundamentals

- ♦ Comparison of SCADA, DCS, PLC and smart instruments

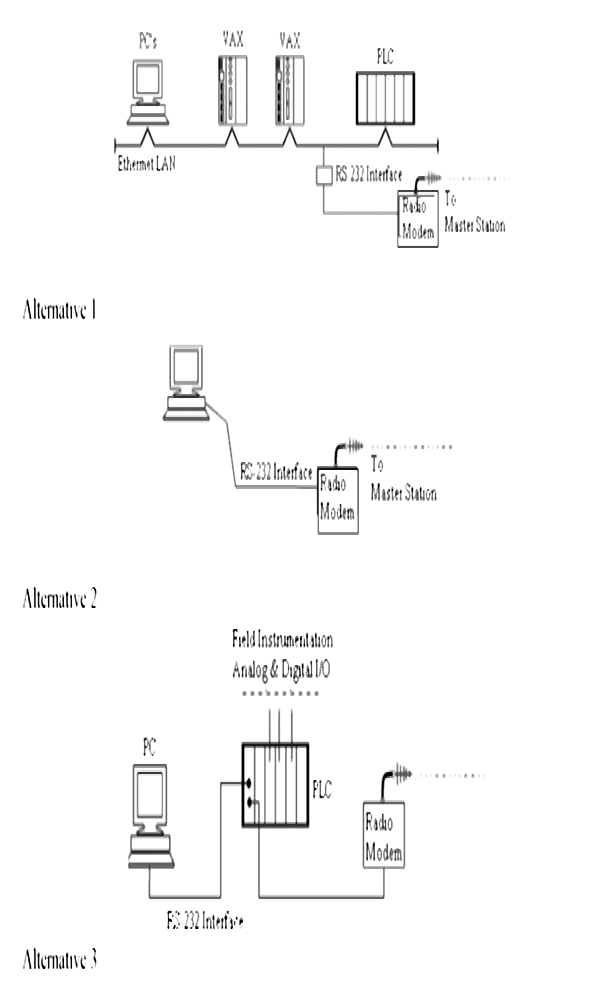

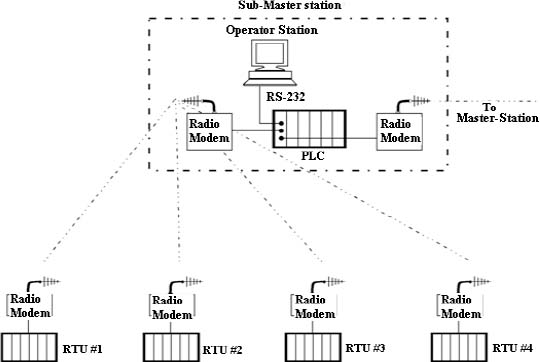

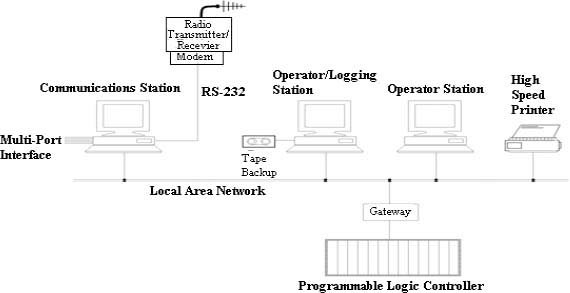

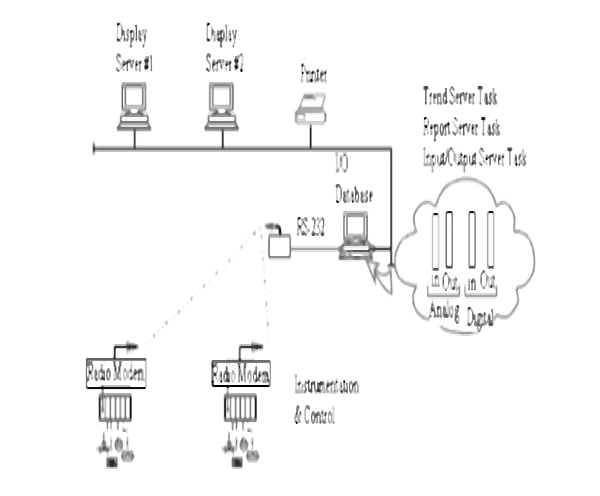

- ♦ Typical SCADA installations

- ♦ Definition of terms

Practical session

SCADA SYSTEMS HARDWARE

- ♦ Remote Terminal Unit (RTU) structure

- ♦ Analog and digital input/output modules

- ♦ Master site structure

Practical session

SCADA SYSTEMS SOFTWARE

- ♦ Fundamentals

- ♦ Components of a SCADA system

- ♦ Software – design of SCADA packages

- ♦ Configuration of SCADA systems

- ♦ Building the user interface

Practical session

BASICS OF DATA COMMUNICATIONS BETWEEN PLC AND SCADA SYSTEMS

- ♦ Twisted pair cables

- ♦ Fibre optic cables

- ♦ Public network provided services

- ♦ Industrial Ethernet

- ♦ TCP/IP

- ♦ Fieldbus

- ♦ Modbus

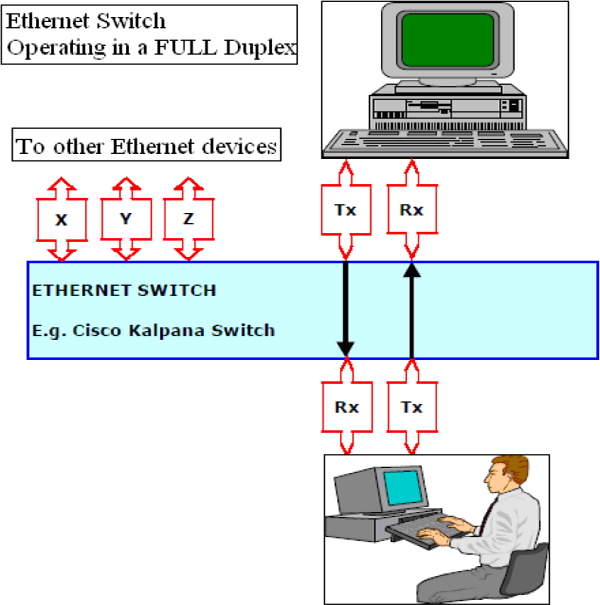

- ♦ LAN connectivity: bridges, routers and switches

- ♦ SCADA network security

Practical session

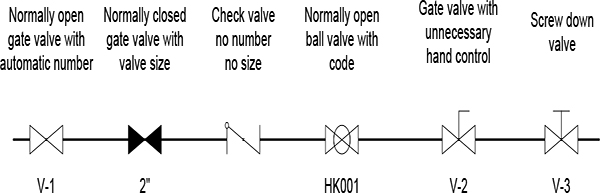

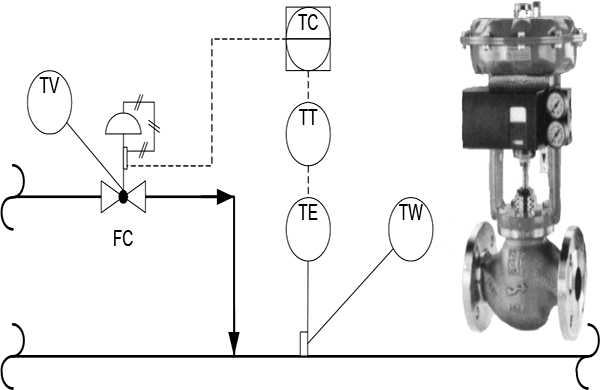

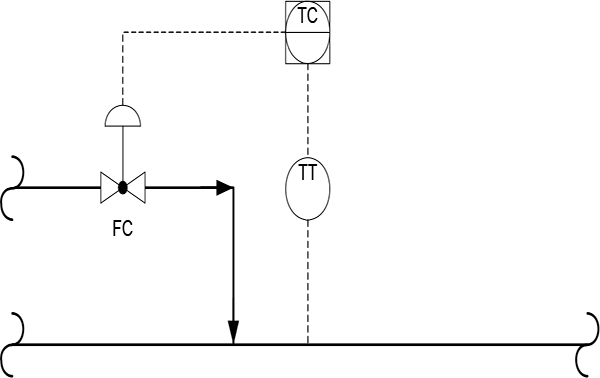

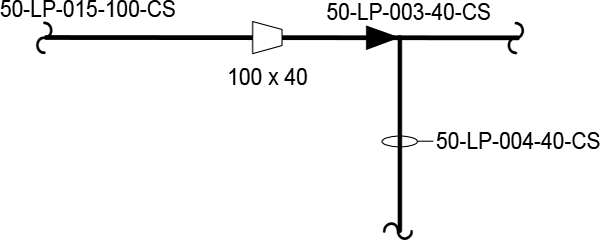

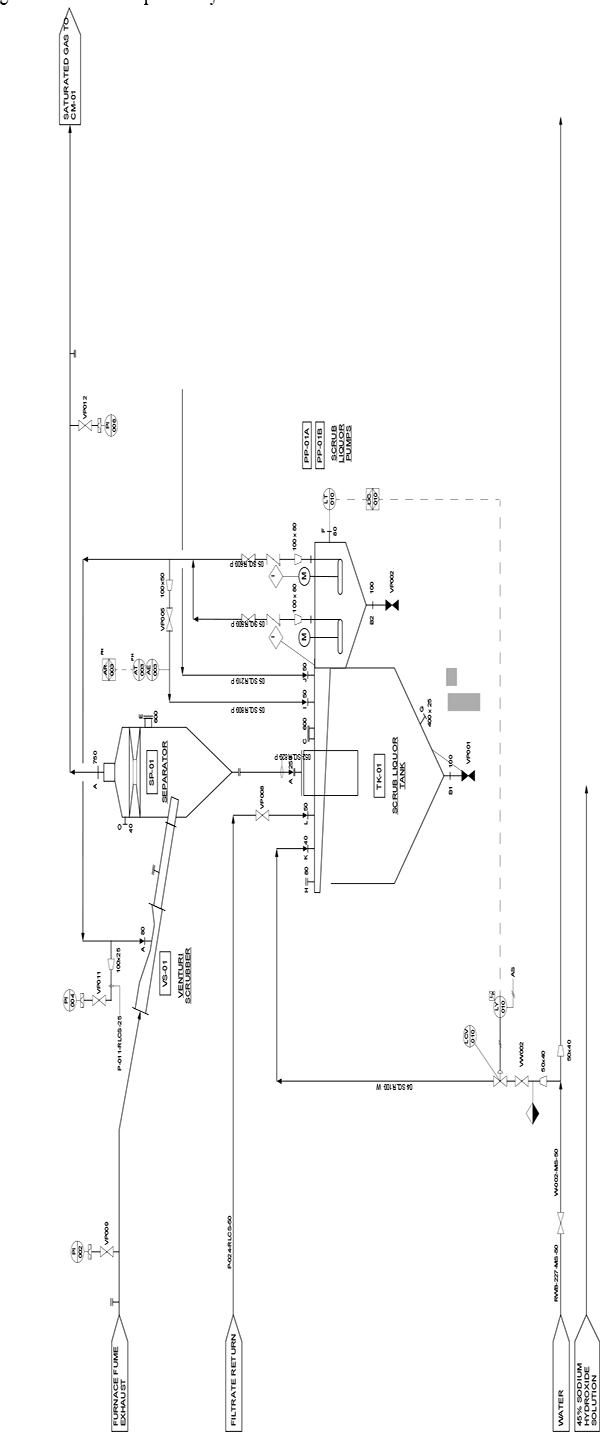

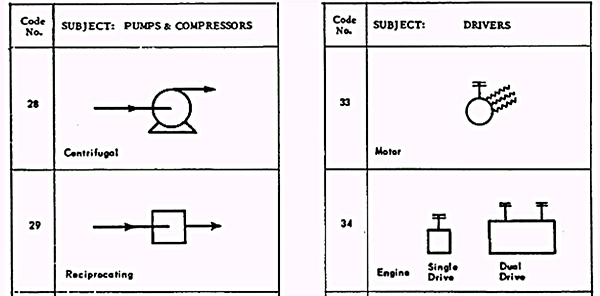

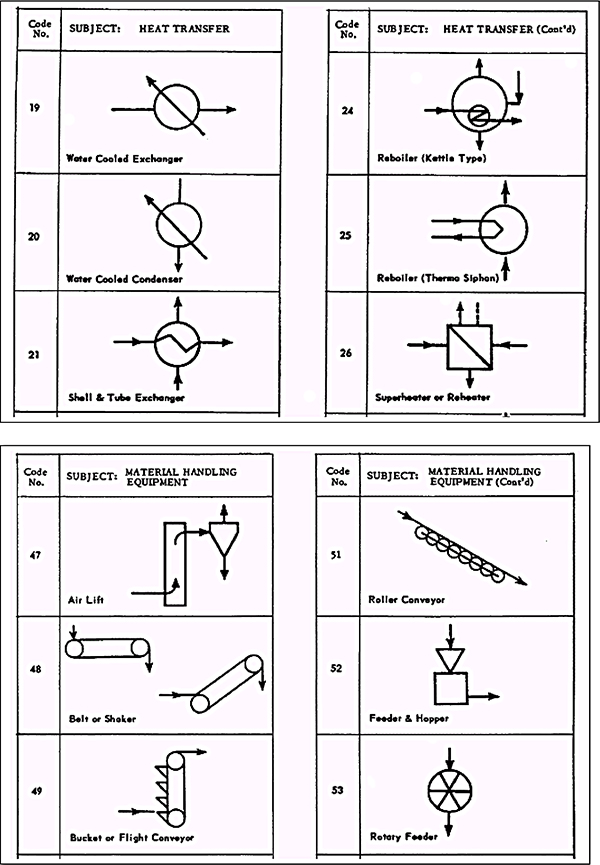

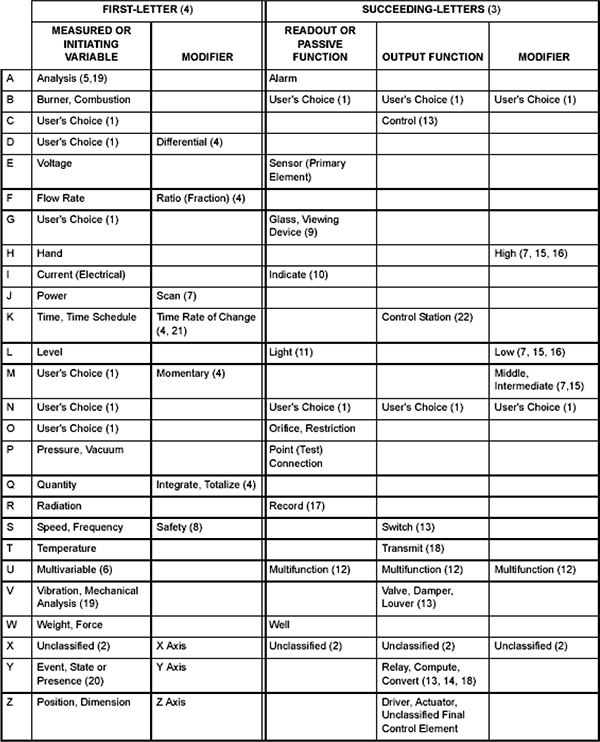

DRAWING TYPES AND STANDARDS

- ♦ Understanding diagram layouts and formats

- ♦ Cross references

- ♦ P&ID’s fundamentals

Practical session

CONCLUSION

- ♦ Summing up and revision of key concepts

- ♦ The future

Contents

1 Introduction 1

1.1 Overview of Instrumentation and control 1

1.2 Key building blocks of PLCs and SCADA systems 2

1.3 Outline of the course 3

2 Introduction to Process Measurement 5

2.1 Basic measurements and control concepts 5

2.2 Definition of terminology 9

2.5 Measuring instruments and control valves as part of the overall control system 11

3 Pressure Measurement 13

3.1 Principles of pressure measurement 13

3.2 Pressure transducers and elements 14

4 Level Measurement 27

4.1 Principles of level measurement 27

4.2 Simple sight glasses 28

4.3 Hydro pressure 29

4.4 Ultrasonic measurement 36

4.5 Electrical measurement 39

4.6 Density measurement 44

5 Temperature Measurement 47

5.1 Principles of temperature measurement 47

5.2 Thermocouples 48

5.3 Resistance Temperature Detectors (RTDs) 49

5.4 Thermistors 52

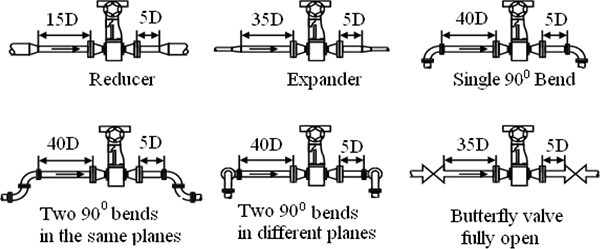

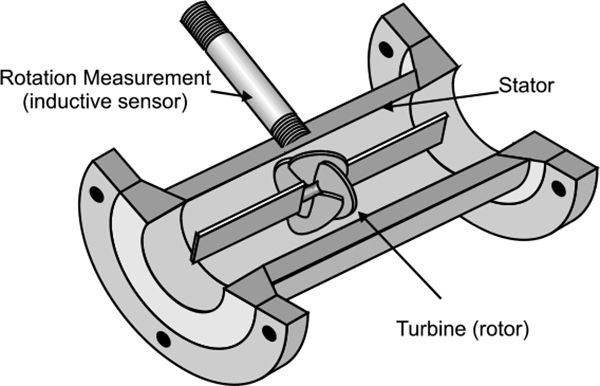

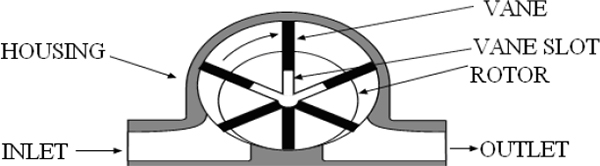

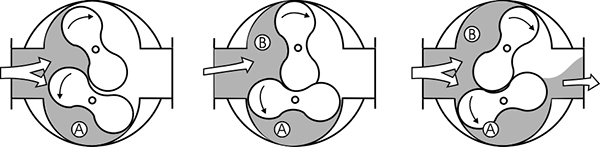

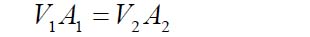

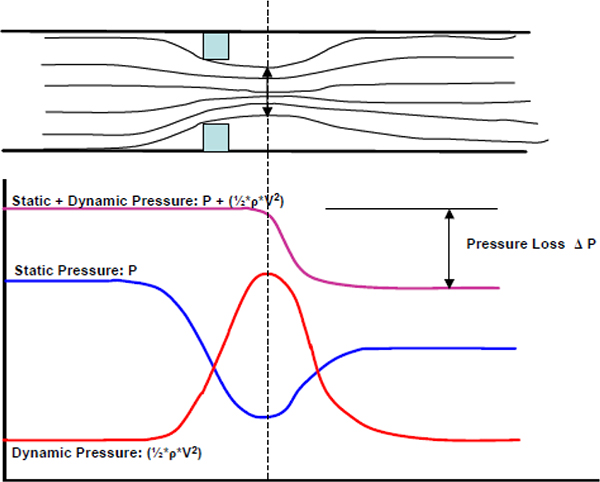

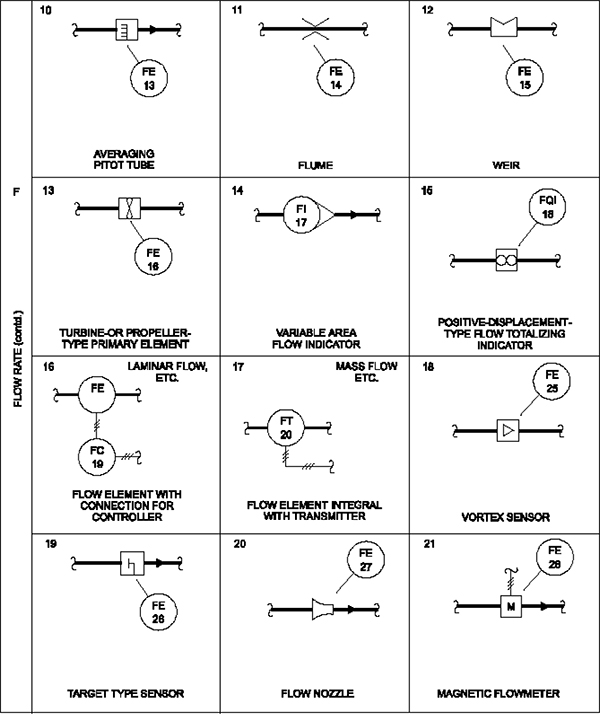

6 Flow Measurement 53

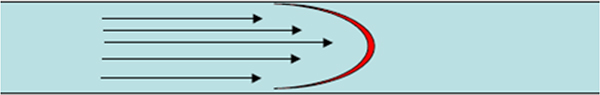

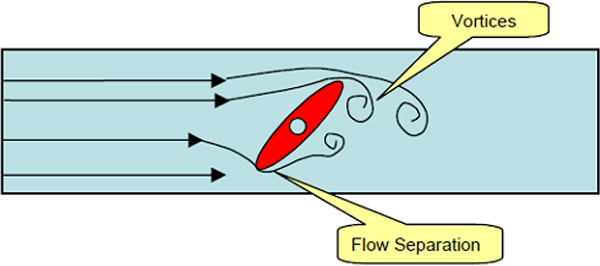

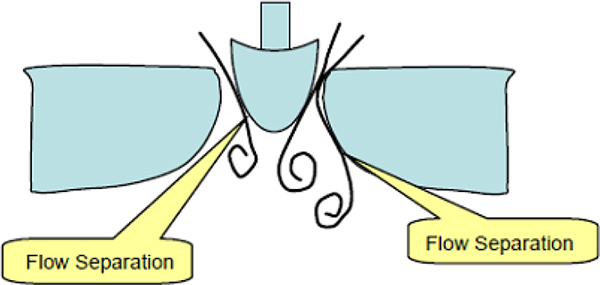

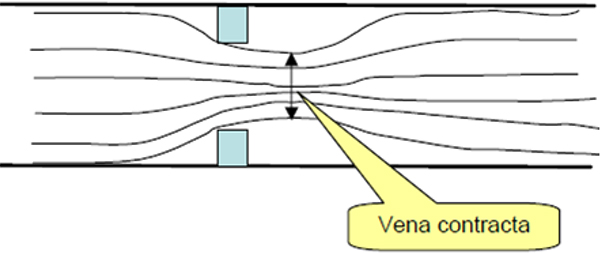

6.1 Principles of flow measurement 53

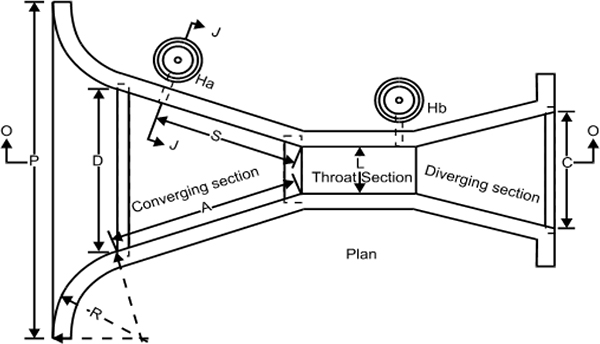

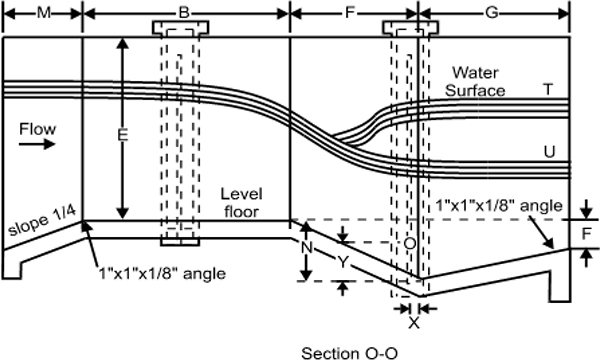

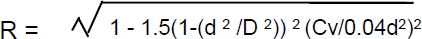

6.2 Open channel flow measurement 57

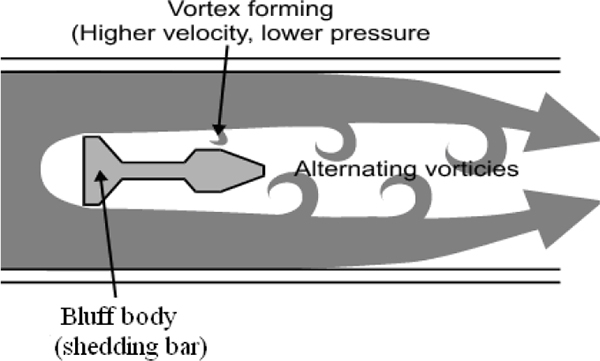

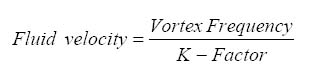

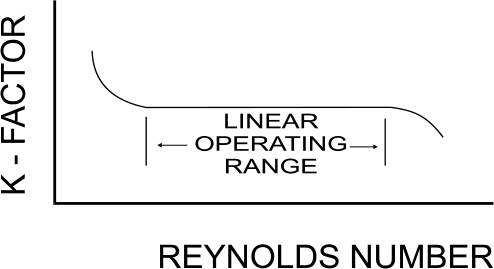

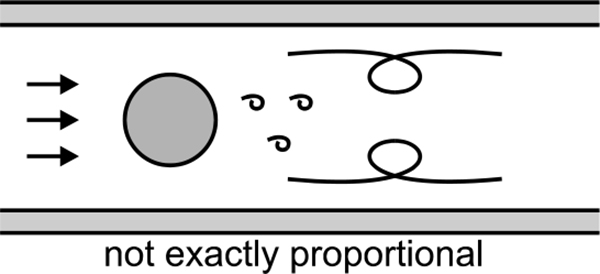

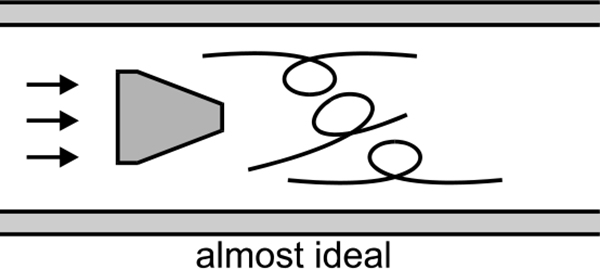

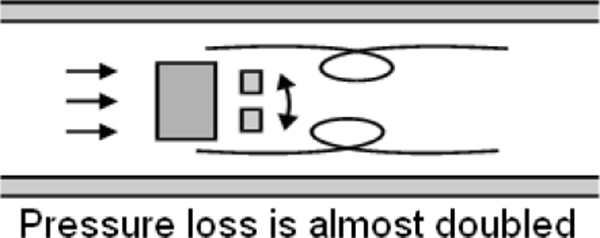

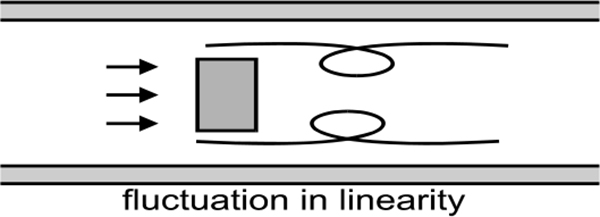

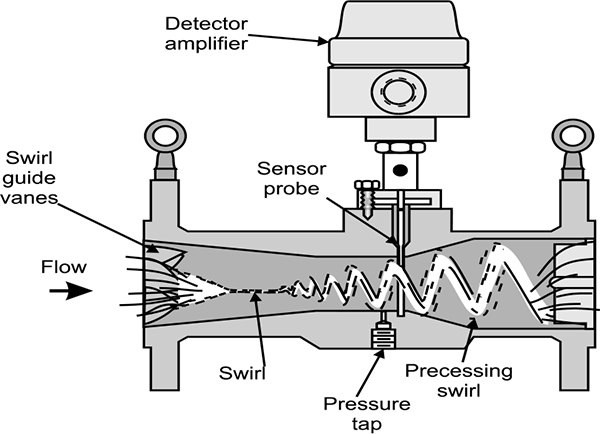

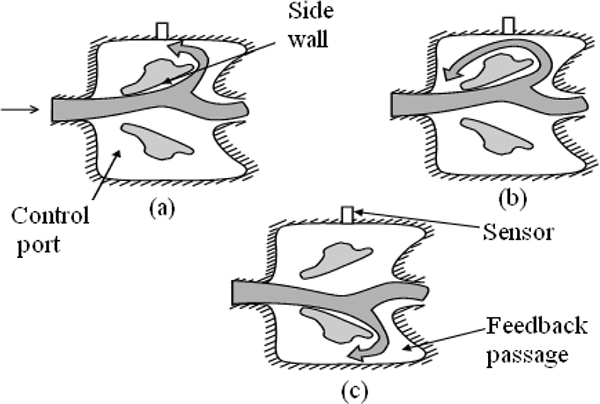

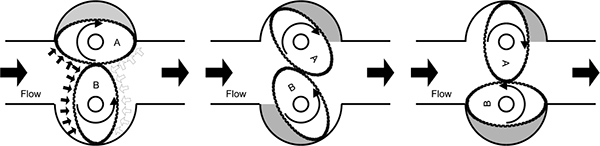

6.3 Oscillatory flow measurement 60

6.4 Magnetic flow meters 67

6.5 Positive displacement 68

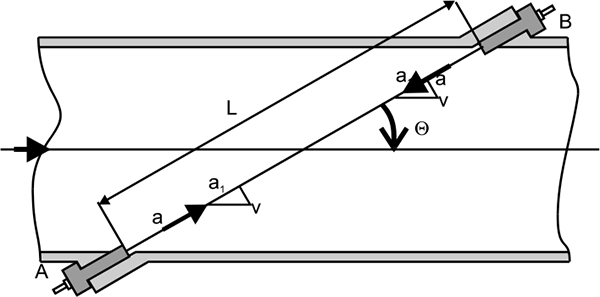

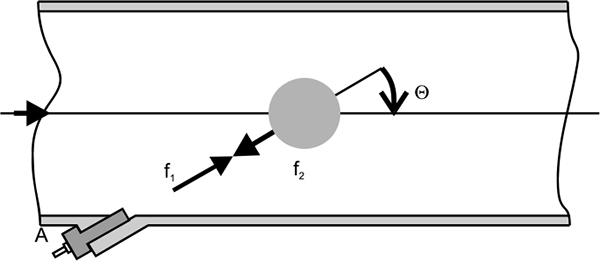

6.6 Ultrasonic flow measurement 70

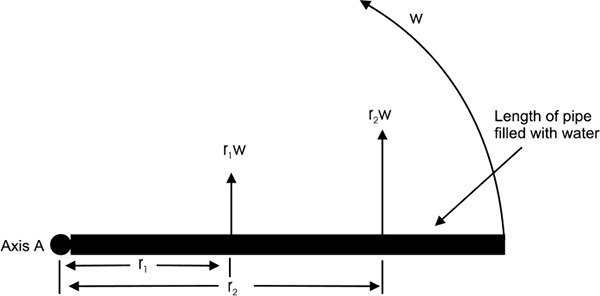

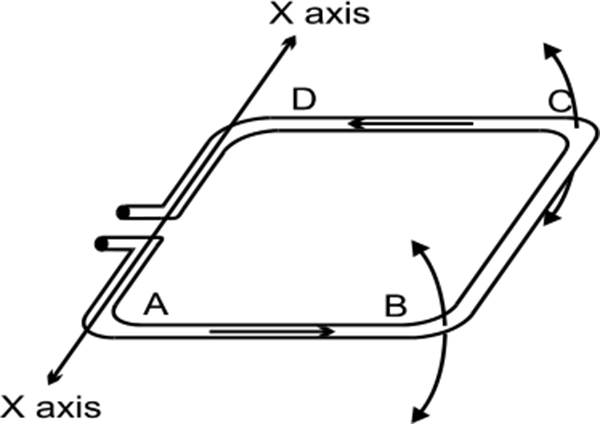

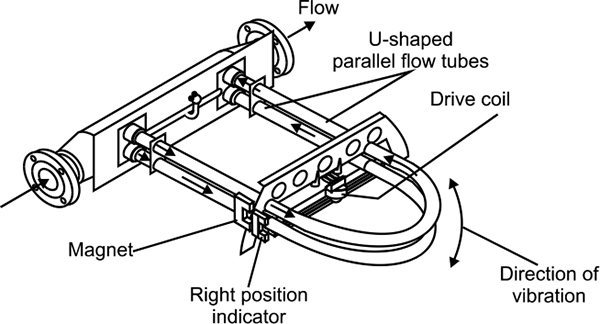

6.7 Mass flow meters 72

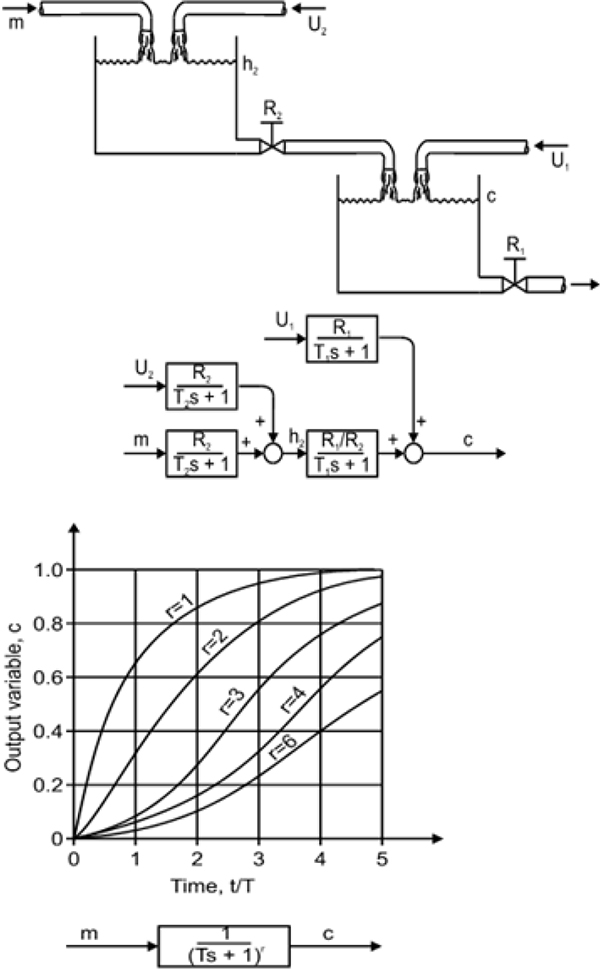

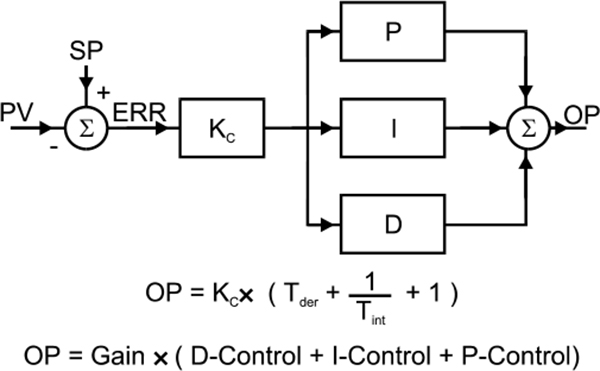

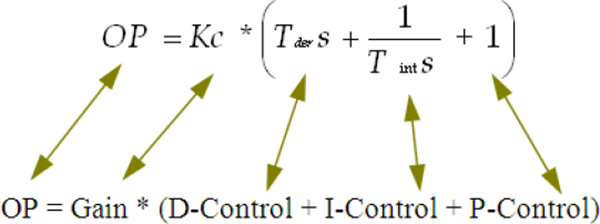

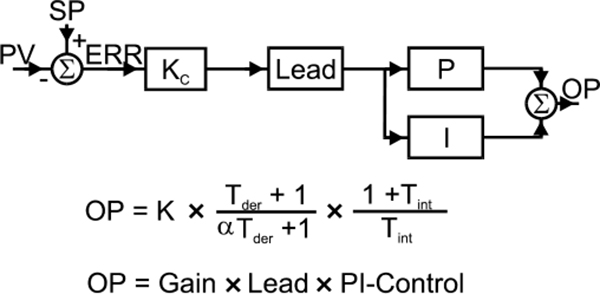

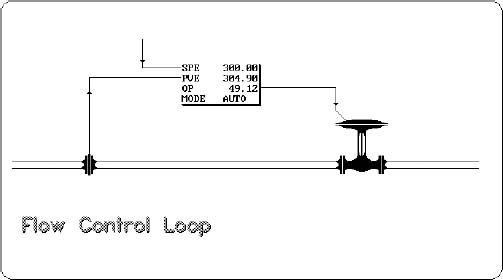

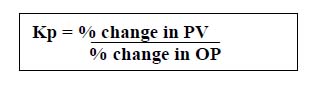

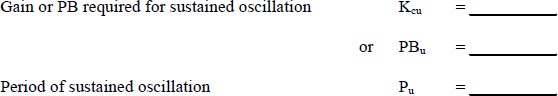

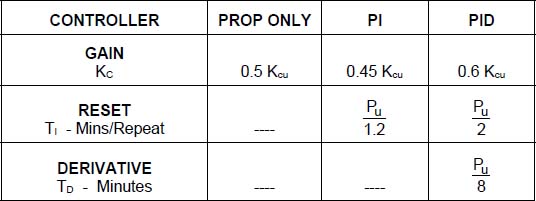

7 Fundamentals of Process Loop Tuning 75

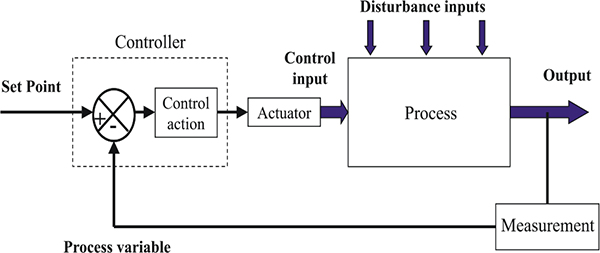

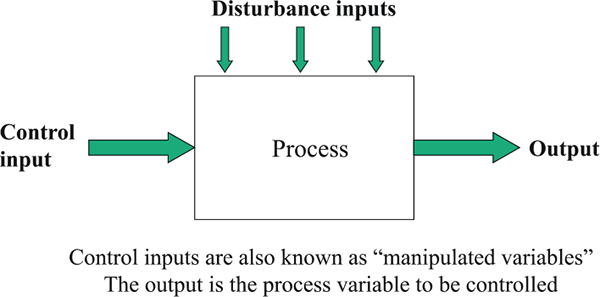

7.1 Processes, Controllers and Tuning 75

7.2 Proportional-Integral-Derivative (PID) Controllers 84

7.3 Gain, Dead Time and Time Constants 86

7.4 Process Noise 88

7.5 General Purpose Closed Loop Tuning Method 88

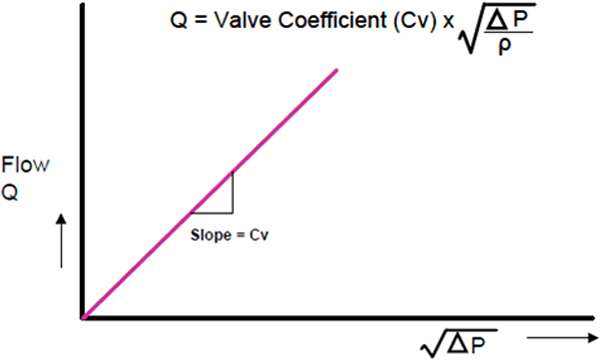

8 Introduction to Control Valves 91

8.1 Introduction 91

8.2 Definition of a Control Valve 92

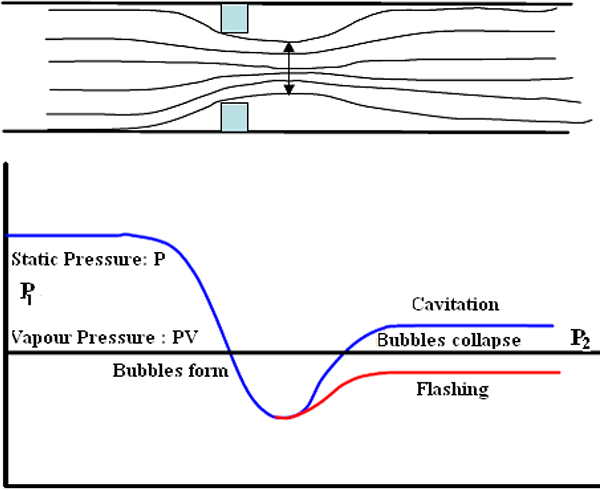

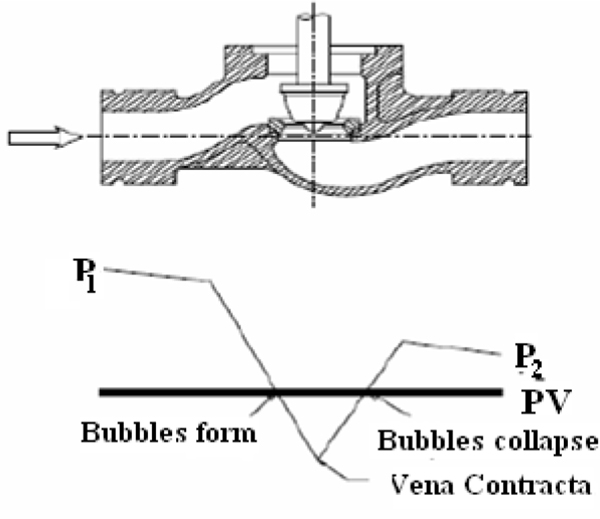

8.3 Cavitation 99

8.4 Flashing 100

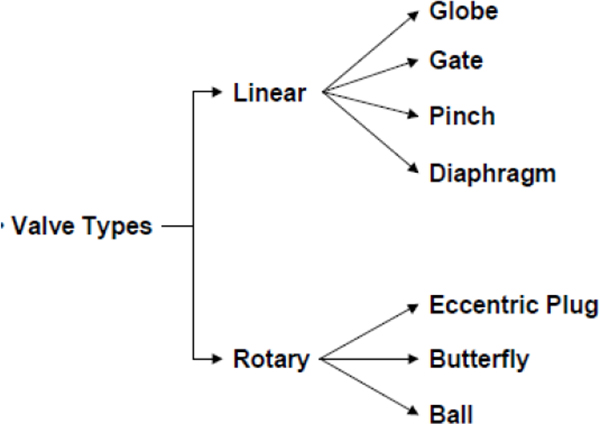

9 Different Types of Control Valves 101

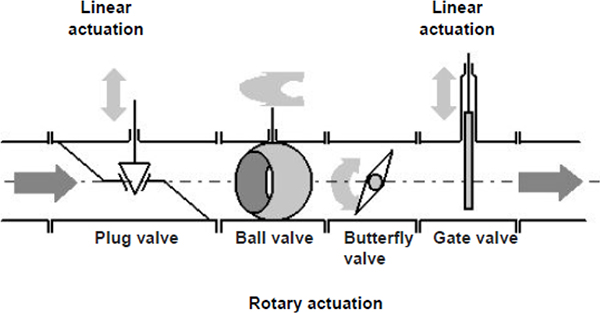

9.1 Introduction 101

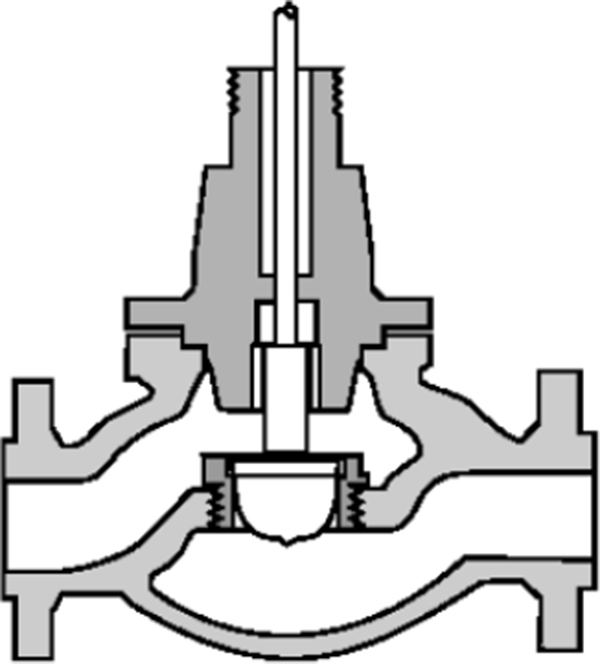

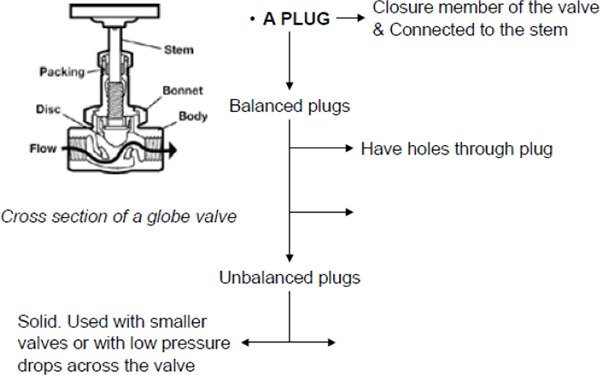

9.2 Globe Valves 102

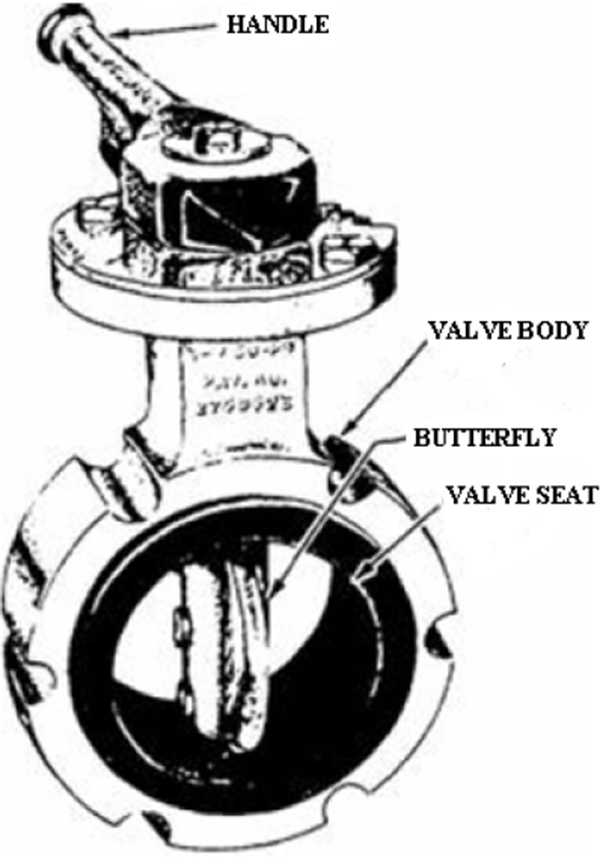

9.3 Butterfly Valves 105

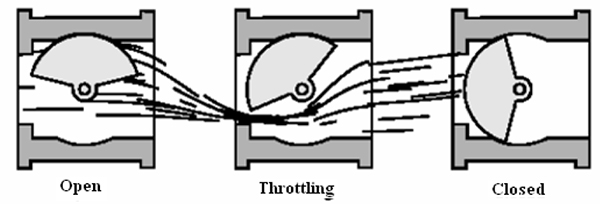

9.4 Eccentric Disk or High Performance Butterfly Valves 106

9.5 Ball Valves 107

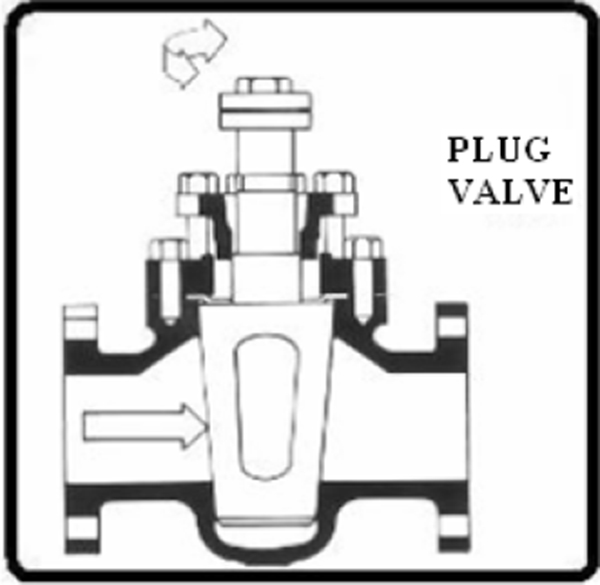

9.6 Rotary Plug Valves 110

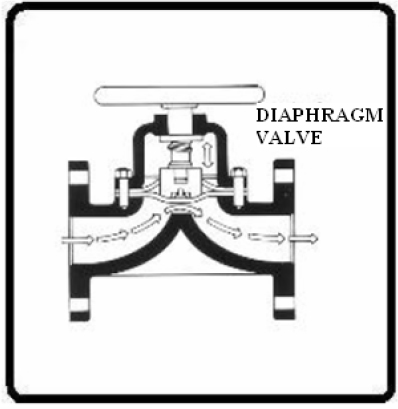

9.7 Diaphragm Valves 110

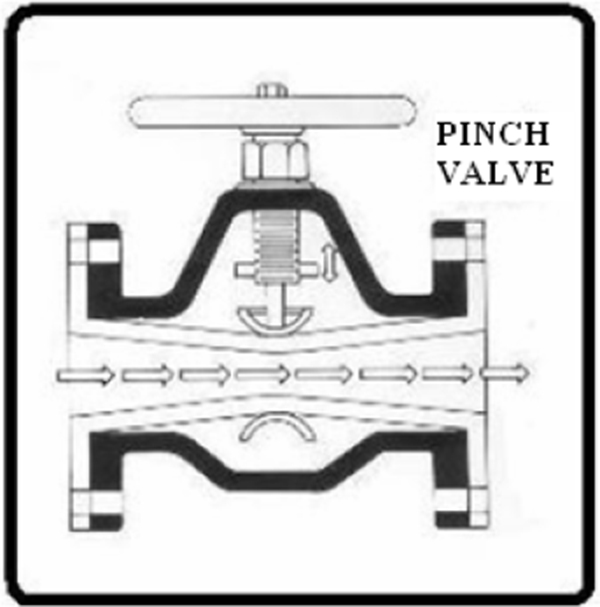

9.8 Pinch Valves 111

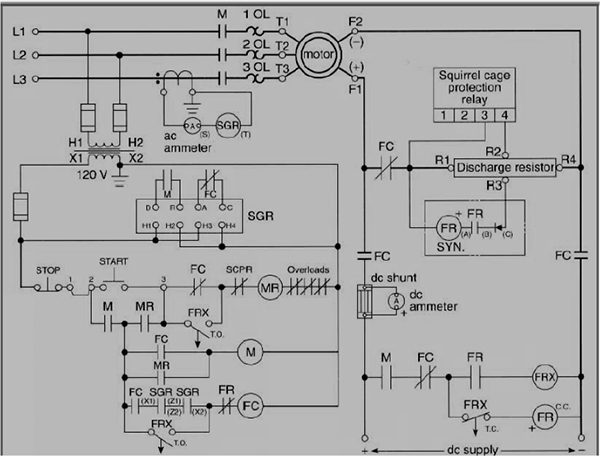

10 Fundamentals of PLCs 113

10.1 Introduction to the PLC 113

10.2 Alternative Control Systems – where do PLCs fit in? 114

10.3 Why PLCs have become so widely accepted 114

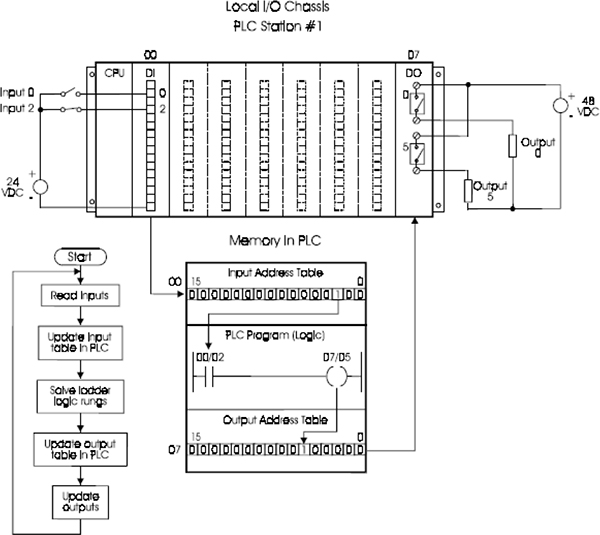

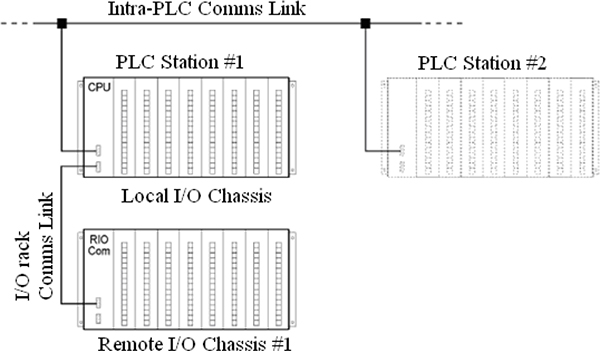

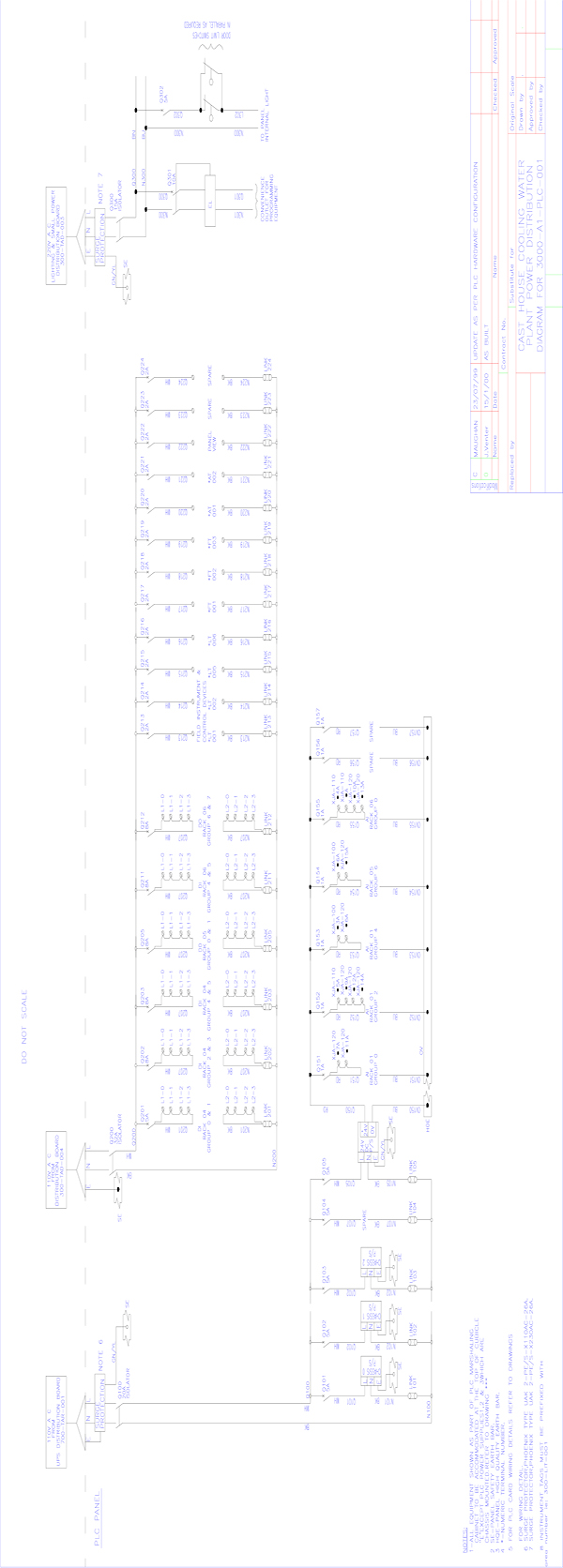

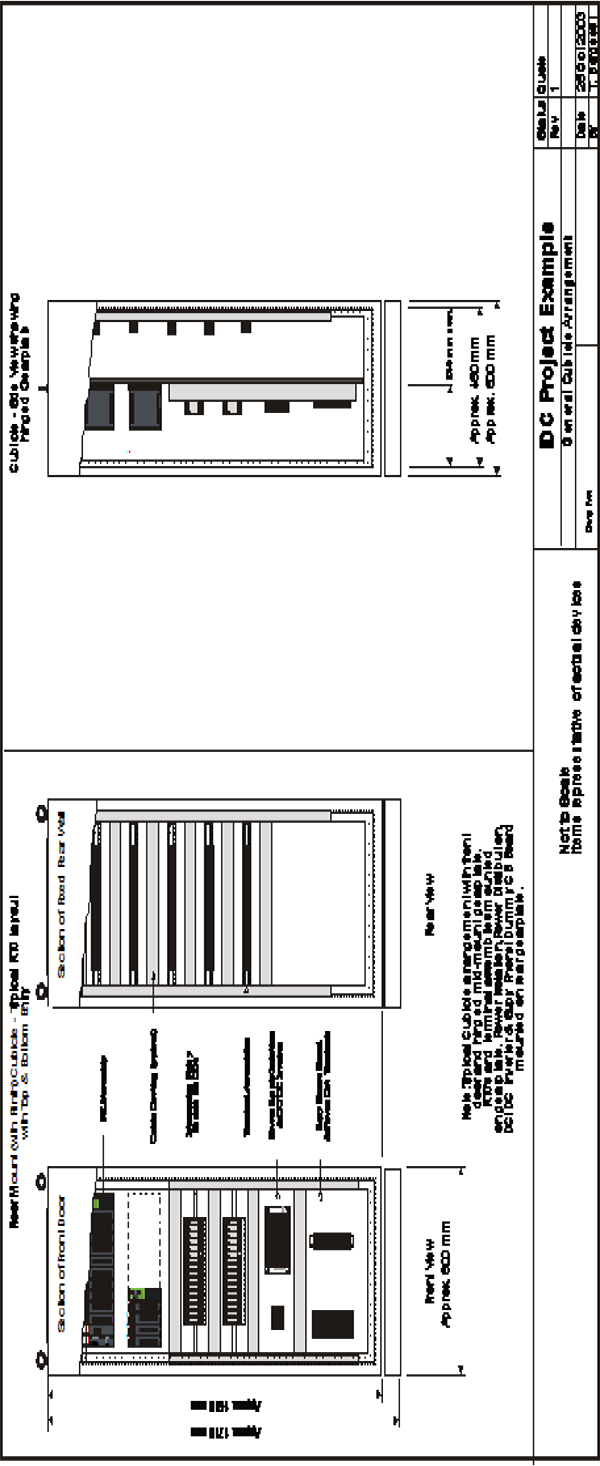

11 Fundamentals of PLC Hardware 119

11.1 Block diagram of typical PLC 119

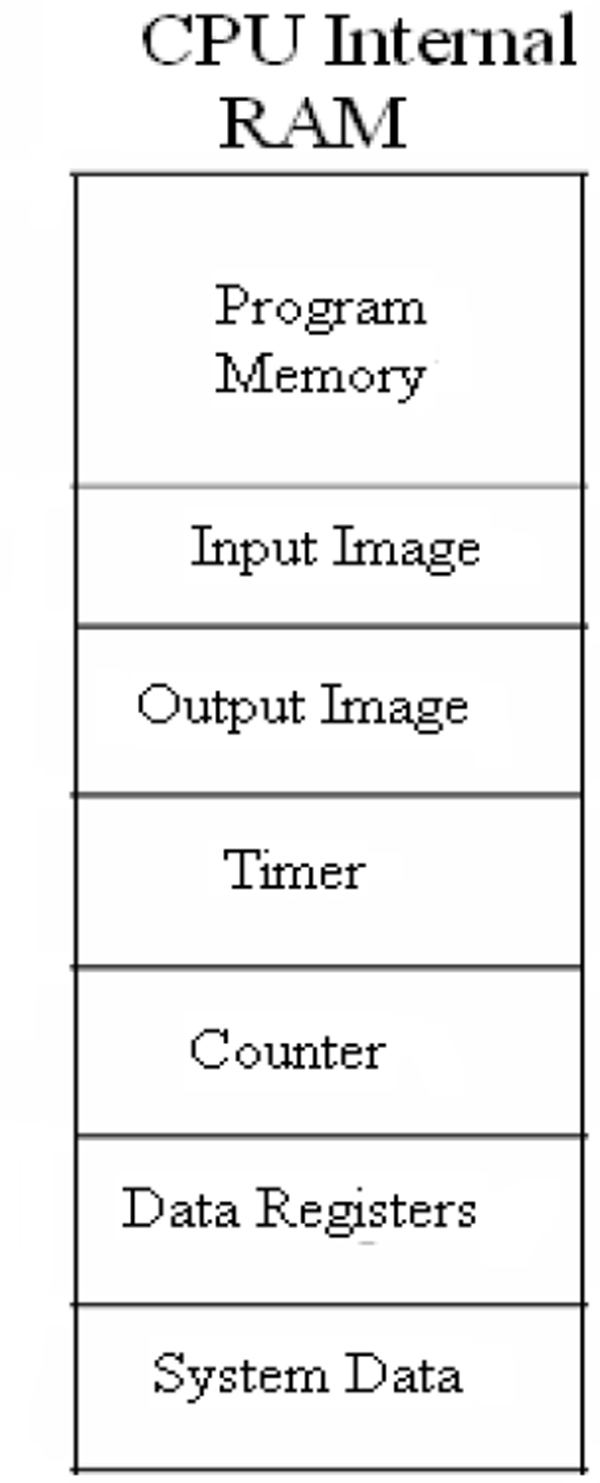

11.2 PLC processor module – memory organization 120

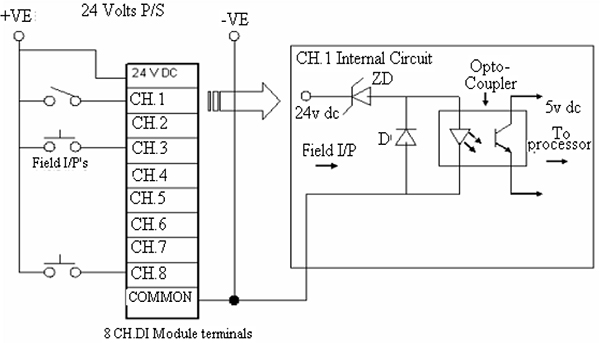

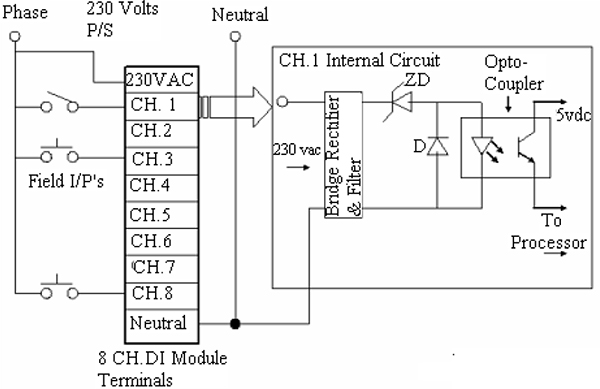

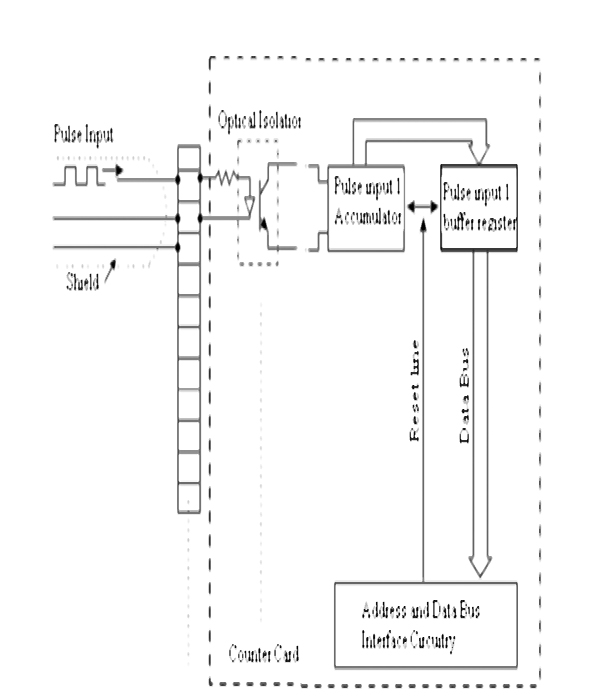

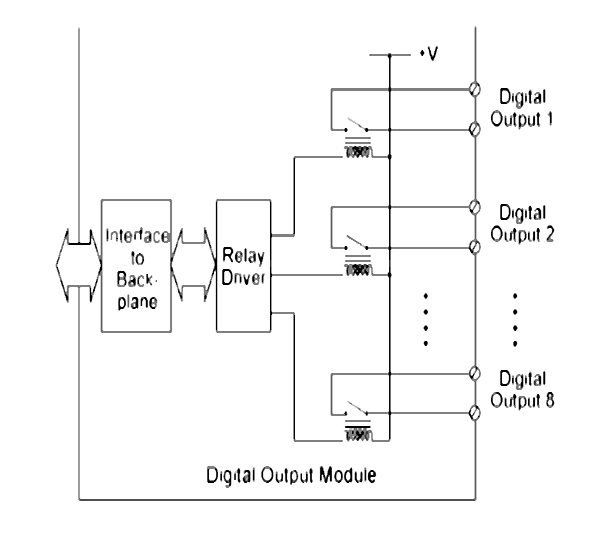

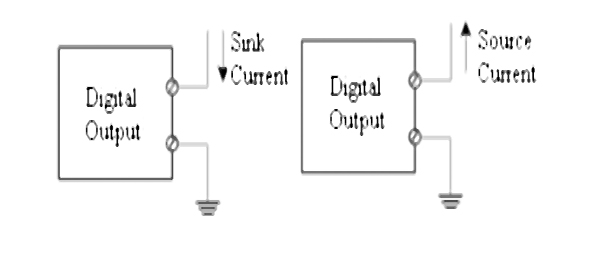

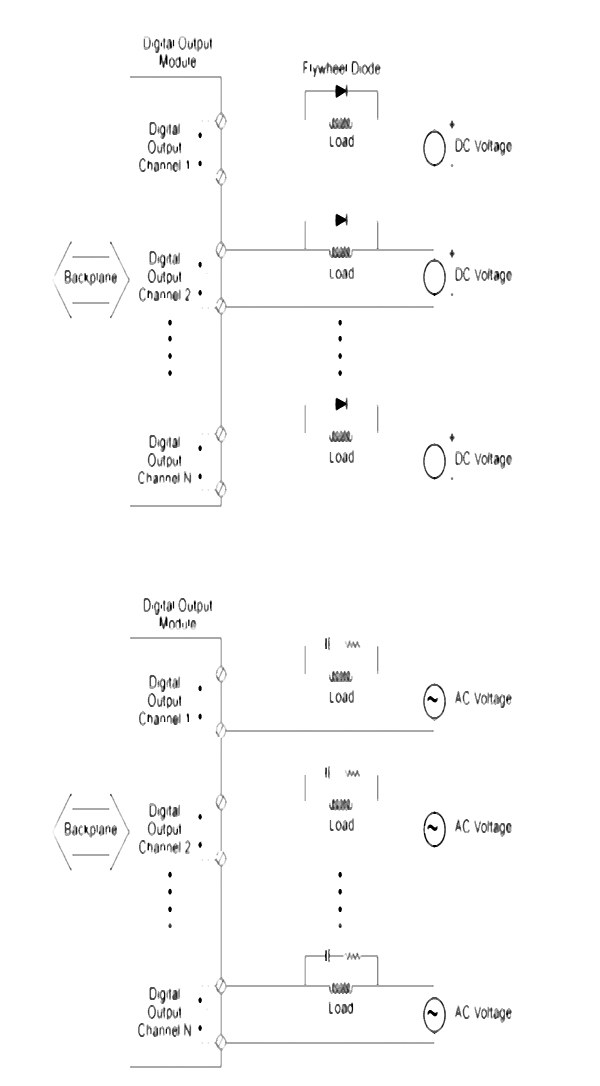

11.3 Input / Output section – module types 122

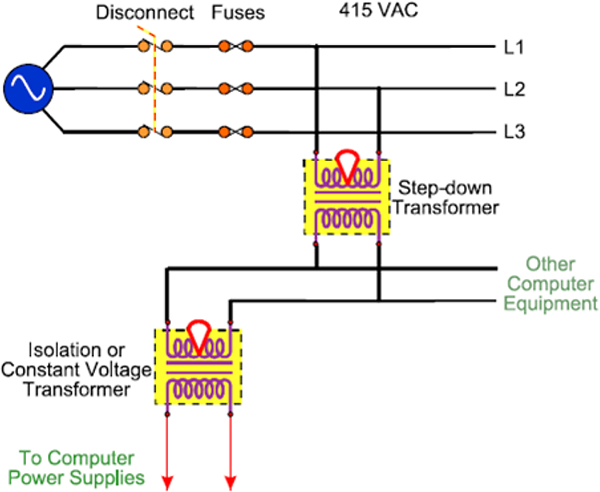

11.4 Power Supplies 127

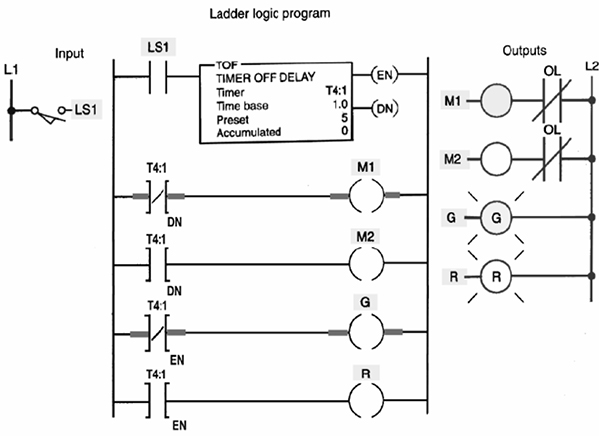

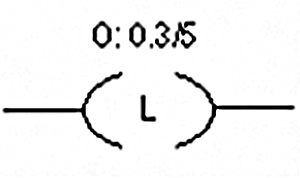

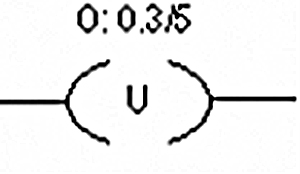

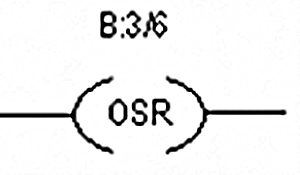

12 Fundamentals of PLC Software 131

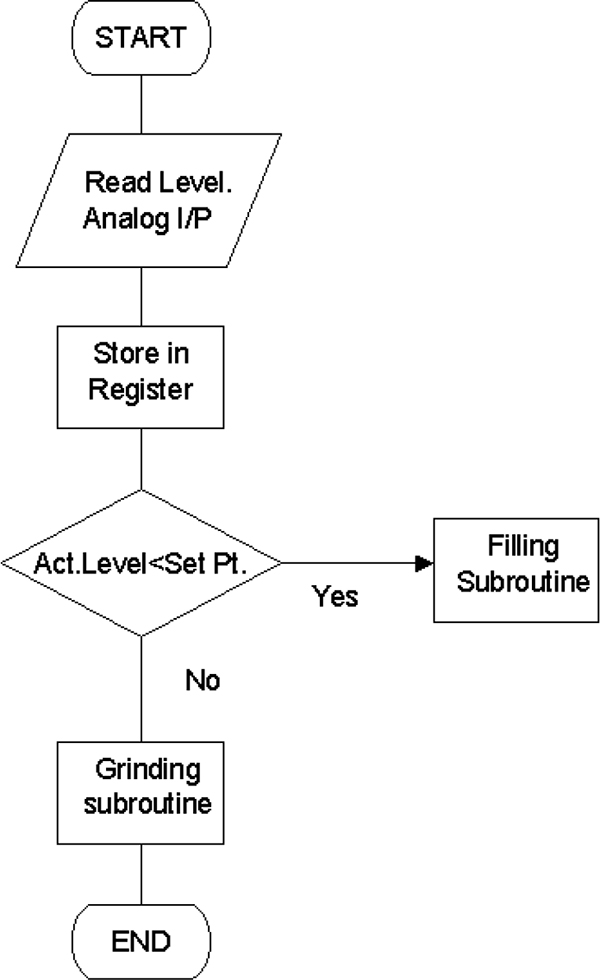

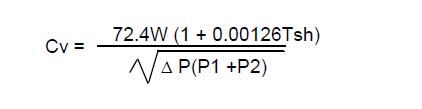

12.1 Methods of representing Logic 131

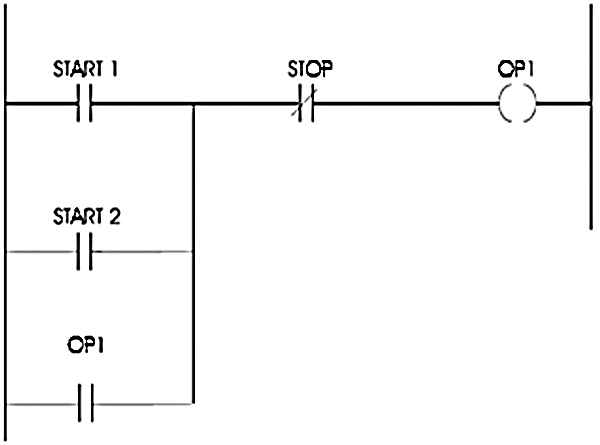

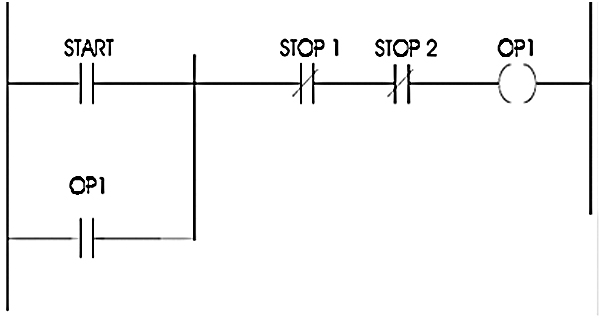

12.2 Ladder Logic Basics 134

12.3 The basic rules for programming 136

13 Introduction to SCADA Systems 139

13.1 Fundamentals 141

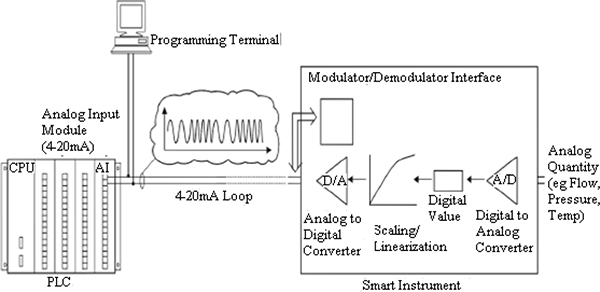

13.2 Comparison of SCADA, DCS, PLC and smart instruments 141

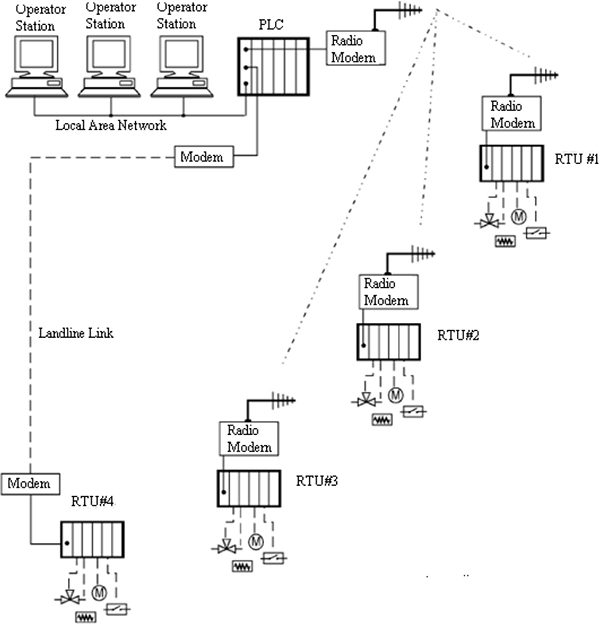

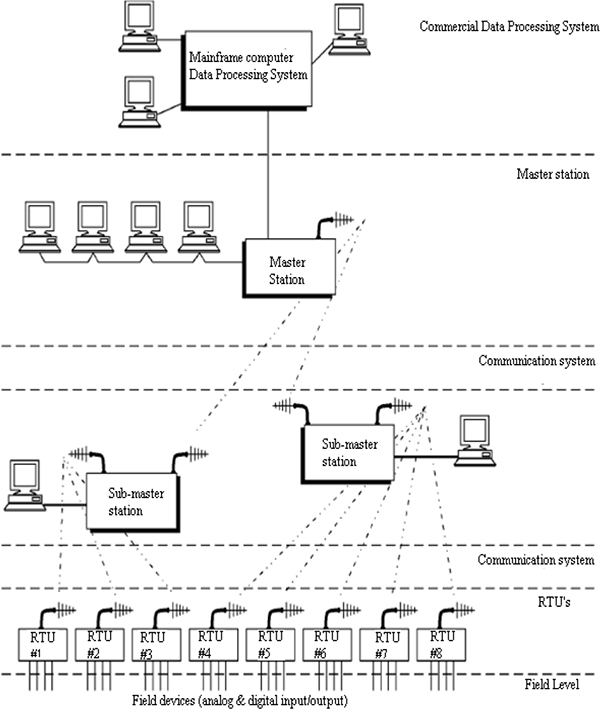

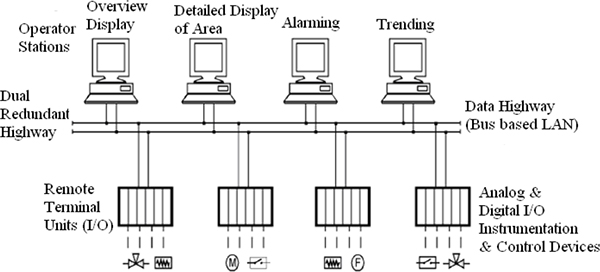

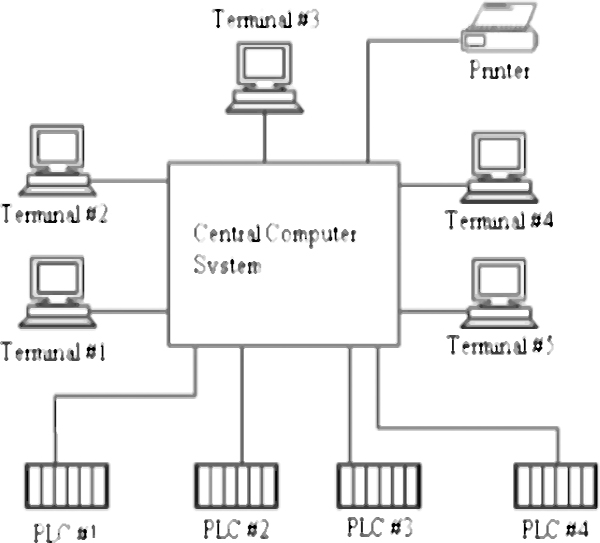

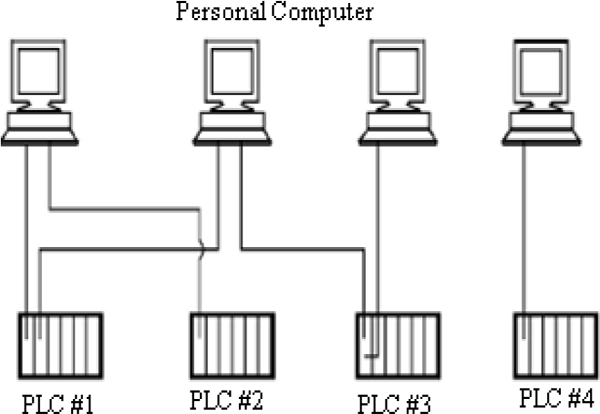

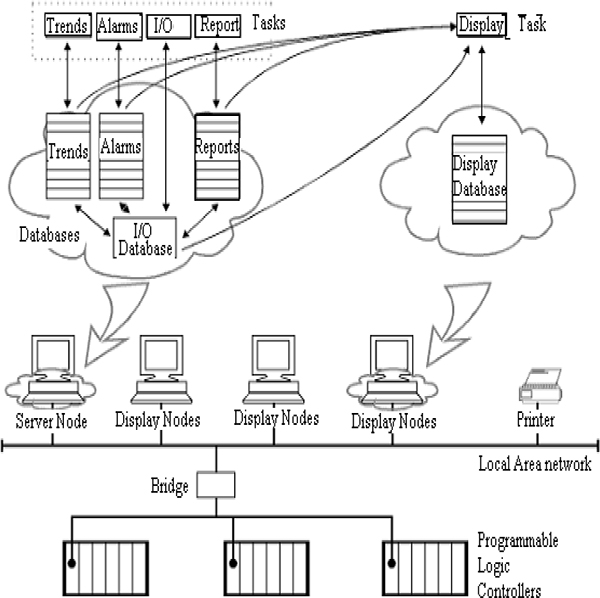

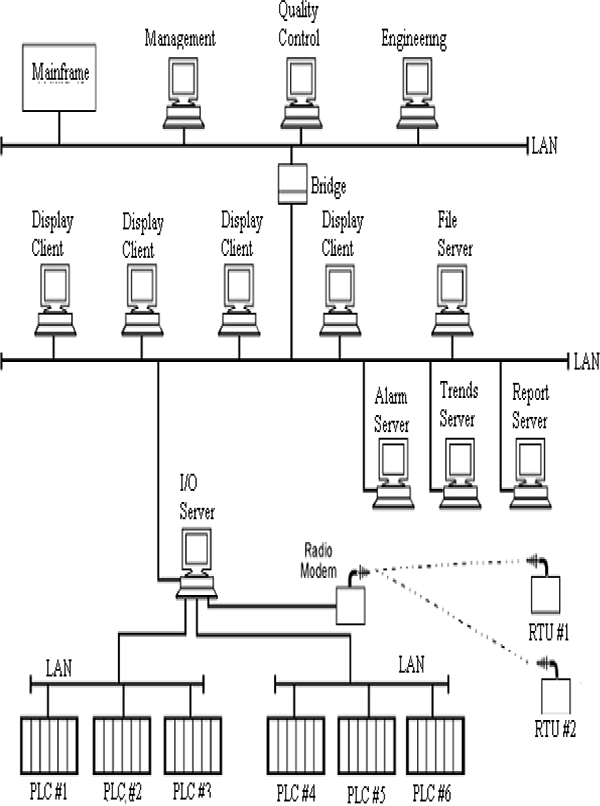

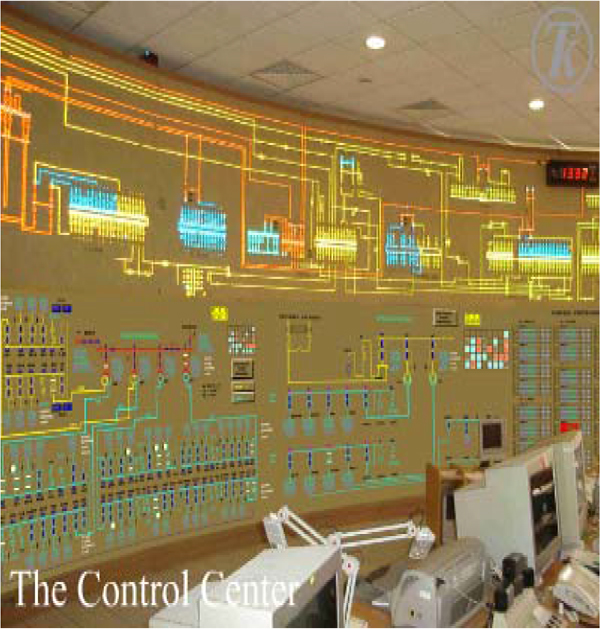

13.3 Typical SCADA installations 146

13.4 Definitions of terms 147

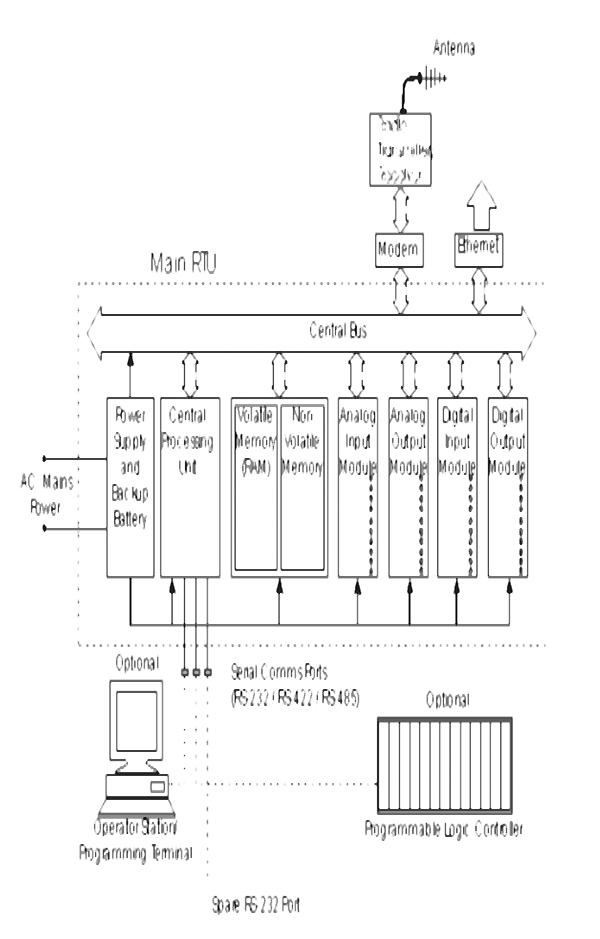

14 SCADA Systems Hardware 149

14.1 Remote Terminal Unit (RTU) structure 149

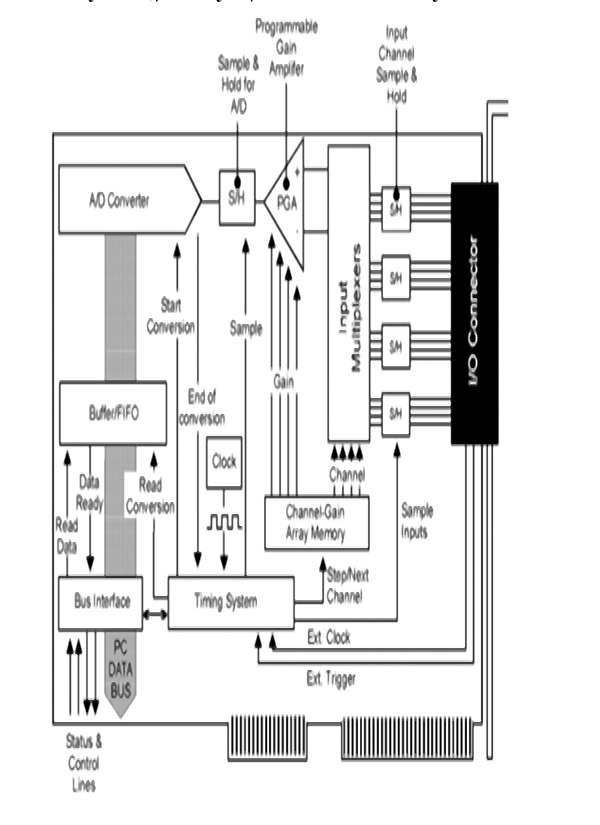

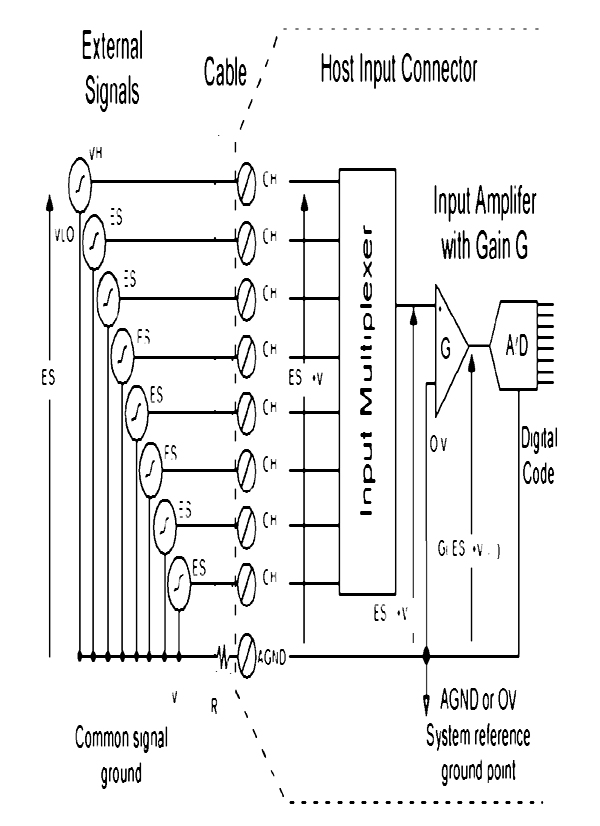

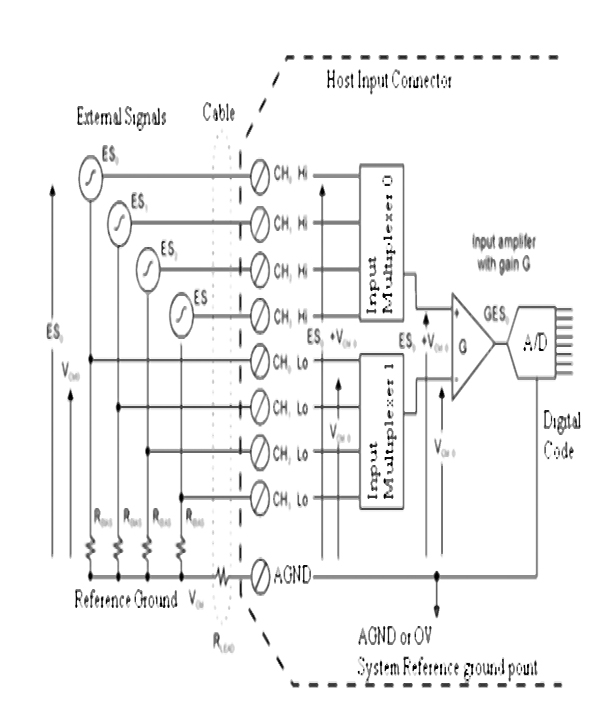

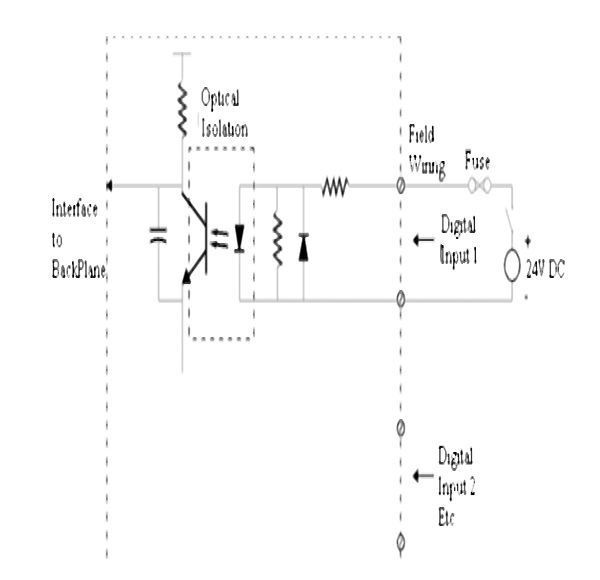

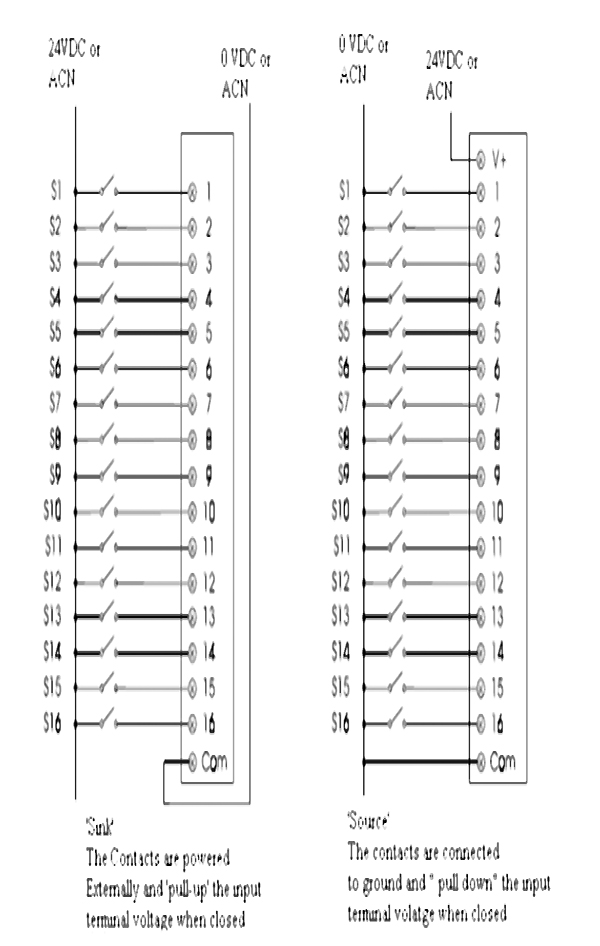

14.2 Analog and digital Input/Output modules 150

14.3 Master site structure 160

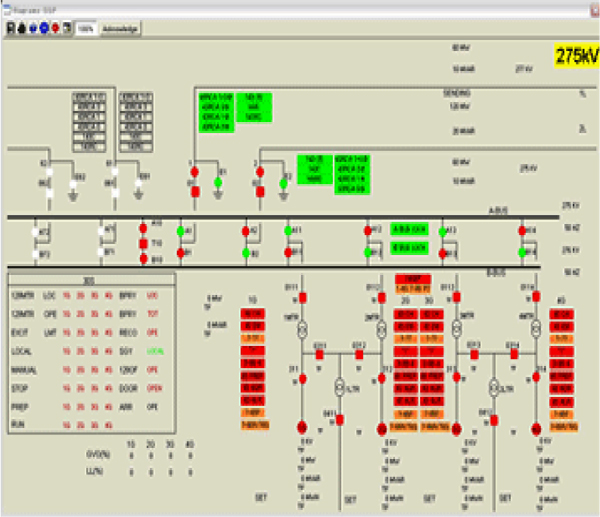

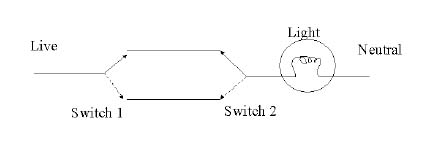

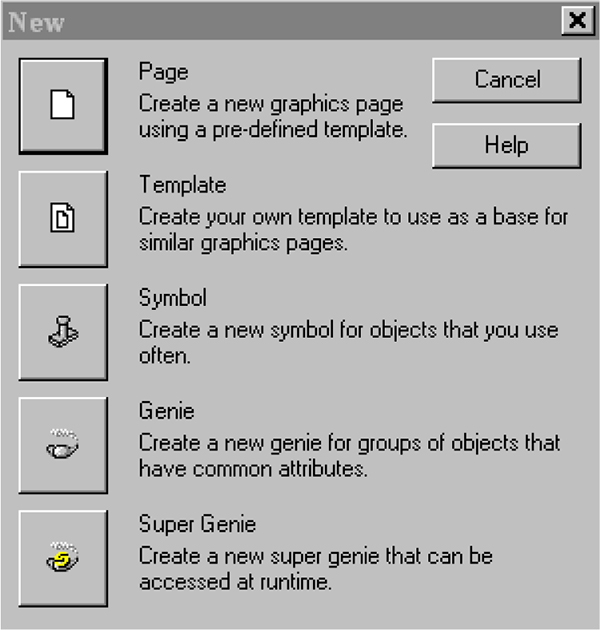

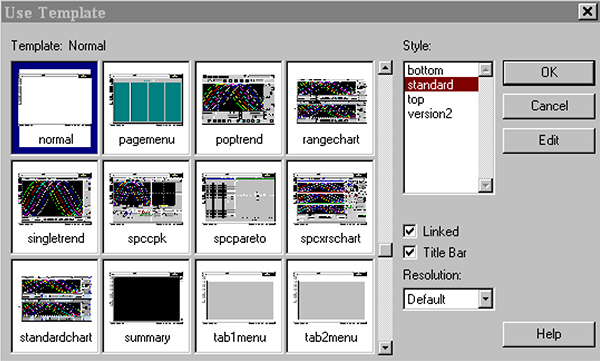

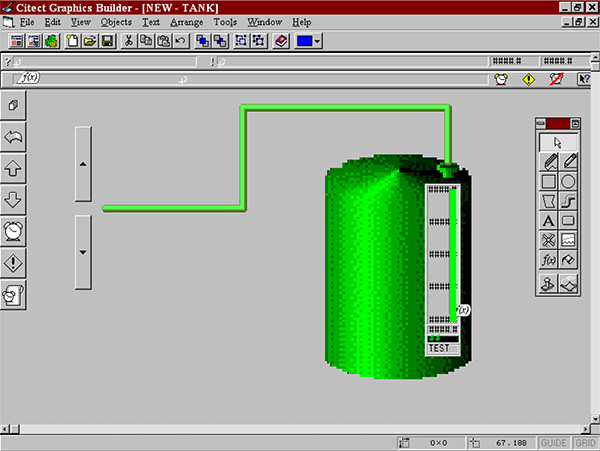

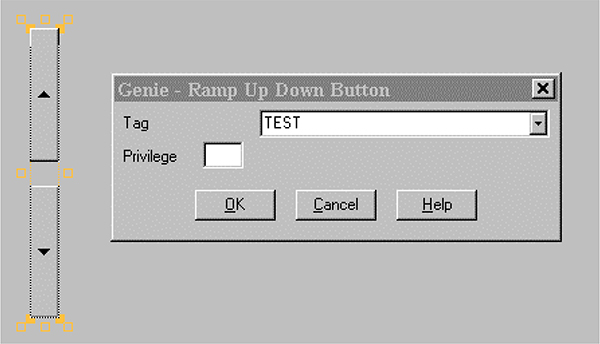

15 SCADA System Software 165

15.1 Fundamentals 165

15.2 Components of a SCADA system 166

15.3 Software – design of SCADA packages 168

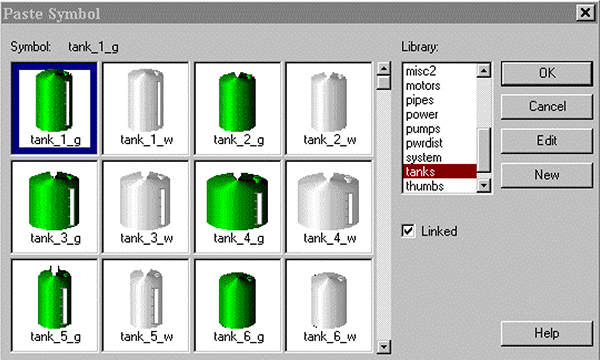

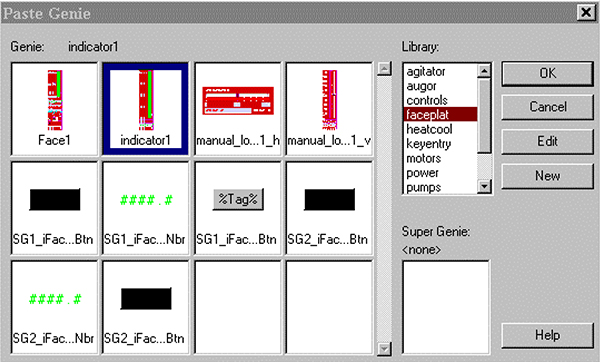

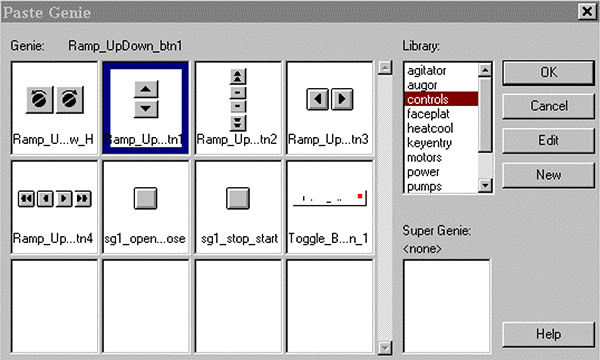

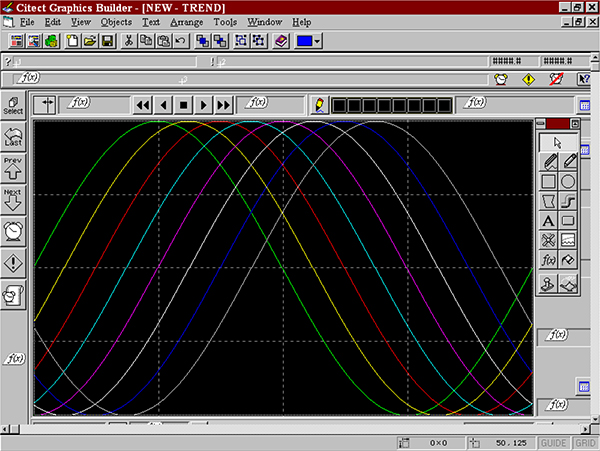

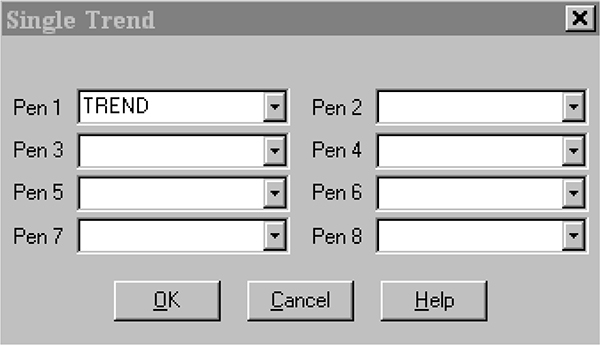

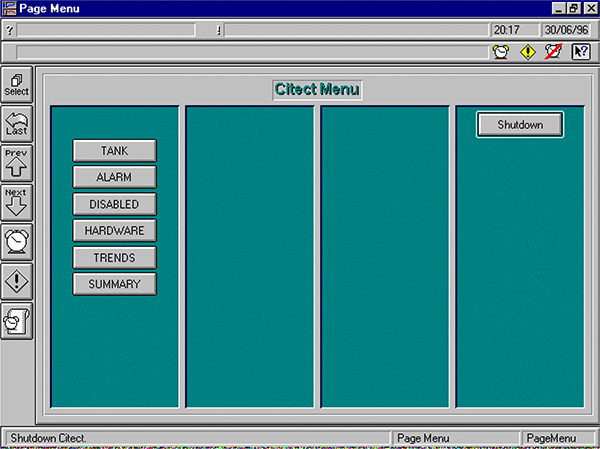

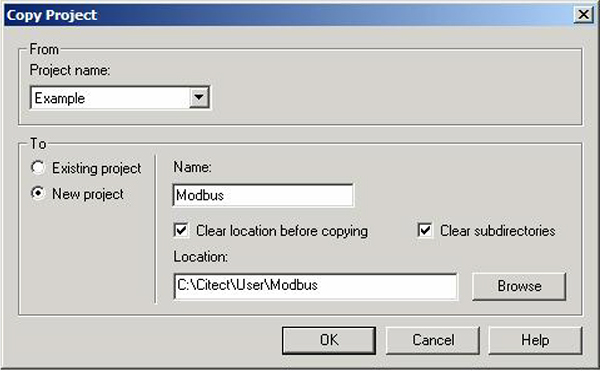

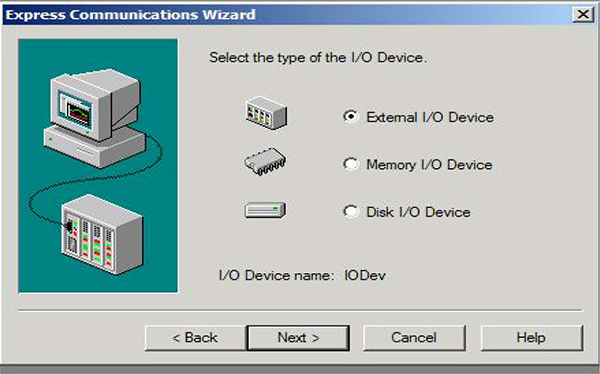

15.4 Configuration of SCADA systems 173

15.5 Building the user interface 181

16 Basics of Data Communications between PLC and SCADA Systems 189

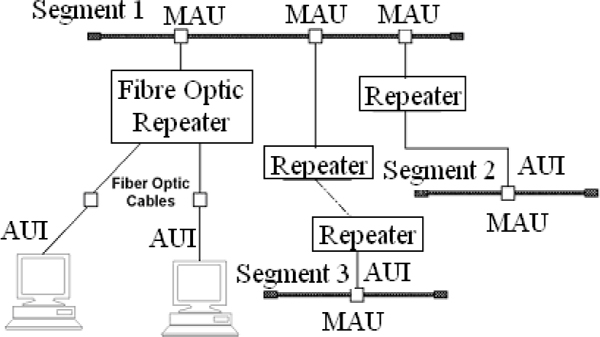

16.1 Twisted pair cables 189

16.2 Fiber optic cables 192

16.3 Public network provided services 194

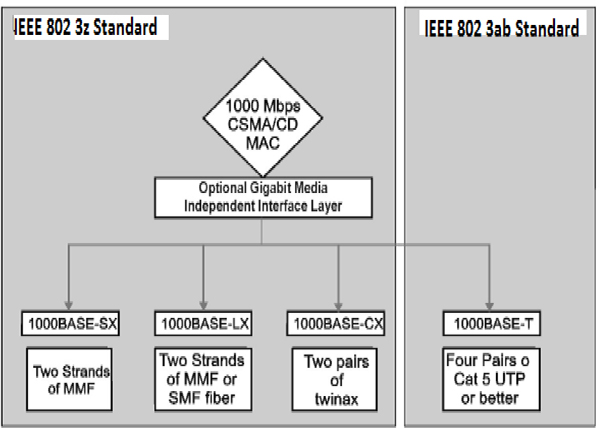

16.4 Industrial Ethernet 194

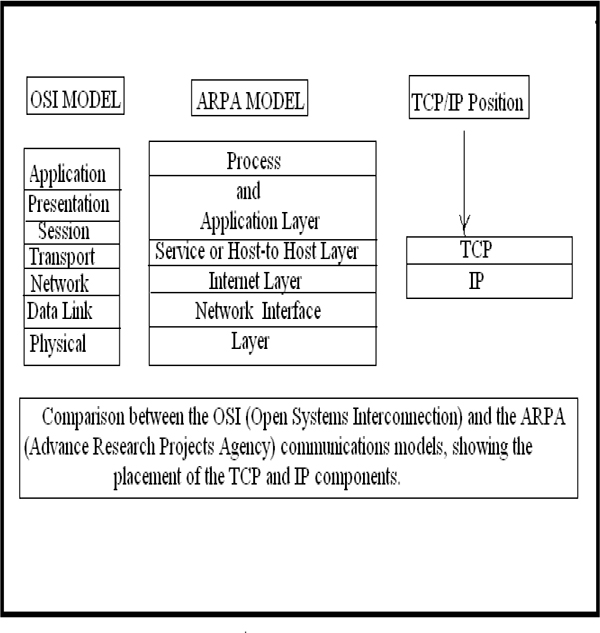

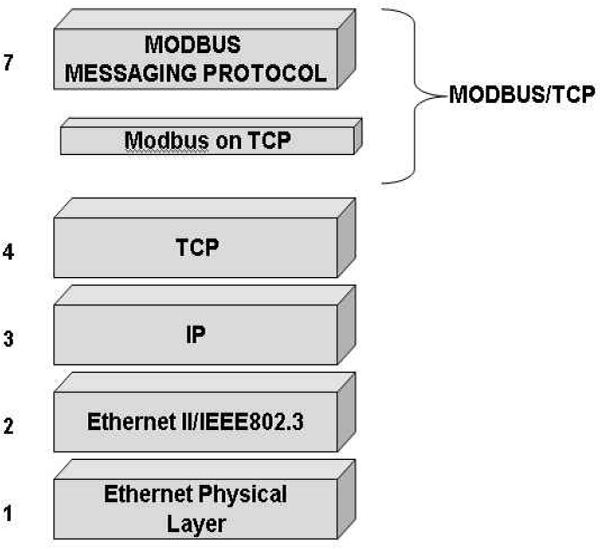

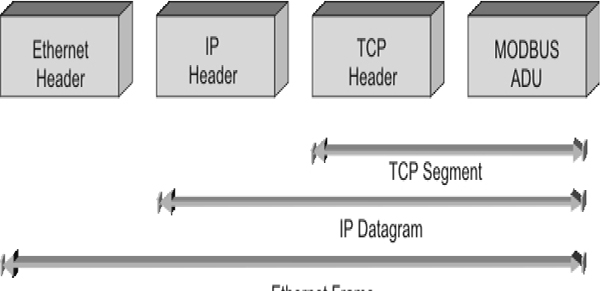

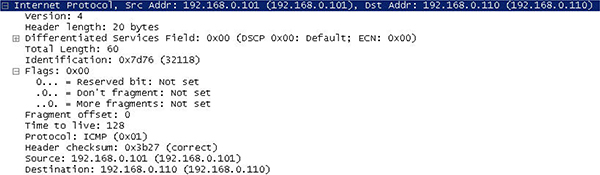

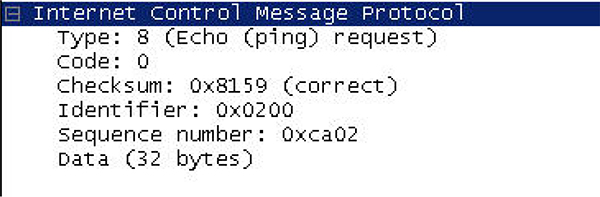

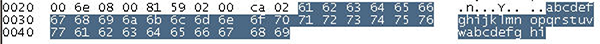

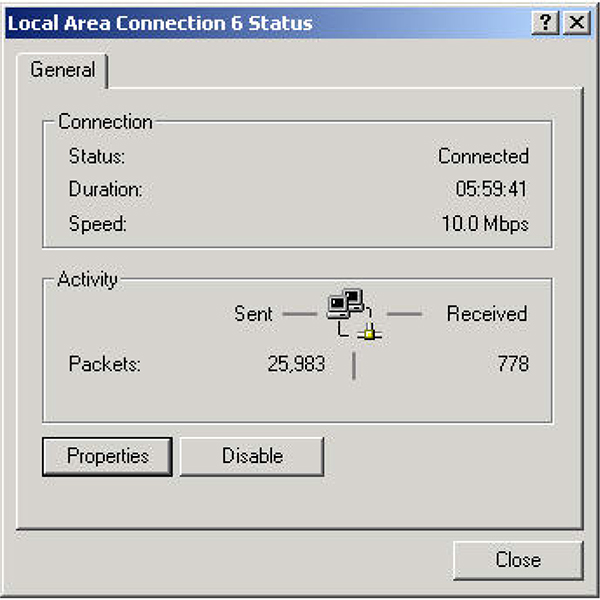

16.5 TCP/IP 197

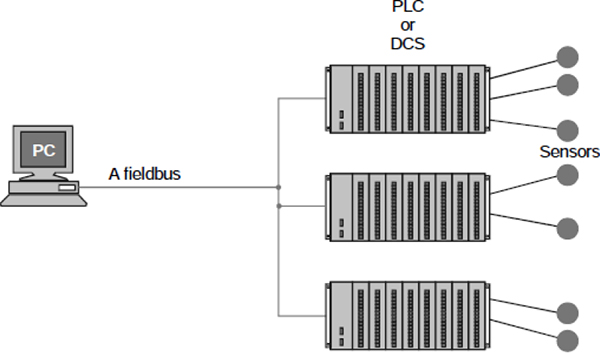

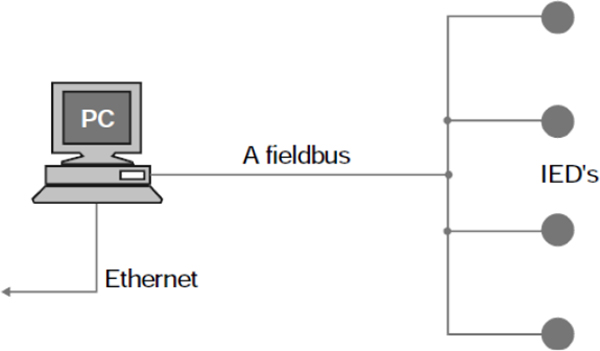

16.6 Fieldbus 199

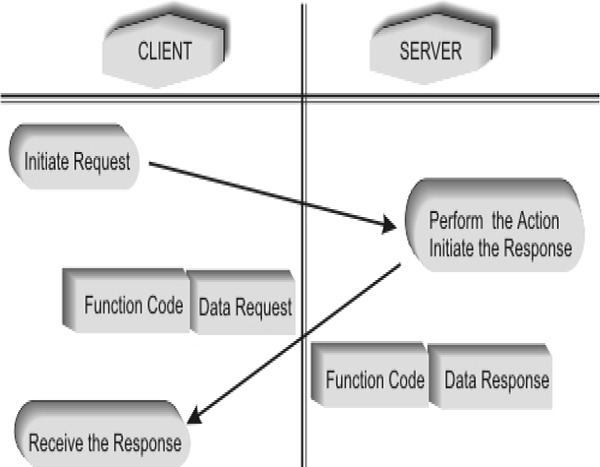

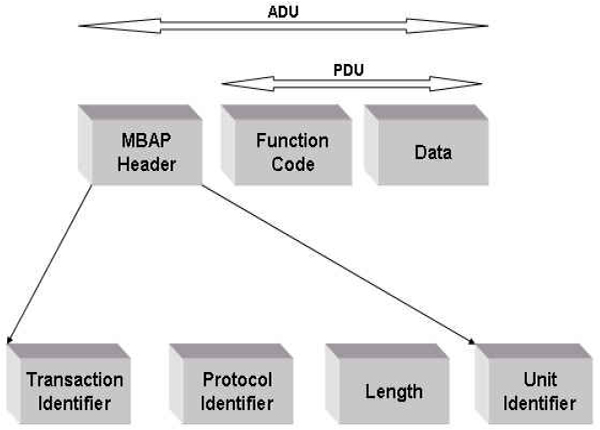

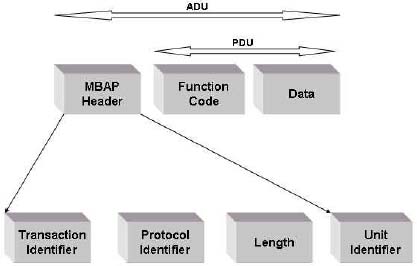

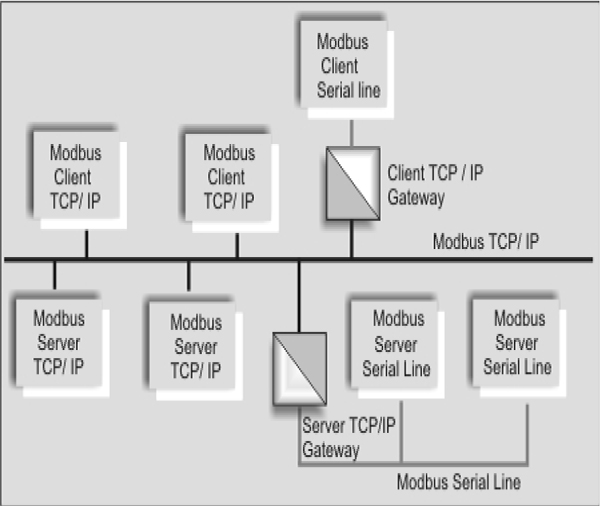

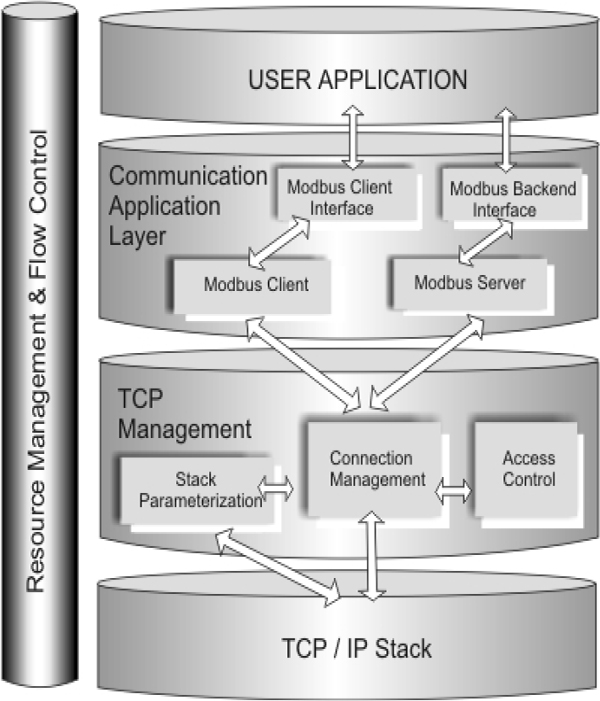

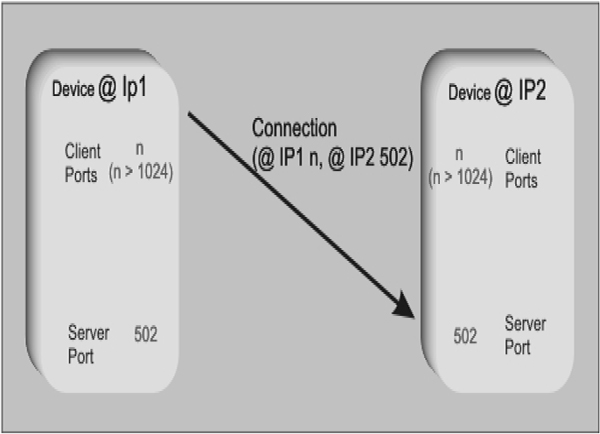

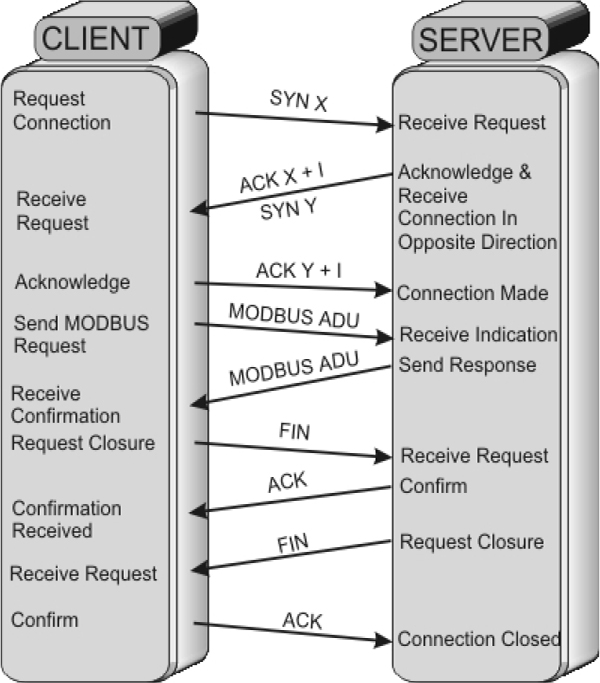

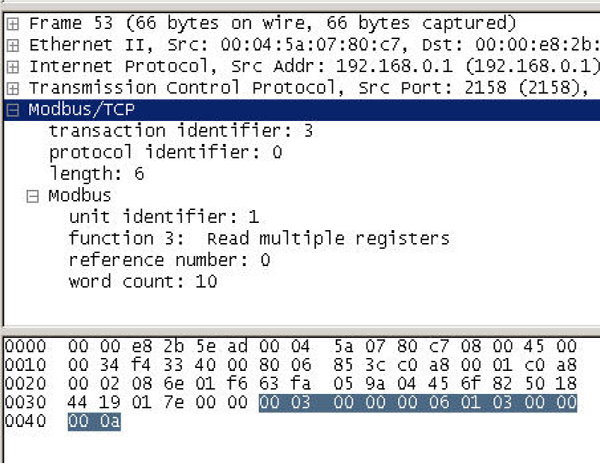

16.7 Modbus 201

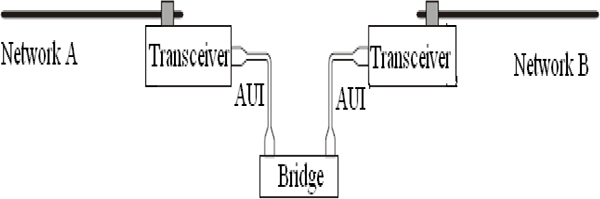

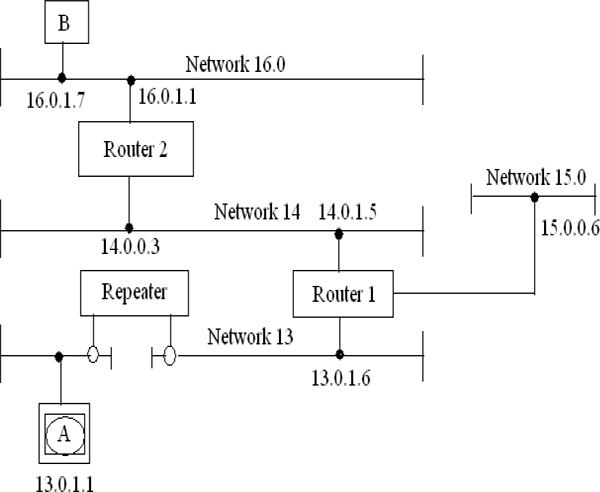

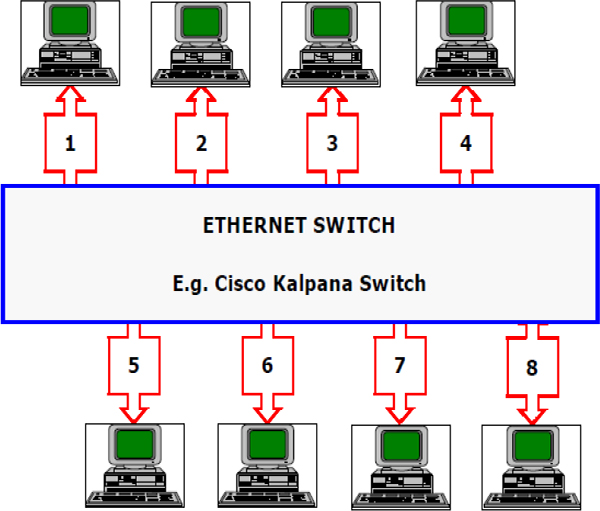

16.8 LAN Connectivity 208

16.9 SCADA network security 211

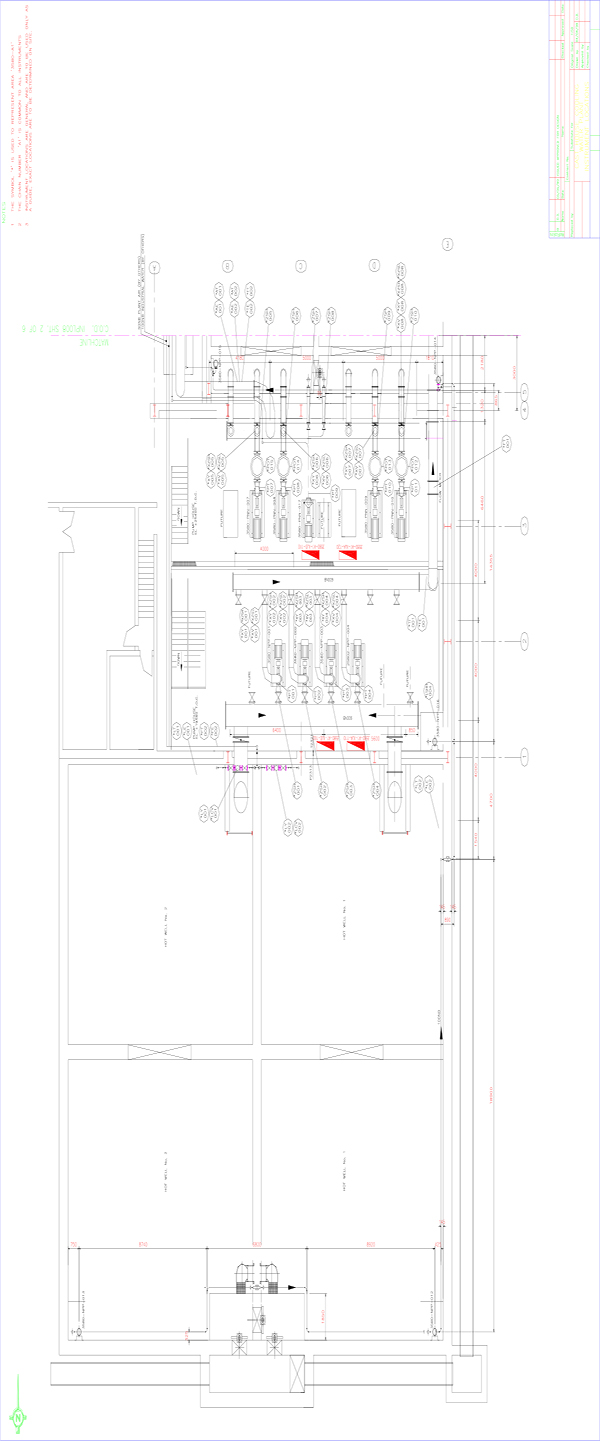

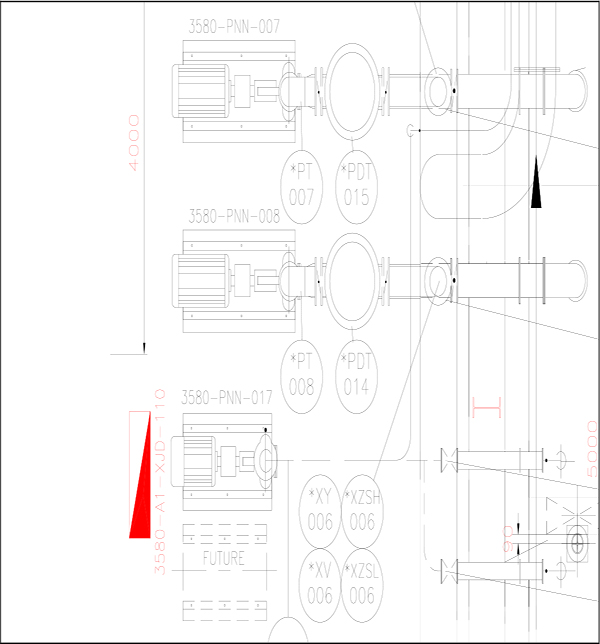

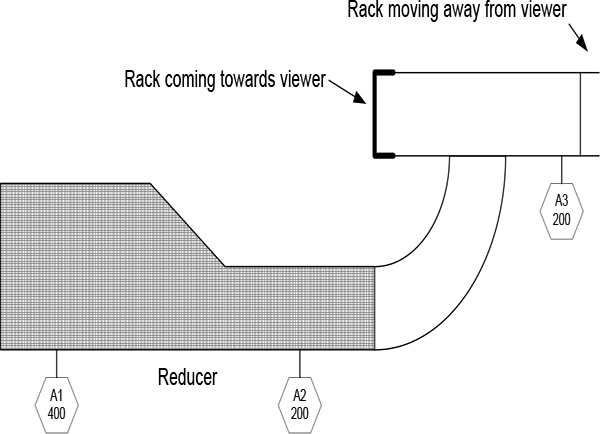

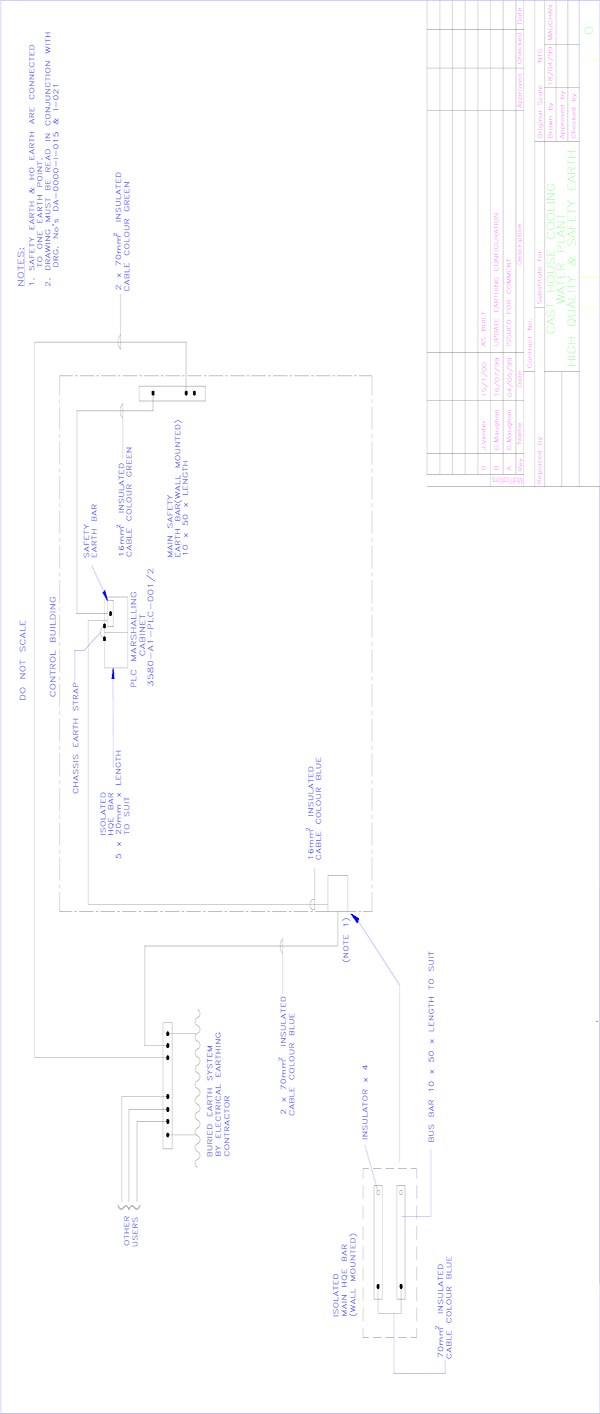

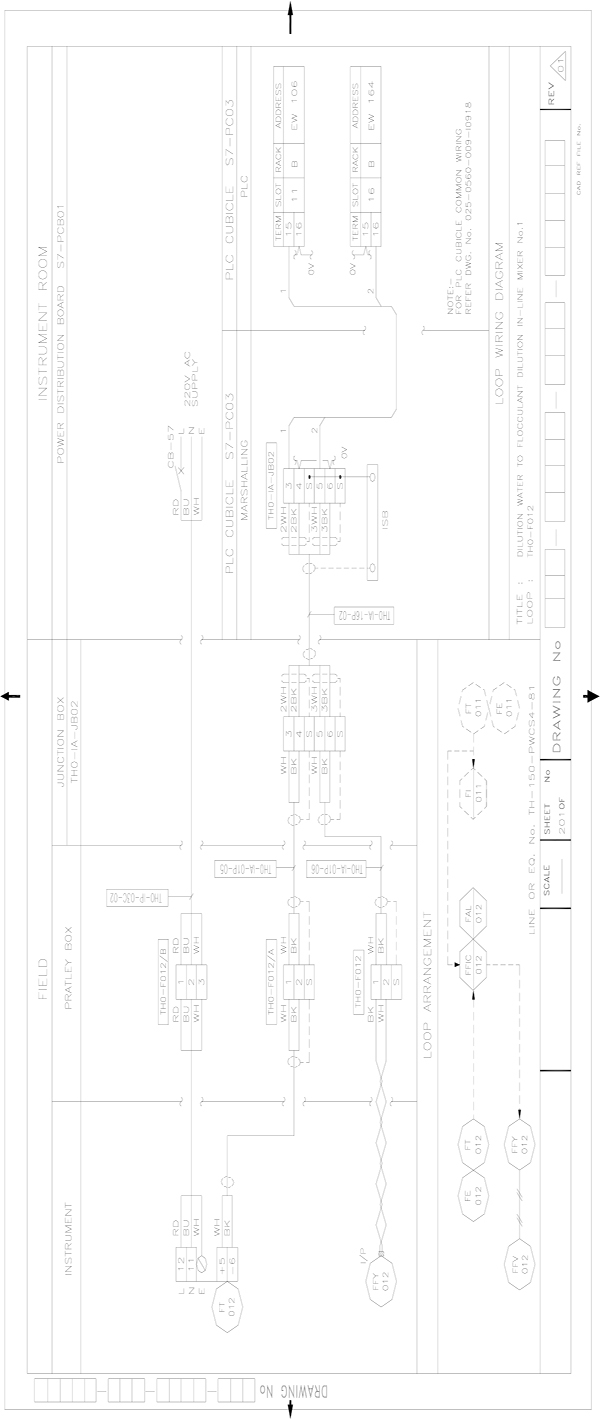

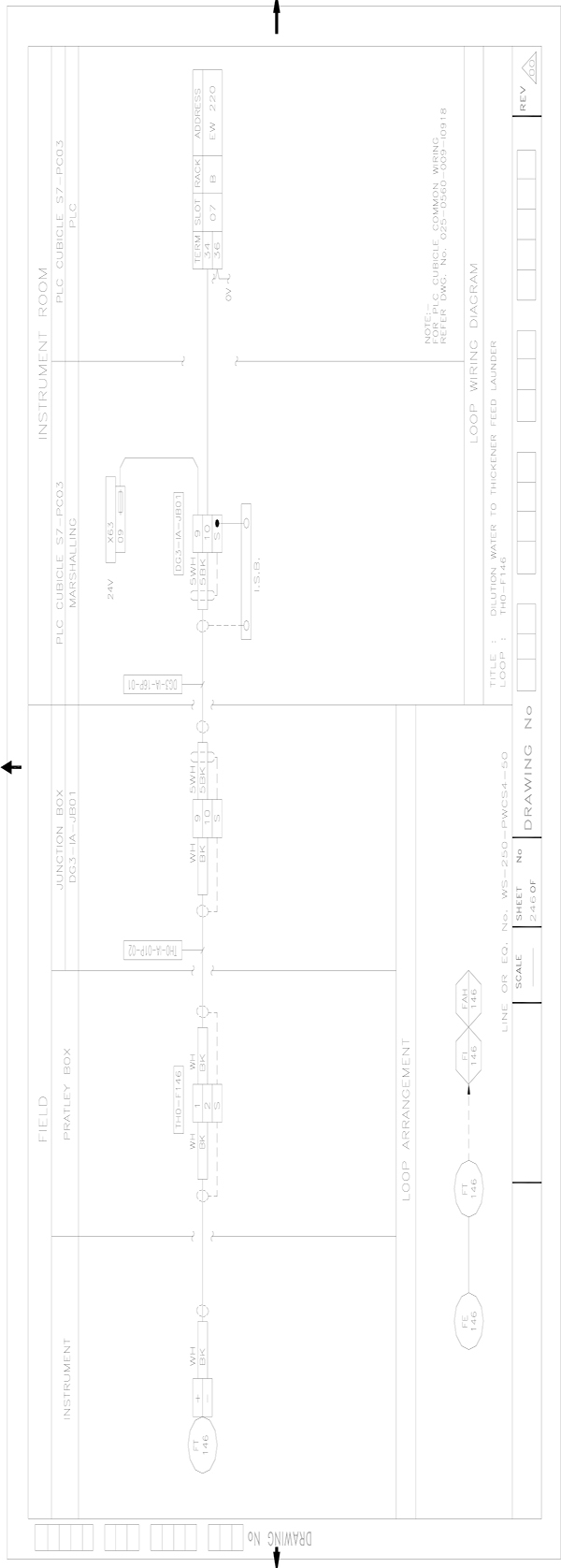

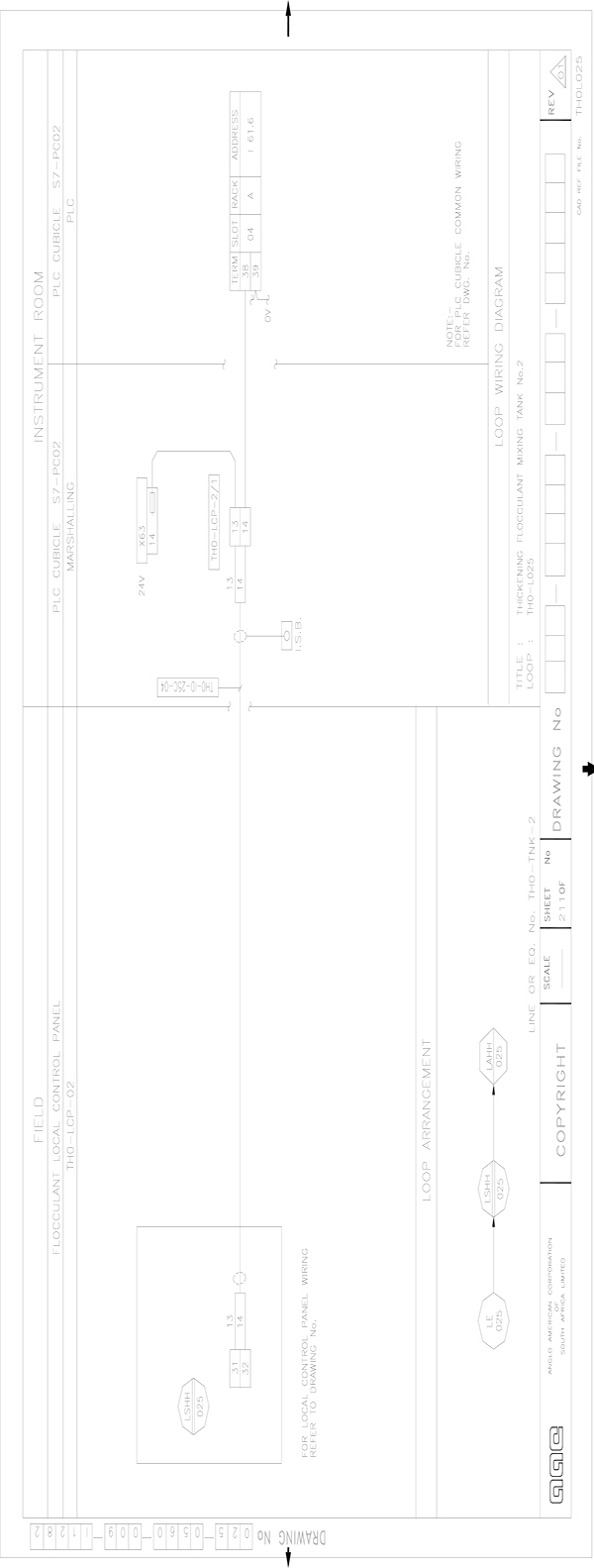

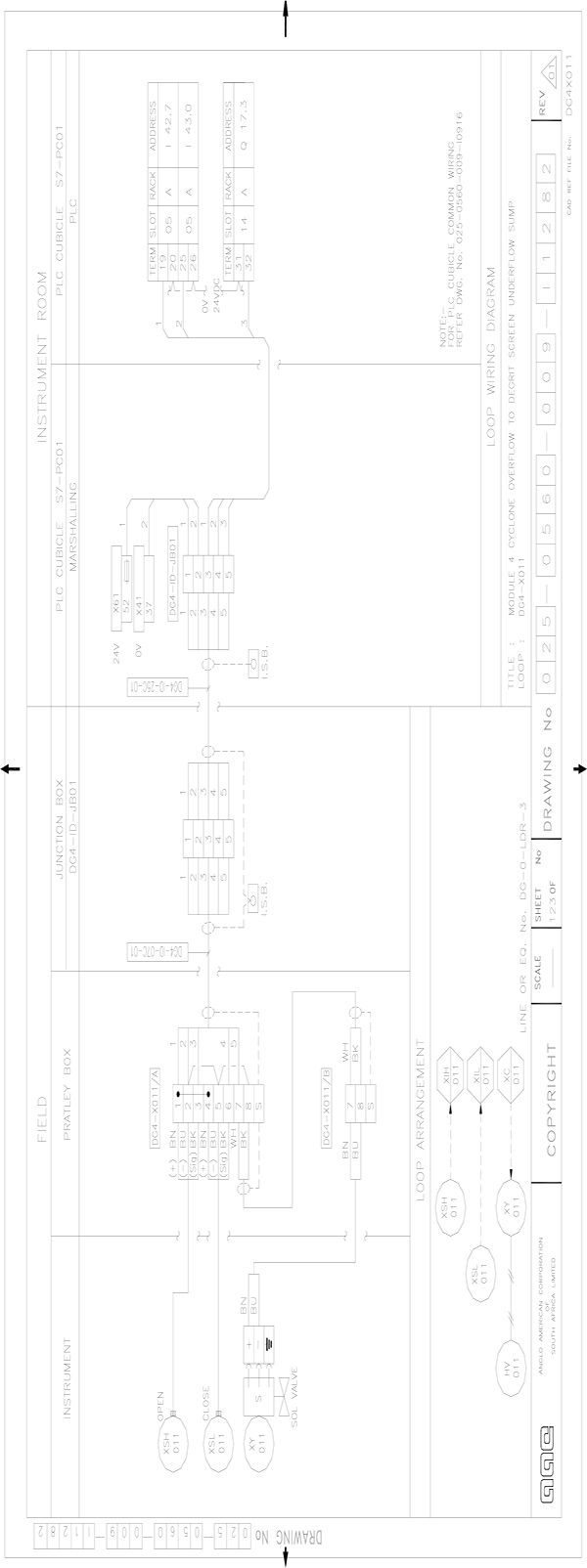

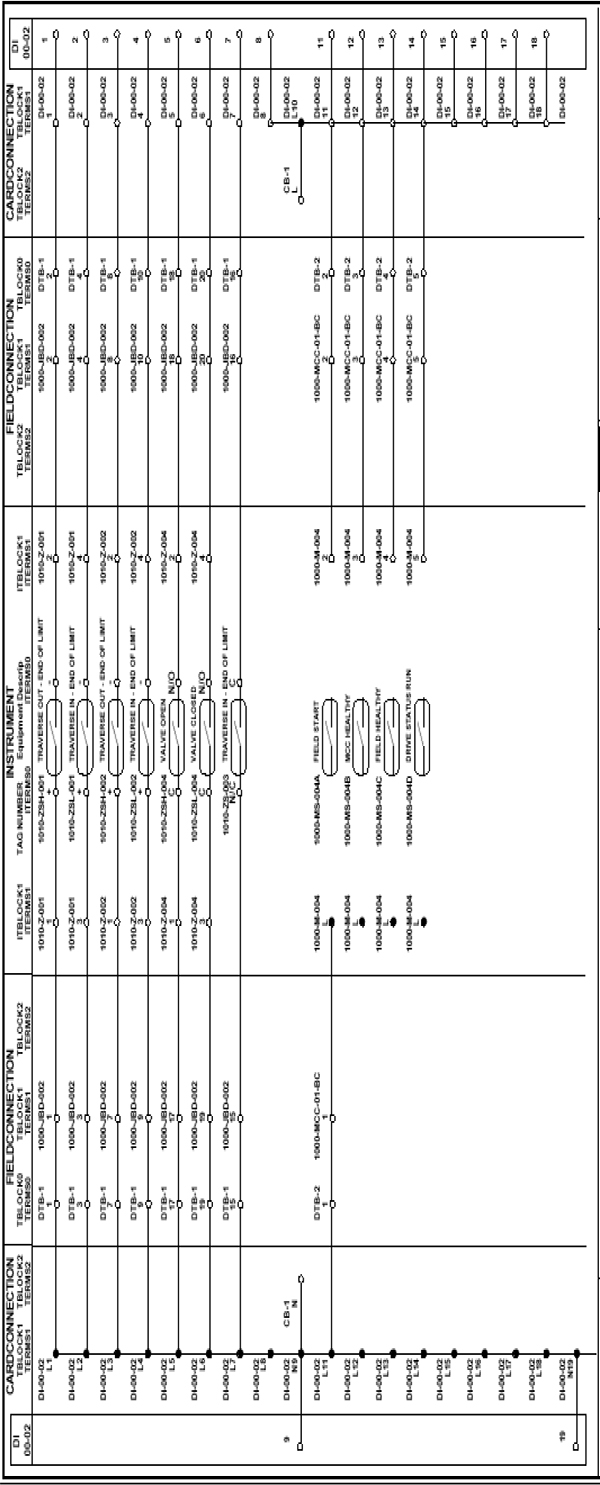

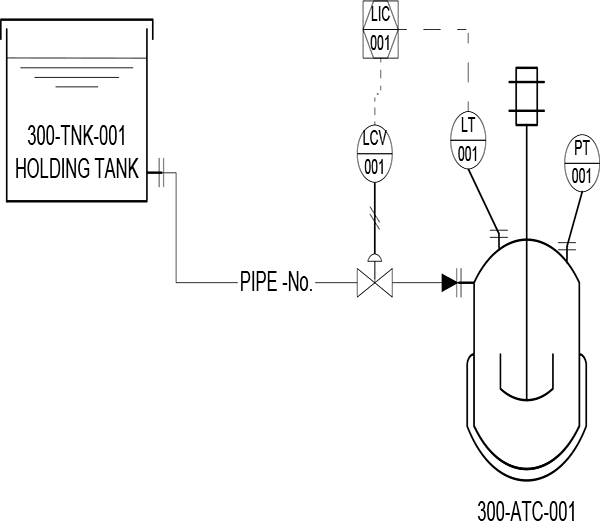

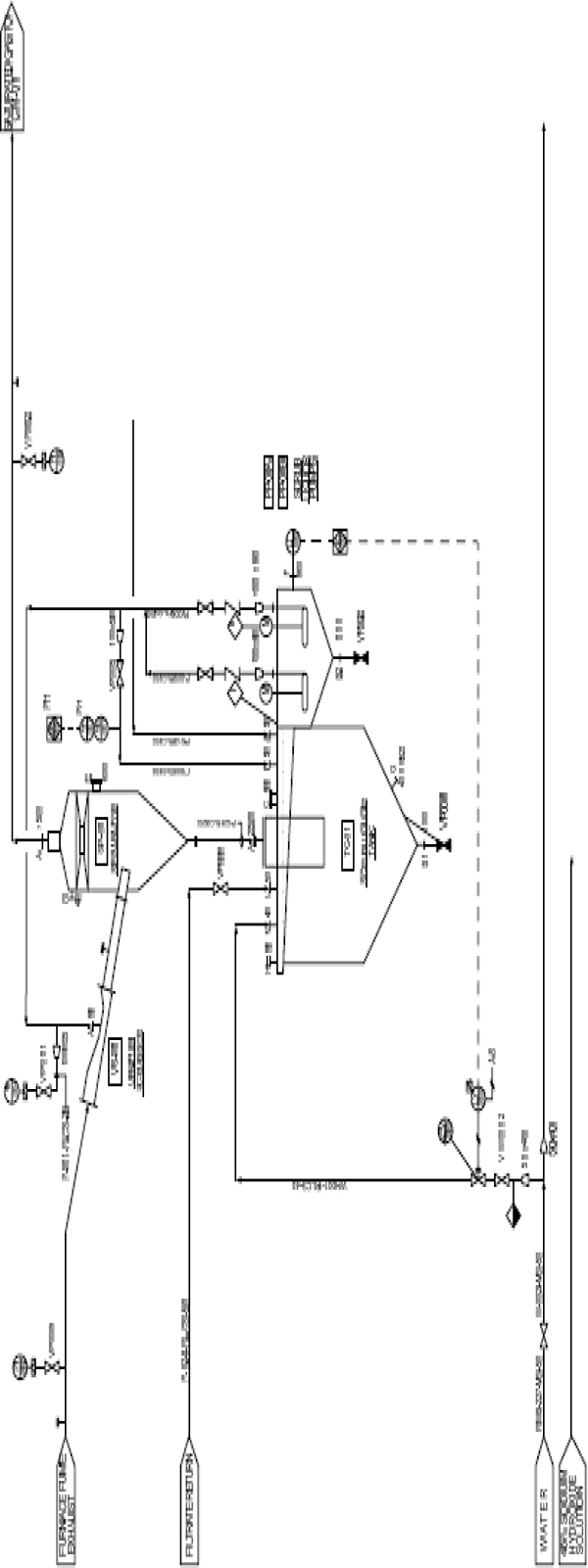

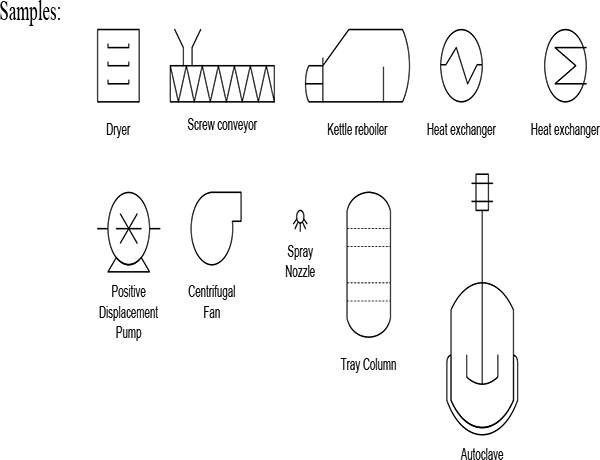

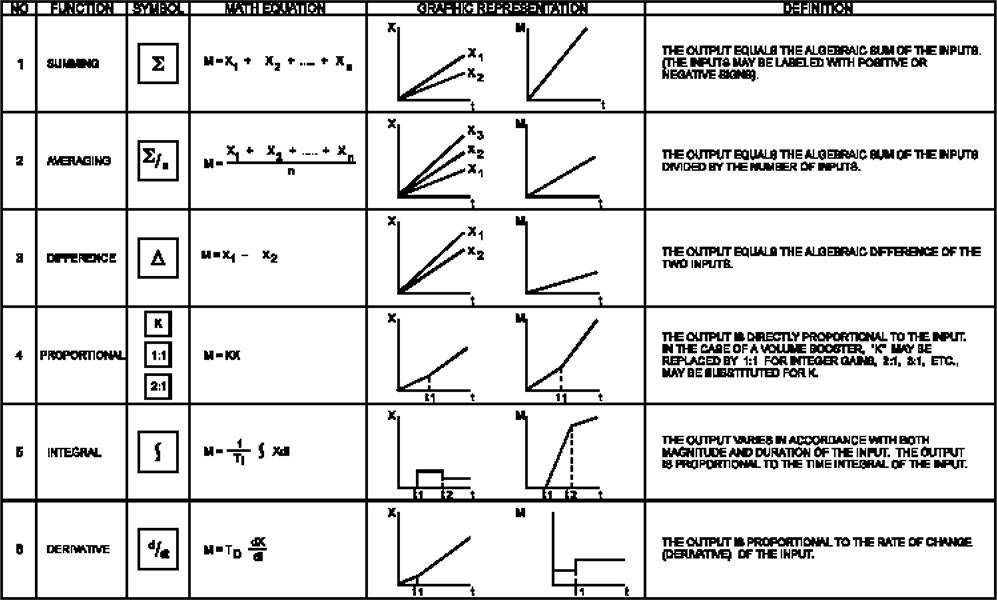

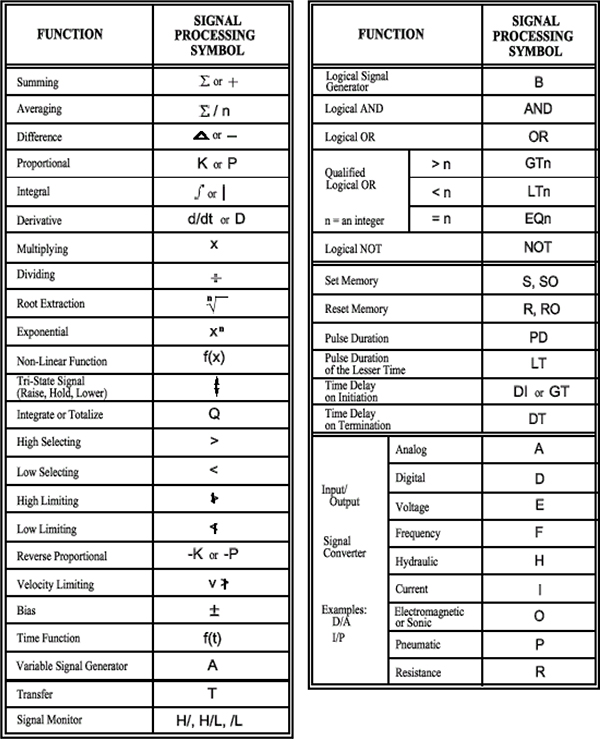

17 Drawing Types and Standards 213

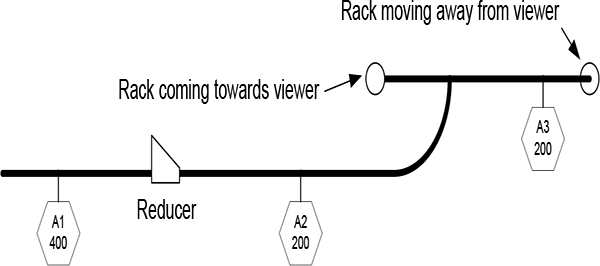

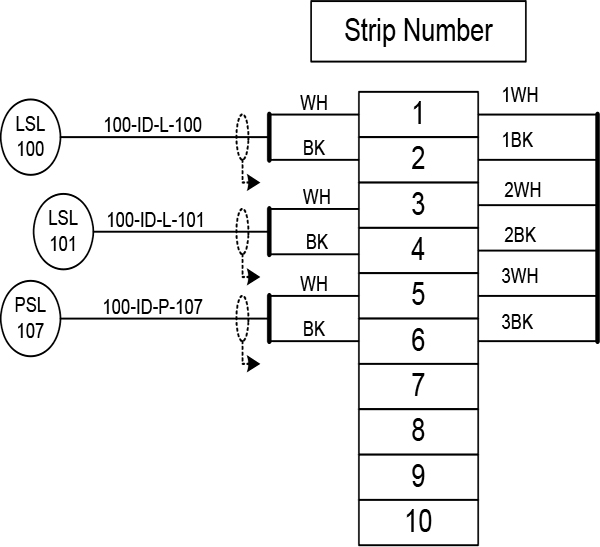

17.1 Understanding diagram layouts and formats 213

17.2 Cross references 229

17.3 Piping & Instrumentation Diagrams (P&ID) 229

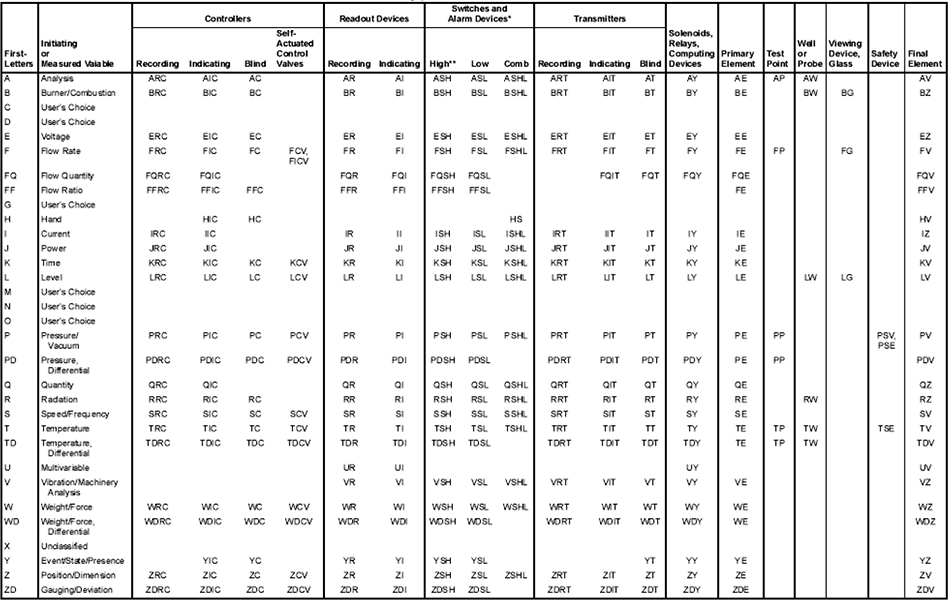

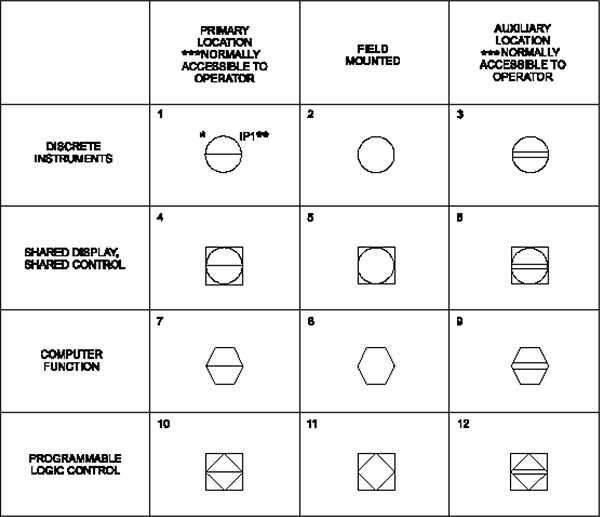

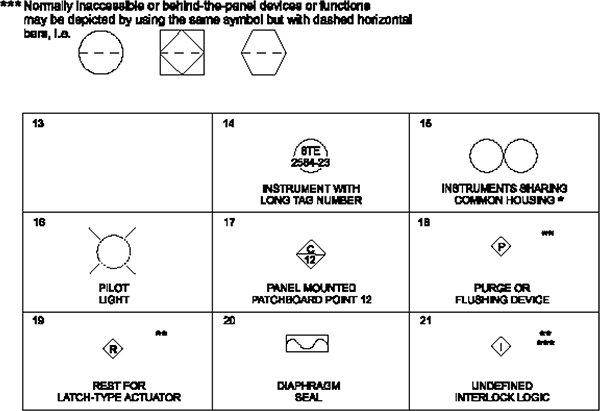

Appendix A Symbols and Numbering 238

Appendix B Practical Exercises 257

Learning objectives

- Overview of instrumentation and control

- Key building blocks of PLCs and SCADA systems

- Outline of the course

1.1 Overview of instrumentation and control

In an instrumentation and control system, data is acquired by measuring instruments and transmitted to a controller, typically a computer. The controller then transmits data (control signals) to control devices, which act upon a given process.

The integration of systems with each other enables data to be transferred quickly and effectively between different systems in a plant along a data communications link. This eliminates the need for expensive and unwieldy wiring looms and termination points.

Productivity and quality are the principal objectives in the good management of any production activity. Management can be substantially improved by the availability of accurate and timely data. From this, we can surmise that a good instrumentation and control system can facilitate both quality and productivity.

The main purpose of an instrumentation and control system, in an industrial environment, is to provide the following:

- Control of the processes and alarms

- Control of sequencing, interlocking and alarms

- An operator interface for display and control

- Management information

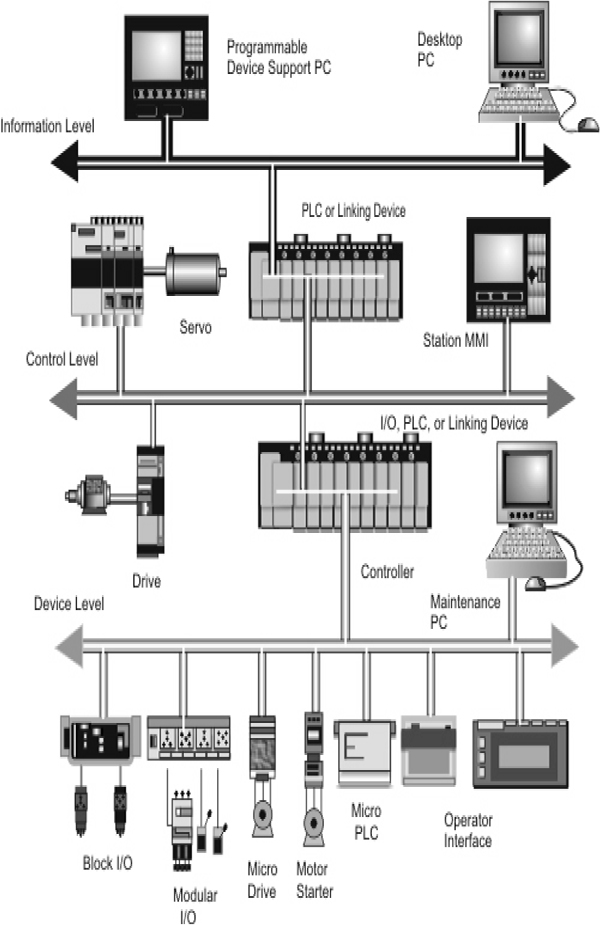

- Distributed Control Systems (DCSs)

- Programmable Logic Controllers (PLCs)

- Supervisory Control and Data Acquisition (SCADA) system

- Smart instrumentation systems

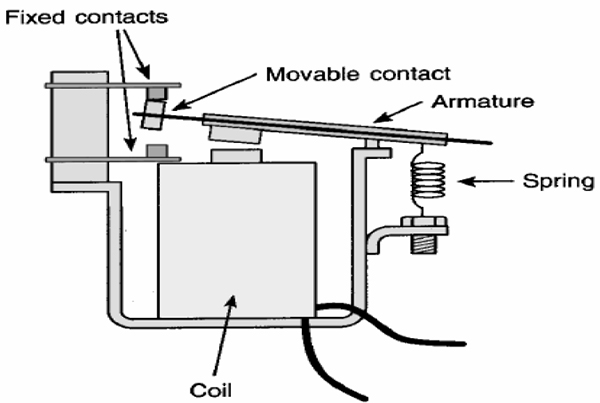

1.2 Key building blocks of PLC and SCADA systems

1.2.1 PLC Systems

In the past, processes were controlled manually, which was a very tedious job. During the early years of control, hard-wired relays were used to control the same process. However, relays could not meet all the needs of modern times, and a faster solution was required. Simply, when a change of control logic was required, the entire hardware wiring needed to be changed. This was time-consuming as well as tiresome. Then, PLCs were developed to automate this process, hence the origin of the so-called “ladder diagram” programming.

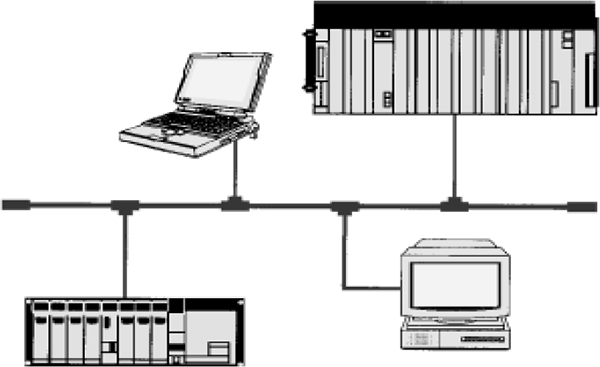

Since the late 1970s, PLCs have replaced hard-wired relays with a combination of ladder logic software and solid state electronic input and output modules. They are often used in place of RTUs as they offer a standard hardware solution, which is very economically priced.

The PLCs have become important building blocks for automated systems. Because they have constantly increased in capability while decreasing in cost, PLCs have solidified their position as the device of choice for a wide variety of control tasks.

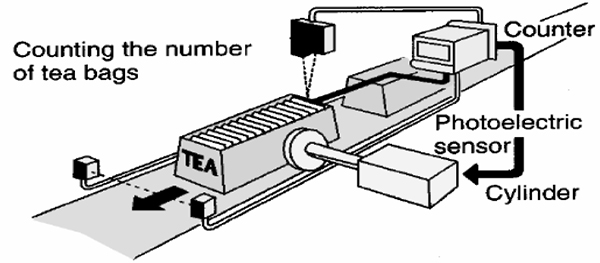

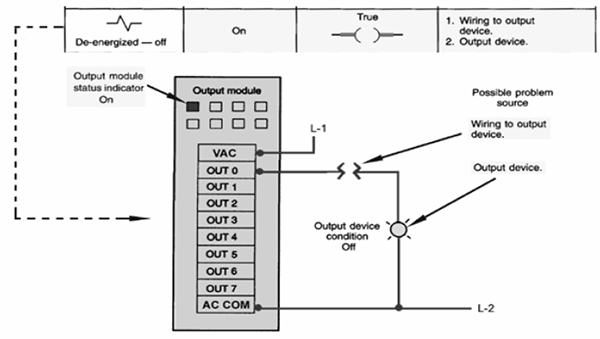

In brief terms, a PLC is a digital electronic device that contains a programmable (changeable) memory in which a sequence of instructions is stored. Those instructions enable the PLC to perform various useful control functions such as relay logic, counting, timing, sequencing, and arithmetic computation. These functions are usually used to monitor and control individual machines or complex processes via inputs and outputs (I/Os). Input/output modules connected to the PLC provide analogue or digital electronic interfaces to the external world. The PLC reads inputs, processes them through a program, and generates outputs.

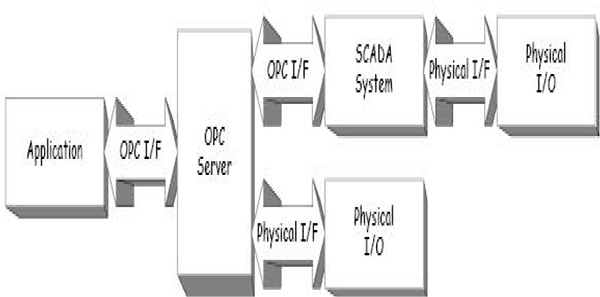

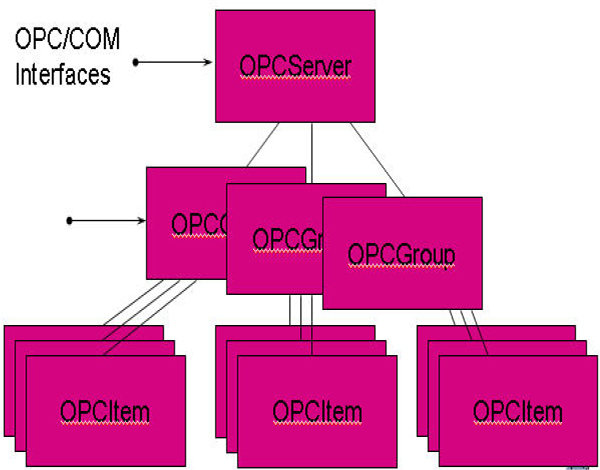

1.2.2 SCADA system

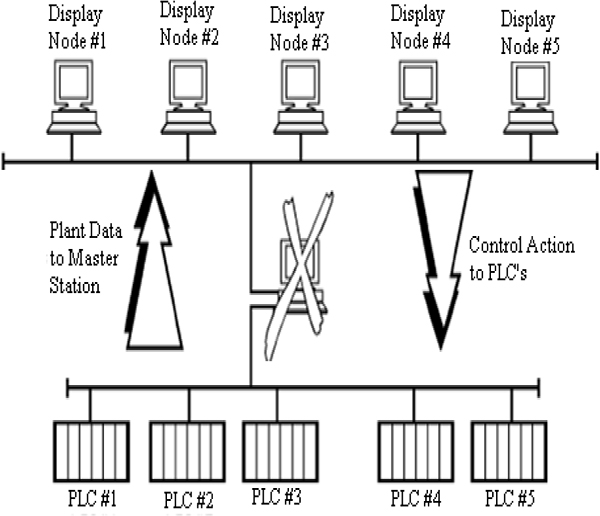

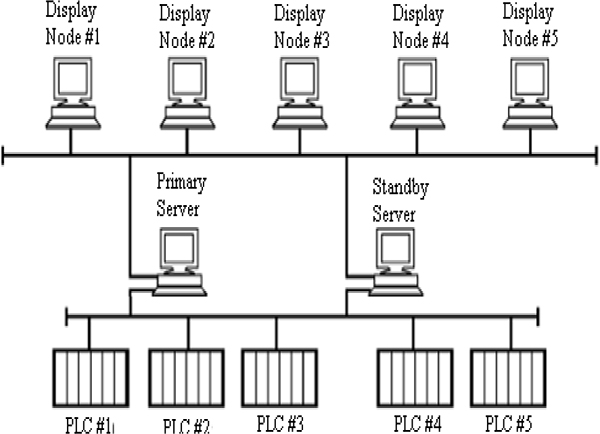

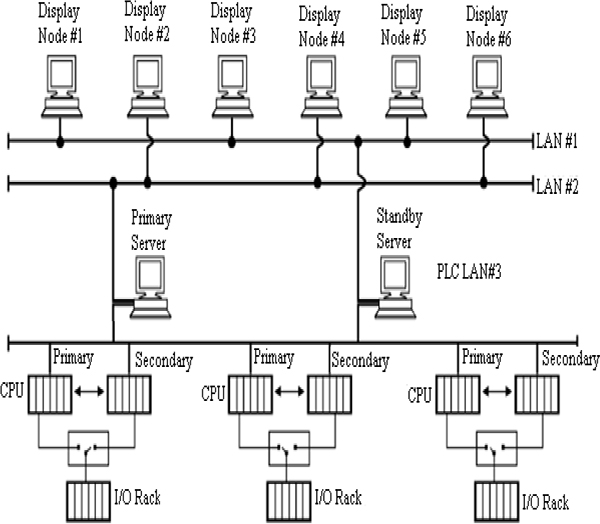

A Supervisory Control and Data Acquisition (SCADA) system means a system consisting of a number of Remote Terminal Units (RTUs) collecting field data connected back to a master station via a communications system.

In the first generation of telemetry systems, the objective was to simply have an idea of the system operation. Telemetry is all about remote monitoring. The basic approach was to gather data (generally restricted to measurements of the same type), and relay those results to another location.

These early systems were followed by data acquisition systems, which also captured and stored data. Finally, the control aspect was added as well. It needs to be emphasized that applications of SCADA cover all types of services, not just electrical systems.

SCADA found its first application in the power generation and transmission sectors of the electric utility industry. The interconnection of large power grids in the Midwestern and the Southern U.S. (1962) created the largest synchronized system in the world. The blackout of 1965 prompted the U.S. Federal Power Commission to urge closer coordination between regional coordination groups (Electric Power Reliability Act of 1967), and led to the consequent formation of the National Electric Reliability Council (1970). The importance and urgency of closer coordination was re-emphasized with the northeast blackout of 2003. Transmission SCADA became the base to manage the transmission grid.

In the late 1980s and the early 1990s, when SCADA vendors delivered reasonably priced “small” SCADA systems on low-cost hardware architectures to the small co-ops and municipality utilities, the first real deployments of distribution SCADA systems began. As the market expanded, SCADA vendors who had been providing transmission SCADA began to take notice of the distribution market.

1.3 Outline of the course

The title of the course is – Fundamentals of Instrumentation, Process Control, PLC’s and SCADA for Plant Operators and other Non-Instrument Personnel. This course gives a brief introduction to the measurement and instruments used for measurements. Different types of measurements exist and depending on the type of measurement the instruments used will vary. This will be discussed in detail.

Automation of the control system is gaining prominence due to the ease of controlling facilities it provides. Some of such control systems are PLC, SCADA, DCS and others. These control systems and their components are given in this course. The practical exercises are given which makes the candidate understand the systems in a better way.

Anybody with an interest in gaining know-how in the full range of instrumentation, process control, PLCs, SCADA and P&ID documentation. This can range from operators, trades personnel, procurement staff, sales staff, technicians and engineers from other backgrounds/disciplines, such as mechanical, electrical and civil. Even the plant secretary who is keen to have a good understanding of the key concepts would benefit. Managers who are keen to understand the key workings and the future of their plants would also benefit from this course.

The course mainly covers the following topics:

- Process measurement

- Pressure measurement

- Level measurement

- Temperature measurement

- Flow measurement

- Fundamentals of process loop tuning

- Introduction to control valves

- Different types of control valves

- Fundamentals of PLCs

- Fundamentals of PLC hardware

- Fundamentals of PLC software

- Introduction to SCADA systems

- SCADA systems hardware

- SCADA systems software

- Basics of data communications between PLC and SCADA systems

- Drawing types and standards

In this chapter we will discuss the basic measuring concepts and terminology used. The measurement and control of the overall control system is highlighted.

Learning objectives

- Basic measurement concepts

- Definition of terminology

- Measuring instruments and control valves as part of the overall control system

2.1 Basic measurement concepts

The basic set of units used on this course is the International standard of unit system (SI unit system). This can be summarised in Table 2.1.

| Quantity | Unit | Abbreviation |

|---|---|---|

| Length | Meter | m |

| Mass | Kilogram | kg |

| Time | Second | s |

| Current | Ampere | A |

| Temperature | Degrees kelvin | °K |

| Voltage | Volt | V |

| Resistance | Ohm | Ω |

| Capacitance | Farad | F |

| Inductance | Henry | H |

| Energy | Joules | J |

| Power | Watt | W |

| Frequency | Hertz | Hz |

| Charge | Coulomb | C |

| Force | Newton | N |

| Magnetic flux | Weber | Wb |

| Magnetic field | Webers/metre2 | Wb/m2 |

| Density | Kilogram/metre3 | kg/m3 |

There are a number of criteria that must be satisfied when specifying process measurement equipment. Below is a list of the more important specifications.

2.1.1 Accuracy

The accuracy specified by a device is the amount of error that may occur when measurements are taken. It determines how precise or correct the measurements are to the actual value and is used to determine the suitability of the measuring equipment. Accuracy can be expressed as any of the following:

- Error in units of the measured value

- Percent of span

- Percent of upper range value

- Percent of scale length

- Percent of actual output value

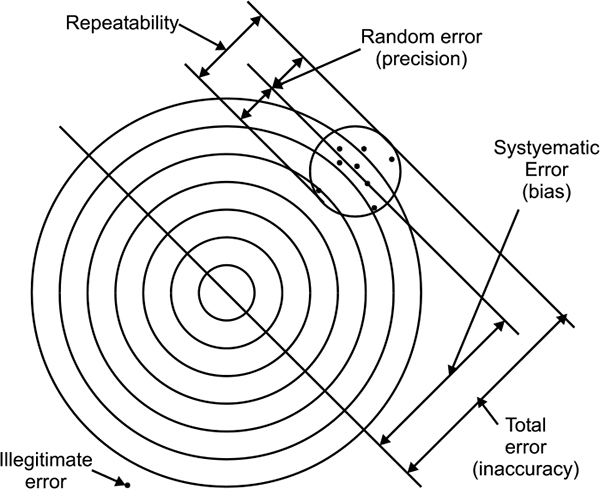

Accuracy generally contains the total error in the measurement and accounts for linearity, hysteresis and repeatability. Figure 2.1 shows errors in measurement.

Reference accuracy is determined at reference conditions, i.e. constant ambient temperature, static pressure, and supply voltage. There is also no allowance for drift over time.

Accuracy terminology

2.1.2 Range of operation

The range of operation defines the high and low operating limits between which the device will operate correctly, and at which the other specifications are guaranteed. Operation outside of this range can result in excessive errors, equipment malfunction and even permanent damage or failure.

2.1.3 Budget/Cost

Although not so much a specification, the cost of the equipment is certainly a selection consideration. This is generally dictated by the budget allocated for the application. Even if all the other specifications are met, this can prove an inhibiting factor.

2.1.4 Hysteresis

Hysteresis is the difference in the output for given input when the input is increasing and output for same input when input is decreasing. In other words, it is the difference in the way device works when moving from 0% to 100%, compared to the way the device works when moving from 100% to 0%. When input of any instrument is slowly varied from zero to full scale and then back to zero, its output varies. One example is shown in Figure 2.2. This is where the accuracy of the device is dependent on the previous value and the direction of variation. Hysteresis causes a device to show an inaccuracy from the correct value, as it is affected by the previous measurement.

Hysteresis

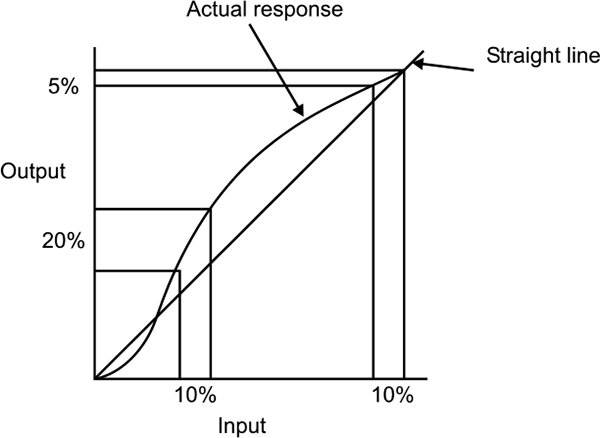

2.1.5 Linearity

Linearity expresses the deviation of the actual reading from a straight line. If all outputs are in the same proportion to corresponding inputs over a span of values, then input-output plot is straight line else it will be non linear as shown in Figure 2.3. For continuous control applications, the problems arise due to the changes in the rate the output differs from the instrument. The gain of a non-linear device changes as the change in output over input varies. In a closed loop system, changes in gain affect the loop dynamics. In such an application, the linearity needs to be assessed. If a problem does exist, then the signal needs to be linearised.

Linearity

2.1.6 Repeatability

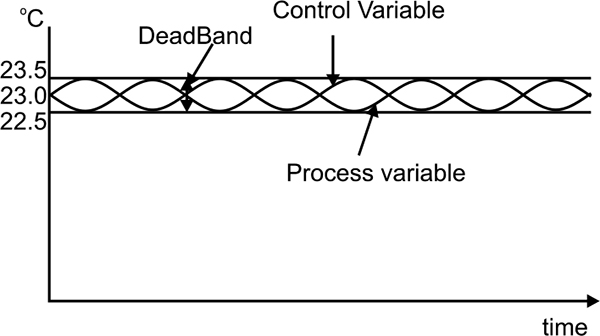

Repeatability defines how close a second measurement is to the first under the same operating conditions, and for the same input. Repeatability is generally within the accuracy range of a device and is different from hysteresis in that the operating direction and conditions must be the same.

Continuous control applications can be affected by variations due to repeatability. When a control system sees a change in the parameter it is controlling, it will adjust its output accordingly. However if the change is due to the repeatability of the measuring device, then the controller will over-control. This problem can be overcome by using the deadband in the controller as shown in Figure 1.4; however repeatability becomes a problem when an accuracy of say, 0.1% is required, and a repeatability of 0.5% is present.

Repeatability

Ripples or small oscillations can occur due to over controlling. This needs to be accounted for in the initial specification of allowable values.

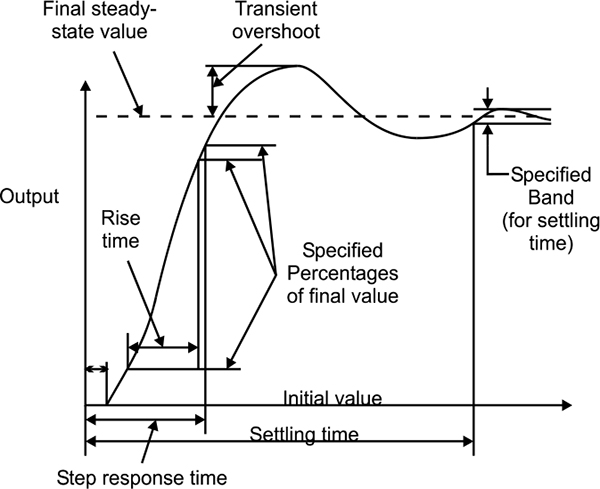

2.1.7 Response

When the output of a device is expressed as a function of time (due to an applied input) the time taken to respond can provide critical information about the suitability of the device. A slow responding device may not be suitable for an application. This typically applies to continuous control applications where the response of the device becomes a dynamic response characteristic of the overall control loop. However in critical alarming applications where devices are used for point measurement, the response may be just as important. Figure 1.5 shows response of the system to a step input.

Typical time response for a system with a step input

2.2 Definition of terminology

Accuracy: How precise or correct the measured value is to the actual value. Accuracy is an indication of error in the measurement.

Ambient: The surrounds or environment in reference to a particular point or object.

Attenuation: A decrease in signal magnitude over a period of time.

Calibrate: To configure a device so that the required output represents (to a defined degree of accuracy) the respective input.

Closed loop: Relates to a control loop where the process variable is used to calculate the controller output.

Coefficient, temperature: A coefficient is typically a multiplying factor. The temperature coefficient defines how much change in temperature there is for a given change in resistance (for a temperature dependent resistor).

Cold junction: The thermocouple junction which is at a known reference temperature.

Compensation: A supplementary device used to correct errors due to variations in operating conditions.

Controller: A device which operates automatically to regulate the control of a process with a control variable.

Elastic: The ability of an object to regain its original shape when an applied force is removed. When a force is applied that exceeds the elastic limit, then permanent deformation will occur.

Excitation: The energy supply required to power a device for its intended operation.

Gain: This is the ratio of the change of the output to the change in the applied input. Gain is a special case of sensitivity, where the units for the input and output are identical and the gain is unit less.

Hunting: Generally an undesirable oscillation at or near the required set point. Hunting typically occurs when the demands on the system performance are high and possibly exceed the system capabilities. The output of the controller can be over controlled due to the resolution of accuracy limitations.

Hysteresis: The accuracy of the device is dependent on the previous value and the direction of variation. Hysteresis causes a device to show an inaccuracy from the correct value, as it is affected by the previous measurement.

Ramp: Defines the delayed and accumulated response of the output for a sudden change in the input.

Range: The region between the specified upper and lower limits where a value or device is defined and operated.

Reliability: The probability that a device will perform within its specifications for the number of operations or time period specified.

Repeatability: The closeness of repeated samples under exact operating conditions.

Reproducibility: The similarity of one measurement to another over time, where the operating conditions have varied within the time span, but the input is restored.

Resolution: The smallest interval that can be identified as a measurement varies.

Resonance: The frequency of oscillation that is maintained due to the natural dynamics of the system.

Response: Defines the behaviour over time of the output as a function of the input. The output is the response or effect, with the input usually noted as the cause.

Self Heating: The internal heating caused within a device due to the electrical excitation. Self heating is primarily due to the current draw and not the voltage applied, and is typically shown by the voltage drop as a result of power (I2R) losses.

Sensitivity: This defines how much the output changes, for a specified change in the input to the device.

Set-point: Used in closed loop control, the set point is the ideal process variable. It is represented in the units of the process variable and is used by the controller to determine the output to the process.

Span Adjustment: The difference between the maximum and minimum range values. When provided in an instrument, this changes the slope of the input-output curve.

Steady state: Used in closed loop control where the process no longer oscillates or changes and settles at some defined value.

Stiction: Shortened form of static friction, and defined as resistance to motion. More important is the force required (electrical or mechanical) to overcome such a resistance.

Stiffness: This is a measure of the force required to cause a deflection of an elastic object.

Thermal shock: An abrupt temperature change applied to an object or device.

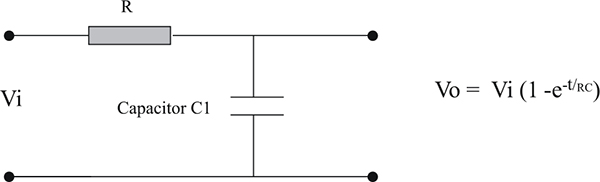

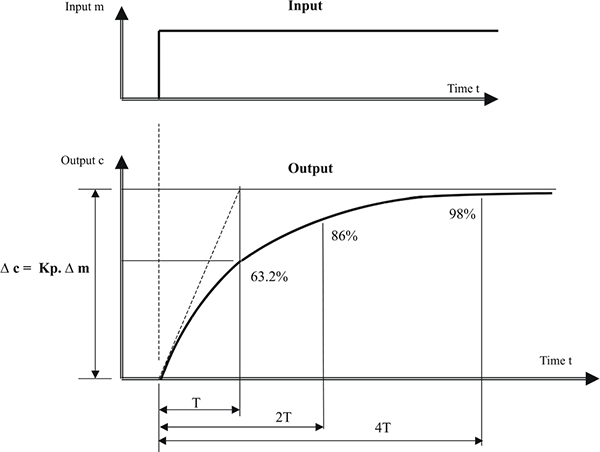

Time constant: Typically a unit of measure which defines the response of a device or system. The time constant of a first order system is defined as the time taken for the output to reach 63.2% of the total change, when subjected to a step input change.

Transducer: An element or device that converts information from one form (usually physical, such as temperature or pressure) and to another (usually electrical, such as volts or milli volts or resistance change). A transducer can be considered to comprise a sensor at the front end (at the process) and a transmitter.

Transient: A sudden change in a variable which is neither a controlled response nor long lasting.

Transmitter: A device that converts from one form of energy to another. Usually from electrical to electrical for the purpose of signal integrity for transmission over longer distances and for suitability with control equipment.

Variable: Generally, this is some quantity of the system or process. The two main types of variables that exist in the system are the measured variable and the controlled variable. The measured variable is the measured quantity and is also referred to as the process variable as it measures process information. The controlled variable is the controller output which controls the process.

Vibration: This is the periodic motion (mechanical) or oscillation of an object.

Zero adjustment: The zero in an instrument is the output provided when no or zero input is applied. The zero adjustment produces a parallel shift in the input-output curve.

2.3 Measuring instruments and control valves as part of the overall control system

Figure 2.6 shows how instrument and control valves fit into the overall control system structure. The topic of controllers and tuning forms part of a separate workshop.

Instruments and control valves in the overall control system

2.3.1 Typical applications

Some typical applications are listed below:

HVAC (Heating, ventilation and air conditioning) applications

- Heat transfer

- Billing

- Axial fans

- Climate control

- Hot and chilled water flows

- Forced air

- Fumehoods

- System balancing

- Pump operation and efficiency

Petrochemical applications

- Co-generation

- Light oils

- Petroleum products

- Steam

- Hydrocarbon vapours

- Flare lines, stacks

Natural gas

- Gas leak detection

- Compressor efficiency

- Fuel gas systems

- Bi-directional flows

- Mainline measurement

- Distribution lines measurement

- Jacket water systems

- Station yard piping

Power industry

- Feed water

- Circulating water

- High pressure heaters

- Fuel oil

- Stacks

- Auxiliary steam lines

- Cooling tower measurement

- Low pressure heaters

- Reheat lines

- Combustion air

Emissions monitoring

- Chemical incinerators

- Trash incinerators

- Refineries

- Stacks and rectangular ducts

- Flare lines

In this chapter we will discuss the principles of pressure measurement and pressure transducers. Both mechanical and electrical elements are highlighted.

Learning objectives

- Principle of pressure measurement

- Pressure transducers and elements

3.1 Principle of pressure measurement

3.1.1 Bar and Pascal

Pressure is defined as a force per unit area, and can be measured in units such as psi (pounds per square inch), inches of water, and milli-meters of mercury, Pascal (Pa, or N/m�) or bar. Until the introduction of SI units, the ‘bar’ was quite common.

The bar is equivalent to 100,000 N/m�, which were the SI units for measurement. To simplify the units, the N/m� was adopted with the name of Pascal, abbreviated to Pa. Pressure is quite commonly measured in kilopascals (kPa), which is 1000 Pascal and equivalent to 0.145psi.

3.1.2 Absolute, gauge and differential pressure

The Pascal is a means of measuring a quantity of pressure. When the pressure is measured in reference to an absolute vacuum (no atmospheric conditions), then the result will be in Pascal (Absolute). However when the pressure is measured relative to the atmospheric pressure, then the result will be termed Pascal (Gauge). If the gauge is used to measure the difference between two pressures, it then becomes Pascal (Differential).

Note 1: It is common practice to show gauge pressure without specifying the type, and to specify absolute or differential by stating ‘absolute’ or ‘differential’ for those pressures.

Note 2: Older measurement equipment may be in terms of psi (pounds per square inch) and as such represent gauge and absolute pressure as psig and psia respectively. Note that the ‘g’ and ‘a’ are not recognized in the SI unit symbols, and as such are no longer encouraged.

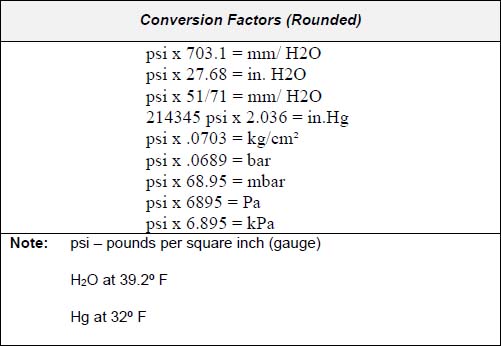

To determine differential in inches of mercury vacuum multiply psi by 2.036 (or approximately 2). Another common conversion is 1 Bar = 14.7 psi. Table 3.1 shows different conversion factors.

Conversion Factor

3.2 Pressure transducers and elements

In this section we have discussed about pressure transducers and elements in mechanical and electrical.

3.2.1 Pressure transducers and elements – mechanical

Mechanical pressure transducers and elements are as follows

- Bourdon tube

- Helix and spiral tubes

- Spring and bellows

- Diaphragm

- Manometer

- Single and Double inverted bell

Bourdon Tube

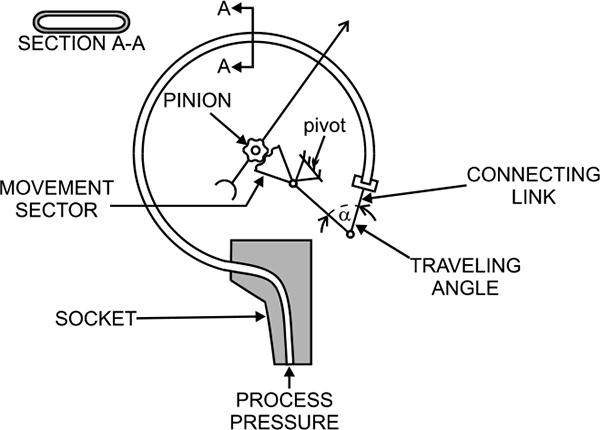

The Bourdon tube works on a simple principle that a bent tube will change its shape when exposed to variations of internal and external pressure. As pressure is applied internally, the tube straightens and returns to its original form when the pressure is released.

The tip of the tube moves with the internal pressure change and is easily converted with a pointer onto a scale. A connector link is used to transfer the tip movement to the geared movement sector. The pointer is rotated through a toothed pinion by the geared sector.

This type of gauge may require vertical mounting (orientation dependent) for correct results. The element is subject to shock and vibration, which is also due to the mass of the tube. Because of this and the amount of movement with this type of sensing, they are prone to breakage, particularly at the base of the tube.

The main advantage with the Bourdon tube is that it has a wide operating range (depending on the tube material). This type of pressure measurement can be used for positive or negative pressure ranges, although the accuracy is impaired when in a vacuum.

Selection and Sizing: The type of duty is one of the main selection criteria when choosing Bourdon tubes for pressure measurement. For applications, which have rapid cycling of the process pressure, such in ON/OFF controlled systems, then the measuring transducer requires an internal snubber. They are also prone to failure in these applications.

Liquid filled devices are one way to reduce the wear and tear on the tube element.

Advantages

- Inexpensive

- Wide operating range

- Fast response

- Good sensitivity

- Direct pressure measurement

Disadvantages

- Primarily intended for indication only

- Non-linear transducer, linearised by gear mechanism

- Hysteresis on cycling

- Sensitive to temperature variations

- Limited life when subject to shock and vibration

Application Limitations: These devices should be used in air if calibrated for air, and in liquid if calibrated for liquid. Special care is required for liquid applications in bleeding air from the liquid lines.

Figure 3.1 shows pressure measurement using C type Bourdon Tube. This type of pressure measurement is limited in applications where there is input shock (a sudden surge of pressure), and in fast moving processes.

C-Bourdon pressure element

If the application is for the use of oxygen, then the device cannot be calibrated using oil. Lower ranges are usually calibrated in air. Higher ranges, usually 1000kPa, are calibrated with a dead weight tester (hydraulic oil).

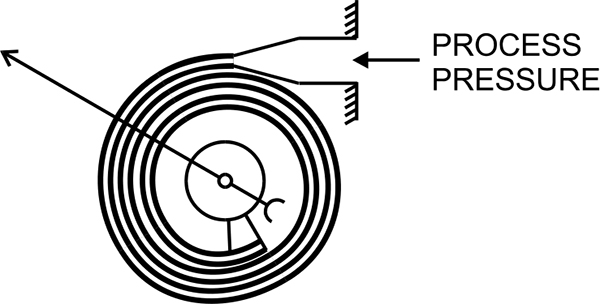

Helix and spiral tubes

Helix and spiral tubes are fabricated from tubing into shapes as per their naming. With one end sealed, the pressure exerted on the tube causes the tube to straighten out. The amount of straightening or uncoiling is determined by the pressure applied.

These two approaches use the Bourdon principle. The uncoiling part of the tube is mechanically linked to a pointer which indicates the applied pressure on a scale. This has the added advantage over the Bourdon tube, as there are no movement losses due to links and levers.

The Spiral tube is suitable for pressure ranges up to 28,000 kPa and the helical tube for ranges up to 500,000 kPa. The pressure sensing elements vary depending on the range of operating pressure and type of process involved.

The choice of spiral or helical elements is based on the pressure ranges. The pressure level between spiral and helical tubes varies depending on the manufacturer. Low pressure elements have only two or three coils to sense the span of pressures required, however high pressure sensing may require up to 20 coils. Figure 3.2 shows pressure measurement using spiral bourdon element.

Spiral bourdon element

One difference and advantage of these is the dampening they have with fluids under pressure.

The advantages and disadvantages of this type of measurement are similar to the Bourdon tube with the following differences

Advantages

- Increased accuracy and sensitivity

- Higher over range protection

Disadvantages

- Very expensive

Application Limitations: Process pressure changes cause problems with the increase in the coil size.

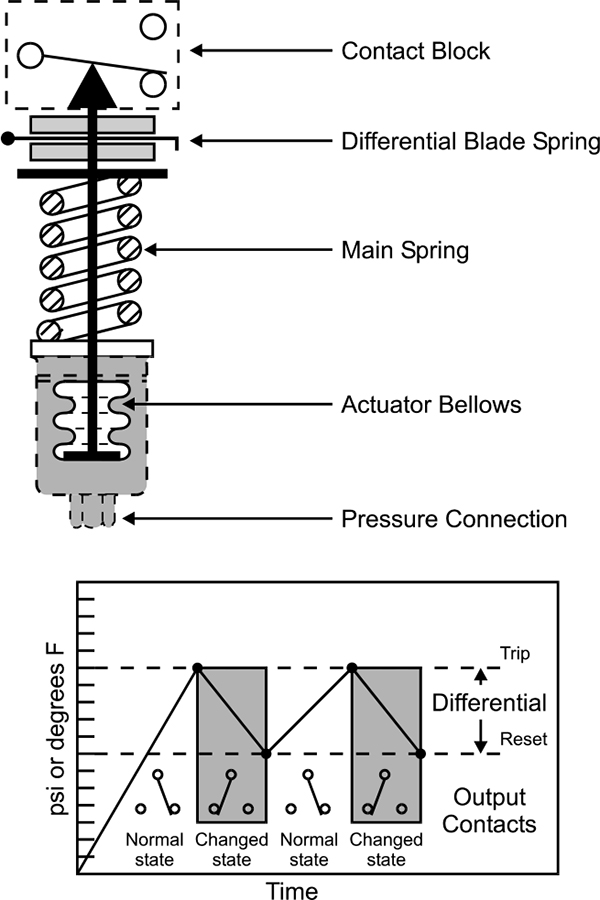

Spring and bellows

A bellows is an expandable element and is made up of a series of folds, which allow expansion. One end of the Bellows is fixed and the other moves in response to the applied pressure. A spring is used to oppose the applied force and a linkage connects the end of the bellows to a pointer for indication. Bellows type sensors are also available which have the sensing pressure on the outside and the atmospheric conditions within.

The spring is added to the bellows for more accurate measurement. The elastic action of the bellows by themselves is insufficient to precisely measure the force of the applied pressure.

This type of pressure measurement is primarily used for ON/OFF control providing clean contacts for opening and closing electrical circuits. This form of sensing responds to changes in pneumatic or hydraulic pressure. Figure 3.3 shows pressure measurement using bellows.

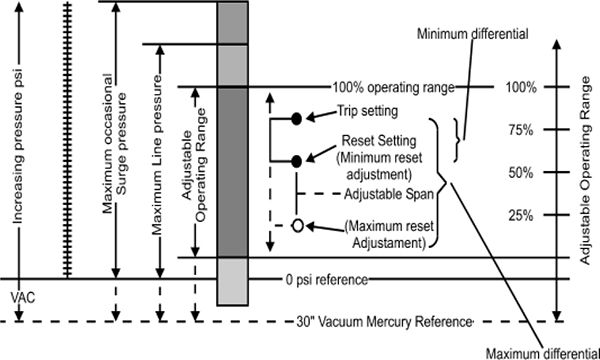

Typical Application: The process pressure is connected to the sensor and is applied directly into the bellows. As the pressure increases, the bellows exert force on the main spring. When the threshold force of the main spring is overcome, the motion is transferred to the contact block causing the contacts to actuate. This is the Trip setting.

When the pressure decreases, the main spring will retract which causes the secondary differential blade spring to activate and reset the contacts. This is the Reset setting.

The force on the main spring is varied by turning the operating range adjustment screw. This determines where the contacts will trip.

The force on the secondary differential blade spring is varied by turning the differential adjustment screw. This determines where the contacts will reset. Figure 3.4 shows working of bellows graphically.

Basic mechanical structure

Graphical illustration of technical terms

Copper alloy bellows may be used on water or air. Other liquids and gases may be used if non-corrosive to this alloy. Use type 316 stainless steel for more corrosive liquids or gases.

Diaphragm

Many pressure sensors depend on the deflection of a diaphragm for measurement. The diaphragm is a flexible disc, which can be either flat or with concentric corrugations and is made from sheet metal with high tolerance dimensions.

The diaphragm can be used as a means of isolating the process fluids, or for high-pressure applications. It is also useful in providing pressure measurement with electrical transducers.

Diaphragms are well developed and proven. Modern designs have negligible hysteresis, friction and calibration problems when used with smart instrumentation. They are used extensively on air conditioning plants and for ON/OFF switching applications.

Selection: The selection of diaphragm materials is important, and is very much dependent on the application. Beryllium copper has good elastic qualities, where Ni-Span C has a very low temperature coefficient of elasticity.

Stainless steel and Inconel are used in extreme temperature applications, and are also suited for corrosive environments. For minimum hysteresis and drift, then Quartz is the best choice. There are two main types of construction and operation of diaphragm sensors. They are

- Motion Balanced

- Force Balanced

Motion balanced designs are used to control local, direct reading indicators. They are however more prone to hysteresis and friction errors.

Force balanced designs are used as transmitters for relaying information with a high accuracy; however they do not have direct indication capability.

Advantages

- Provide isolation from process fluid

- Good for low pressure

- Inexpensive

- Wide range

- Reliable and proven

- Used to measure gauge, atmospheric and differential pressure

Manometer

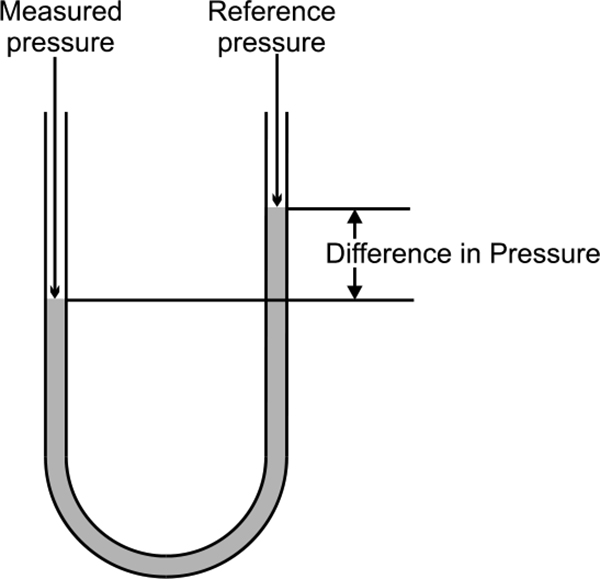

The simplest form of a manometer is that of a U-shaped tube filled with liquid. The reference pressure and the pressure to be measured are applied to the open ends of the tube. If there is a difference in pressure, then the heights of the liquid on the two sides of the tube will be different.

This difference in the heights is the process pressure in mm of water (or mm of mercury). The conversion into kPa is quite simple

For water, Pa = mm H2O x 9.807

For mercury, Pa = mm Hg x 133.3

Typical Applications: This type of pressure measurement is mainly used for spot checks or for calibration. They are used for low range measurements, as higher measurements require mercury. Mercury is toxic and is therefore considered mildly hazardous. Figure 3.5 shows simplest form of manometer for pressure measurement.

Simplest form of manometer

Advantages

- Simple operation and construction

- Inexpensive

Disadvantages

- Low-pressure range (water)

- Higher-pressure range requires mercury

- Readings are localised

Application Limitations: Manometers are limited to a low range of operation due to size restrictions. They are also difficult to integrate into a continuous control system.

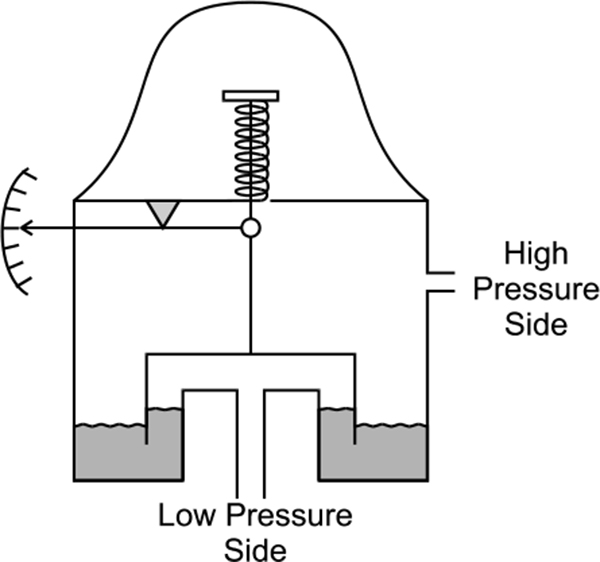

Single and double inverted bell

The Bell instrument measures the pressure difference in a compartment on each side of a bell-shaped chamber. If the pressure to be measured is referenced to the surrounding conditions, then the lower compartment is vented to the atmosphere and gauge pressure is measured. If the lower compartment is evacuated to form a vacuum, then the pressure measured will be in absolute units. However, to measure differential pressure, the higher pressure is connected to the top of the chamber and the lower pressure to the bottom. Figure 3.6 shows bell instrument for pressure measurement.

Inverted bell d/p detector

The bell instrument is used in applications where very low pressures are required to be measured, typically in the order of 0-250 Pa.

3.2.2 Pressure transducers and elements – electrical

The typical range of transducers here are:

- Strain gauge

- Vibrating wire

- Piezoelectric

- Capacitance

- Linear Variable Differential Transformer

- Optical

Strain gauge

Strain gauge sensing uses a metal wire or semiconductor chip to measure changes in pressure. A change in pressure causes a change in resistance as the metal is deformed. This deformation is not permanent, as the pressure (applied force) does not exceed the elastic limit of the metal. If the elastic limit is exceeded than permanent deformation will occur.

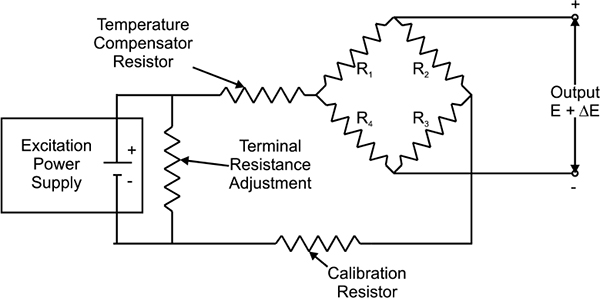

This is commonly used in a Wheatstone bridge arrangement where the change in pressure is detected as a change in the measured voltage.

Strain gauges in their infancy were metal wires supported by a frame. Advances in the technology of bonding materials mean that the wire can be adhered directly to the strained surface. Since the measurement of strain involves the deformation of metal, the strain material need not be limited to being a wire. As such, further developments also involve metal foil gauges. Bonded strain gauges are the more commonly used type. Figure 3.7 shows simple Wheatstone circuit for strain gauge.

As strain gauges are temperature sensitive, temperature compensation is required. One of the most common forms of temperature compensation is to use a wheatstone bridge. Apart from the sensing gauge, a dummy gauge is used which is not subjected to the forces but is also affected by temperature variations. In the bridge arrangement the dummy gauge cancels with the sensing gauge and eliminates temperature variations in the measurement.

Wheatstone circuit for strain gauges32

Strain gauges are mainly used due to their small size and fast response to load changes.

Typical Application: Pressure is applied to an isolating diaphragm, where the force is transmitted to the poly-silicon sensor by means of a silicone fill fluid. The reference side of the sensor is exposed to atmospheric pressure for gauge pressure transmitters. A sealed vacuum reference is used for absolute pressure transmitters.

When the process pressure is applied to the sensor, this creates a small deflection of the sensing diaphragm, which applies strain to the Wheatstone bridge circuit within the sensor. The change in resistance is sensed and converted to a digital signal for processing by the microprocessor.

Selection and Sizing: There exists a very wide selection of strain gauge transducers, in range, accuracy and the associated cost.

Advantages

- Wide range, 7.5kPa to 1400 MPa

- Inaccuracy of 0.1%

- Small in size

- Stable devices with fast response

- Most have no moving parts

- Good over-range capability

Disadvantages

- Unstable due to bonding material

- Temperature sensitive

- Thermo elastic strain causes hysteresis

Application Limitations: All strain gauge applications require regulated power supplies for the excitation voltage, although this is commonly internal with the sensing circuits.

Vibrating wire

This type of sensor consists of an electronic oscillator circuit, which causes a wire to vibrate at its natural frequency when under tension. The principle is similar to that of a guitar string. The vibrating wire is located in a diaphragm. As the pressure changes on the diaphragm so does the tension on the wire, which affects the frequency that the wire vibrates or resonates at. These frequency changes are a direct consequence of pressure changes and as such are detected and shown as pressure.

The frequency can be sensed as digital pulses from an electromagnetic pickup or sensing coil. An electronic transmitter would then convert this into an electrical signal suitable for transmission.

This type of pressure measurement can be used for differential, absolute or gauge installations. Absolute pressure measurement is achieved by evacuating the low-pressure diaphragm. A typical vacuum pressure for such a case would be about 0.5 Pa.

Advantages

- Good accuracy and repeatability

- Stable

- Low hysteresis

- High resolution

- Absolute, gauge or differential measurement

Disadvantages

- Temperature sensitive

- Affected by shock and vibration

- Non-linear

- Physically large

Application Limitations: Temperature variations require temperature compensation within the sensor this problem limits the sensitivity of the device. The output generated is non-linear which can cause continuous control problems.

This technology is seldom used any more. Being older technology it is typically found with analogue control circuitry.

Piezoelectric

When pressure is applied to crystals, they are elastically deformed. Piezoelectric pressure sensing involves the measurement of such deformation. When a crystal is deformed, an electric charge is generated for only a few seconds. The electrical signal is proportional to the applied force.

Because these sensors can only measure for a short period, they are not suitable for static pressure measurement. More suitable measurements are made of dynamic pressures caused from:

- Shock

- Vibration

- Explosions

- Pulsations

- Engines

- Compressors

This type of pressure sensing does not measure static pressure, and as such requires some means of identifying the pressure measured. As it measures dynamic pressure, the measurement needs to be referenced to the initial conditions before the impact of the pressure disturbance. The pressure can be expressed in relative pressure units, Pascal relative.

Quartz is commonly used as the sensing crystal as it is inexpensive, stable and insensitive to temperature variations. Tourmaline is an alternative, which gives faster response speeds, typically in the order of microseconds.

Advantages

- Accuracy 0.075%

- Very high pressure measurement, up to 70MPa

- Small size

- Robust

- Fast response, < 1 nanosecond

- Self-generated signal

Disadvantages

- Dynamic sensing only

- Temperature sensitive

Application Limitations: Require special cabling and signal conditioning.

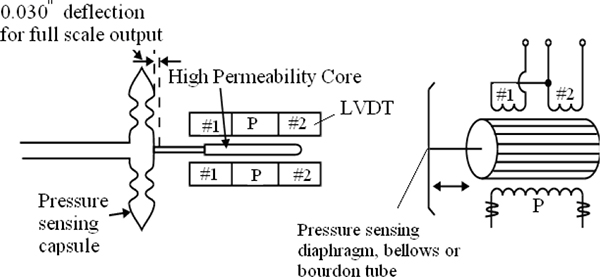

Capacitance

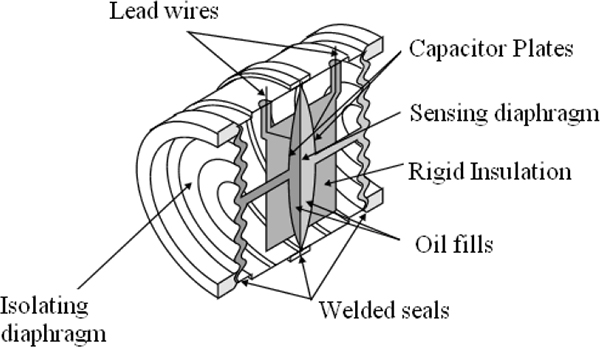

Capacitive pressure measurement involves sensing the change in capacitance that results from the movement of a diaphragm. The sensor is energised electrically with a high frequency oscillator. Figure 3.8 shows cross section of Rosemount sensor. When the diaphragm is deflected due to pressure changes, the relative capacitance is measured by a bridge circuit. Figure 3.9 shows capacitance pressure detector.

Two designs are quite common. The first is the two-plate design and is configured to operate in the balanced or unbalanced mode. The other is a single capacitor design.

The balanced mode is where the reference capacitor is varied to give zero voltage on the output. The unbalanced mode requires measuring the ratio of output to excitation voltage to determine pressure. This type of pressure measurement is quite accurate and has a wide operating range. Capacitive pressure measurement is also quite common for determining the level in a tank or vessel.

Cross section of the Rosemount S-Cell™ Sensor (Courtesy of Rosemount)

Capacitance pressure detector

Advantages

- Inaccuracy 0.01 to 0.2%

- Range of 80Pa to 35MPa

- Linearity

- Fast response

Disadvantages

- Temperature sensitive

- Stray capacitance problems

- Vibration

- Limited overpressure capability

- Cost

Application Limitations: Many of the disadvantages above have been addressed and their problems reduced in newer designs. Temperature controlled sensors are available for applications requiring a high accuracy.

With strain gauges being the most popular form of pressure measurement, capacitance sensors are the next most common solution.

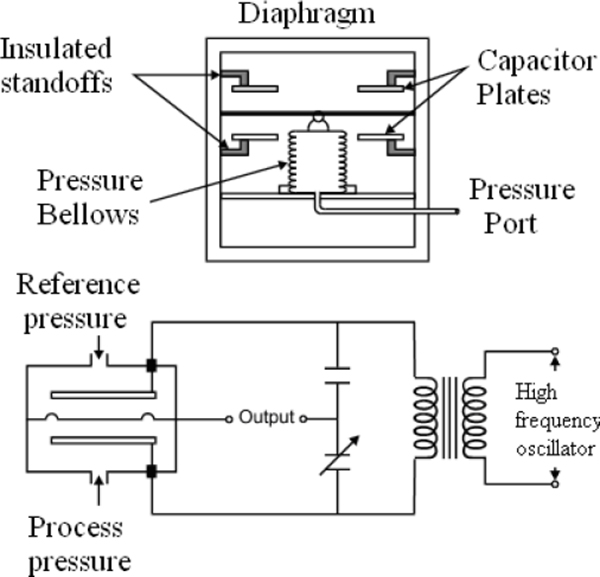

Linear Variable Differential Transformers (LVDT)

This type of pressure measurement relies on the movement of a high permeability core within transformer coils. The movement is transferred from the process medium to the core by use of a diaphragm, bellows or bourdon tube.

The LVDT operates on the inductance ratio between the coils. Three coils are wound onto the same insulating tube containing the high permeability iron core. The primary coil is located between the two secondary coils and is energised with an alternating current.

Equal voltages are induced in the secondary coils if the core is in the centre. The voltages are induced by the magnetic flux. When the core is moved from the centre position, the result of the voltages in the secondary windings will be different. The secondary coils are usually wired in series. Figure 3.10 shows LVDT for measurement of pressure.

LVDTs are sensitive to vibration and are subject to mechanical wear.

Linear variable differential transformer

Disadvantages

- Mechanical wear

- Vibration

Optical

Optical sensors can be used to measure the movement of a diaphragm due to pressure. An opaque vane is mounted to the diaphragm and moves in front of an infrared light beam. As the light is disturbed, the received light on the measuring diode indicates the position of the diaphragm.

A reference diode is used to compensate for the ageing of the light source. Also, by using a reference diode, the temperature effects are nullified as they affect the sensing and reference diodes in the same way.

Advantages

- Temperature corrected

- Good repeatability

- Negligible hysteresis

Disadvantages

- Expensive

Learning objectives

- Principles of level measurement

- Simple sight glasses

- Hydrostatic pressure

- Ultrasonic measurement

- Electrical measurement

- Density measurement

4.1 Principle of level measurement

4.1.1 Continuous measurement

The units of level are metres (m). However, there are numerous ways to measure level that require different technologies and various units of measurement.

Such means may be

- Ultrasonic, transit time

- Pulse echo

- Pulse radar

- Pressure, hydrostatic

- Weight, strain gauge

- Conductivity

- Capacitive

For continuous measurement, the level is detected and converted into a signal that is proportional to the level. Microprocessor based devices can indicate level or volume.

Different techniques also have different requirements. For example, when detecting the level from the top of a tank, the shape of the tank is required to deduce volume.

When using hydrostatic means, which detects the pressure from the bottom of the tank, the density must be known and remain constant.

4.1.2 Point detection

Point detection can also be provided for all liquids and solids. Some of the more common types are:

- Capacitive

- Microwave

- Radioactive

- Vibration

- Conductive

A level measuring system often consists of the sensor and a separate signal conditioning instrument. This combination is often chosen when multiple outputs (continuous and switched) are required and parameters may need to be altered.

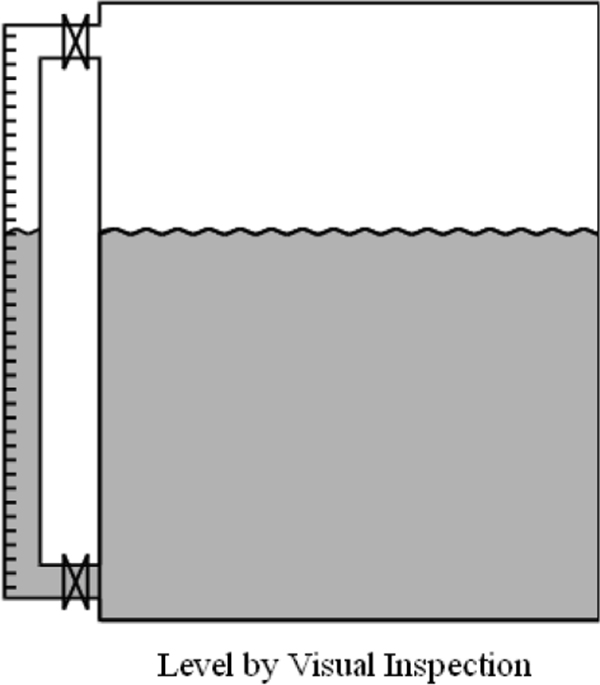

4.2 Simple sight glasses

A visual indication of the level can be obtained when part of the vessel is constructed from transparent material or the liquid in a vessel is bypassed through a transparent tube. The advantage of using stop valves with the use of a bypass pipe is the ease in removal for cleaning. Figure 4.1 shows level measurement using visual inspection.

Level by Visual Inspection

Advantages

- Very simple

- Inexpensive

Disadvantages

- Not suitable for automated control

- Maintenance – requires cleaning

- Fragile – easily damaged

Applications/Limitations

These are not highly suited for industrial applications as manual viewing and transfer of information is required by the operator.

Applications of such level measuring devices can be seen in tanks for the storage of lubricating oils or water. They provide a very simple means of accessing level information and can simplify the task of physically viewing or dipping a tank. They are, however, generally limited to operator inspection.

Sight glasses are also not suitable for dark or dirty liquids. This type should not be used when measuring hazardous liquids as the glass-tube is easily damaged or broken. In installations where the gauge is at a lower temperature than the process condensation can occur outside the gauge, impairing the accuracy of the reading.

4.3 Hydro pressure

Some of the different types of level measurement with pressure are:

- Static pressure

- Differential pressure

- Bubble tube method

- Diaphragm Box

- Weighing

4.3.1 Static pressure

The basis of hydrostatic pressure measurement for level is such that the measured pressure is proportional to the height of liquid in the tank, irrespective of volume. The pressure is related to the height by the following:

Where: P = pressure

h = height

ρ = relative density of fluid

g = acceleration due to gravity

For constant density, the only variable that changes is the height. In fact, any instrument that can measure pressure can be calibrated to read height of a given liquid, and can be used to measure liquid level in vessels under atmospheric conditions.

Most pressure sensors compensate for atmospheric conditions, so the pressure on the surface of liquids open to the atmosphere will be zero. The measuring units are generally in Pascal, but note that 10 Pa is approximately equivalent to 1 mm head of water.

Easy to remember ? 100 kPa = 10 m head of water = 1 bar = 1 atm

Actual numbers ? 101.325 kPa = 10.333 m head of water = 1.01325 bar = 1 atm

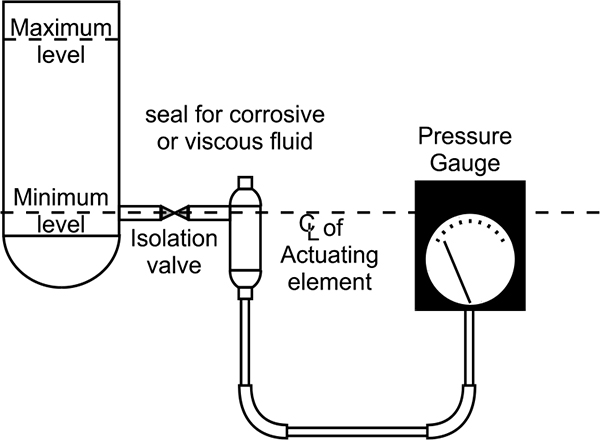

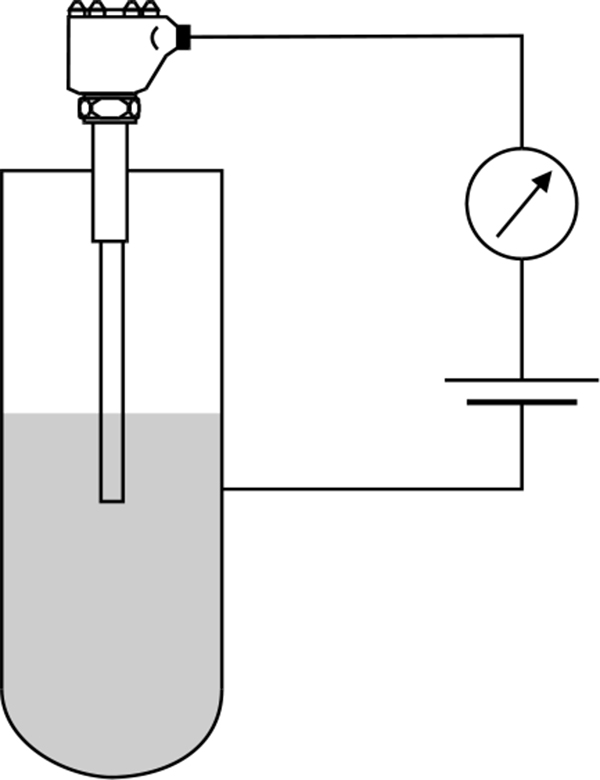

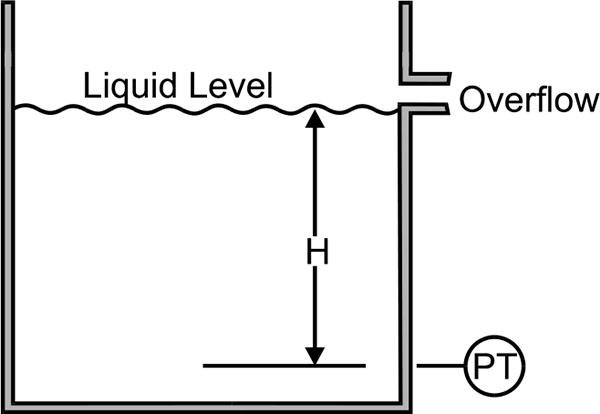

Hydrostatic pressure transducers always consist of a membrane, which is connected either mechanically or hydraulically to a transducer element. The transducer element can be based on such technologies as inductance, capacitance, strain gauge or even semiconductor. Figure 4.2 shows level measurement using pressure gauge.

A pressure gauge used to measure the height of a liquid in an open tank

The pressure transducer can be mounted in many types of pressure sensor so that the application can be quite specific to the requirement of the process conditions. Since the movement of the membrane is only a few microns, the semiconductor transducer is extremely insensitive to dirt or product build-up. This makes this type of measurement useful for such applications as sewage water, sludge, paint and oils. A seal is required for corrosive or viscous liquids or in the case where a pipe is used to hydraulically transmit the pressure to a gauge.

Since there is no application of movement, there is no relaxing force to cause hysteresis.

A pressure sensor is exposed to the pressure of the system, and therefore needs to be mounted at or near the bottom of the vessel. In situations where it is not possible to mount the sensor directly in the side of the vessel at the appropriate depth, it can be mounted from the top of the vessel and lowered into the fluid on the end of a rod or cable. This method is commonly used for applications in reservoirs and deep wells.

If the use of extension nozzles or long pipes is unavoidable, precautions are required to ensure the fluid will not harden or congeal in the pipe. If this occurs, then the pressure detected will no longer be accurate. Different mounting systems or pipe heaters could be used to prevent this.

Static pressure is measured in this type of measurement. The sensor therefore, should not be mounted directly in the product stream as the pressure measured will be too high and the level reading inaccurate. For similar reasons, a pressure sensor should not be mounted in the discharge outlet of a vessel, as the pressure measurement will be incorrectly low during discharge.

Advantages

- Level or volume measurement

- Simple to assemble and install

- Simple to adjust

- Reasonably accurate

Disadvantages

- Dependent on relative density of material

- More expensive than simpler types

- Expensive for high accuracy applications

Application Limitations

Level measurement can be made using the hydrostatic principle in open tanks, when the density of the material is constant. The sensor needs to be mounted in an open tank to ensure that the liquid, even at the minimum level always covers the process diaphragm.

Since the sensor is measuring pressure, it is therefore sensitive to sludge and dirt on the bottom of the tank. Build-up can often occur around or in the flange where the sensor is mounted. Bore water can also cause calcium build-up.

It is also critical that the pressure measurement is referenced to atmospheric conditions.

Level Measurement with Changing Product Density

If the material being measured is of varying densities, then accurate level measurement is impaired. However, sensors are available that compensate for various densities. In such sensors, mounting an external limit switch at a known height above the sensor makes corrections. When the switch status changes, the sensor uses the current measured value to automatically compensate for any density change.

It is optimal to mount the external limit switch for this compensation at the point where the level increases or decreases. This correction for density changes is best when the distance between the limit switch and the sensor is made as large as possible.

Variations in the temperature also affect the density of the fluid. Wax is a big problem where the pipes are heated with even slight variations in temperature cause noticeable changes in the density.

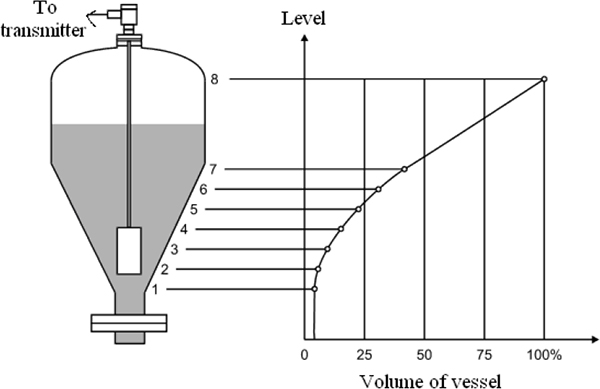

Volume Measurement for Different Vessel Shapes

Level measurement is easily obtained by hydrostatic pressure; however the volume of fluid within a vessel relies on the shape of the vessel. If the shape of the vessel does not change for increasing height then the volume is simply the level multiplied by the cross-sectional area. But, if the shape (or contour) of the vessel changes for increasing height, then the relationship between the height and the volume is not so simple.

To accurately describe the volume in a vessel, a characteristic curve is used to describe the functional relationship between the height (h) and the volume (V) of the vessel. As shown in Figure 4.3.The curve for a horizontal cylinder is of the simplest type and is often a standard characteristic offered by most suppliers. Depending on the sophistication of the manufacturer’s sensors, other curves for various vessel shapes can also be entered.

The output of the sensor can be liberalised using characteristic curves, which are described by up to 100 reference points and determined either by filling the vessel or from data supplied by the manufacturer.

Volume measurement: entering a characteristic curve

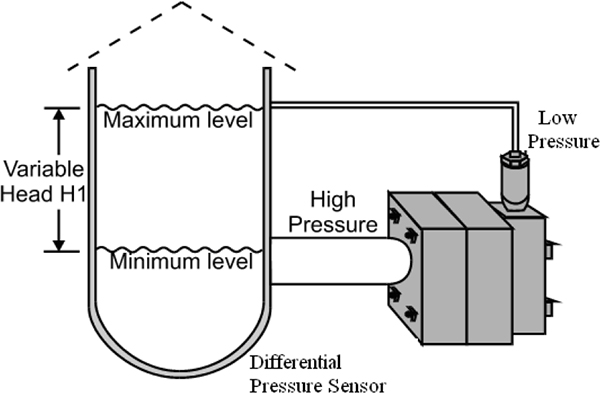

4.3.2 Differential

When the surface pressure on the liquid is greater (as may be the case of a pressurised tank) or different to the atmospheric pressure, then a differential pressure sensor is required. This is because the total pressure will be greater than the head of liquid pressure. With the differential pressure sensor, the pressure on the surface of the liquid will be subtracted from the total pressure, resulting in a measurement of the pressure due to the height of the liquid.

In applying this method of measurement, the LP (low-pressure) side of the transmitter is connected to the vessel above the maximum liquid level. This connection is called the dry leg. The pressure above the liquid is exerted on both the LP and HP (high-pressure) sides of the transmitter, and changes in this pressure do not affect the measured level. Figure 4.4 shows differential pressure method of level measurement.

For a pressurized tank, the level is measured using differential pressure methods

Using DP (Differential pressure) for filters

Differential pressure measurement for level in pressurised tanks is also used in filters to indicate the amount of contamination of a filter. If the filter remains clean, there is no significant pressure difference across the filter. As the filter becomes contaminated, the pressure on the upstream side of the filter will become greater than on the downstream side.

Advantages

- Level measurement in pressurised or evacuated tank

- Simple to adjust

- Reasonably accurate

Disadvantages

- Dependent on relative density of material

- Quite expensive for differential pressure measurement

- Inaccuracies due to build-up

- Maintenance intensive

Application Limitations

The density of the fluid affects the accuracy of the measurement. DP instruments should be used for liquids with relatively fixed specific gravity. Also the process connections are susceptible to plugging from debris, and the wet leg of the process connection may be susceptible to freezing.

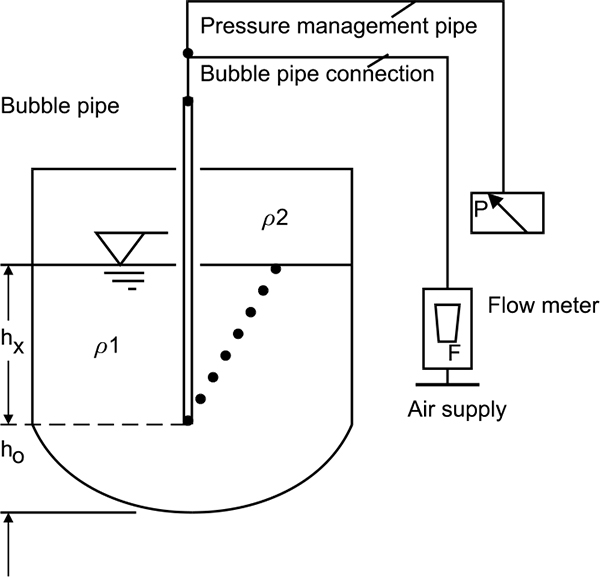

4.3.3 Bubble tube method

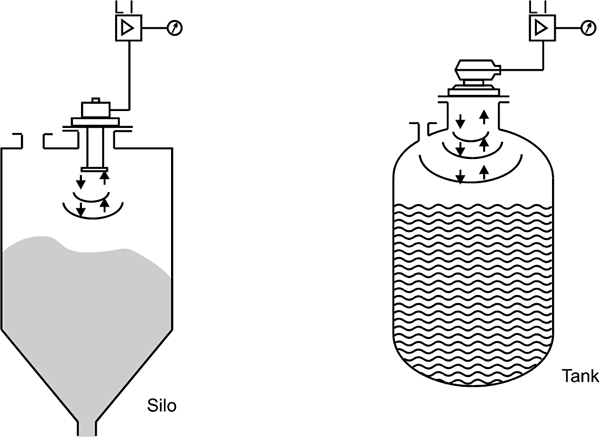

In the bubble type system, liquid level is determined by measuring the pressure required to force a gas into a liquid at a point beneath the surface.

This method uses a source of clean air or gas and is connected through a restriction to a bubble tube immersed at a fixed depth into the vessel. The restriction reduces the airflow to a very small amount. As the pressure builds, bubbles are released from the end of the bubble tube. Pressure is maintained as air bubbles escape through the liquid. Changes in the liquid level cause the air pressure in the bubble tube to vary. At the top of the bubble tube is where a pressure sensor detects differences in pressure as the level changes.

Most tubes use a small V-notch at the bottom to assist with the release of a constant stream of bubbles. This is preferable for consistent measurement rather than intermittent large bubbles. Figure 4.5 shows level measurement by using bubble tube method.

Bubble tube method

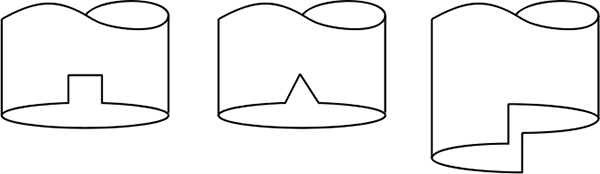

Air bubbler tube notch variations

Bubblers are simple and inexpensive, but not extremely accurate. They have a typical accuracy of about 1-2%. One definite advantage is that corrosive liquids or liquids with solids can only do damage to the inexpensive and easily replaced pipe. They do however introduce a foreign substance into the fluid.

Although the level can be obtained without the liquid entering the piping, it is still possible to have blockages. However, blockages can be minimised by keeping the pipe tip 75mm from the bottom of the tank.

Advantages

- Simple assembly

- Suitable for use with corrosive fluids.

- Intrinsically safe

- High temp applications

Disadvantages

- Requires compressed air and installation of air lines

- Build-up of material on bubble tube not permissible

- Not suited to pressurised vessels

- Mechanical wear

Application Limitations

Bubble tube devices are susceptible to density variations, freezing and plugging or coating by the process fluid or debris. The gas that is used can introduce unwanted materials into the process as it is purged. Also the device must be capable to withstand the maximum air pressure imposed if the pipe becomes blocked. Roding to clean the pipe is assisted by installing a tee section.

4.3.4 Diaphragm box

The diaphragm box is primarily used for water level measurement in open vessels. The box contains a large amount of air, which is kept within a flexible diaphragm. A tube connects the diaphragm box to a pressure gauge.

The pressure exerted by the liquid against the volume of air within the box represents the fluid pressure at that level. The pressure gauge measures the air pressure and relates the value to fluid level.

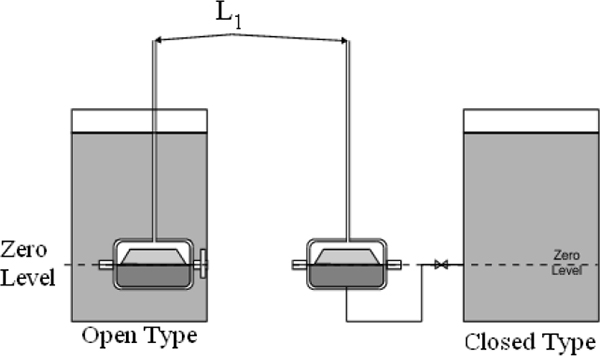

There are two common types of diaphragm boxes – open and closed. The open diaphragm box is immersed in the fluid within the vessel. The closed diaphragm box is mounted externally from the vessel and is connected by a short length of piping. The open box is suitable for applications of suspended material, and the closed type is best suited to clean liquids only. Figure 4.7 shows level measurement by using diaphragm.

Diaphragm box measurements

There are also distance limitations depending on the location of the gauge.

Advantages

- Relatively simple, suitable for various materials and very accurate

Disadvantages

- Requires more mechanical equipment, particularly with pressure vessels

4.3.5 Weighing method

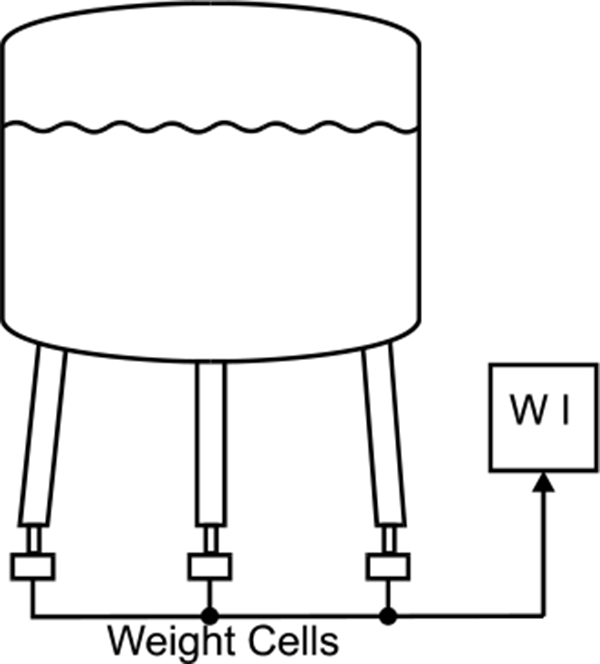

This indirect type of level measurement is suited for liquids and bulk solids. Application involves using load cells to measure the weight of the vessel. With knowledge of the relative density and shape of the storage bin, the level is easy to calculate. Figure 4.8 shows level measurement by using weight.

Level Measurement Using Weight

Advantages

- Very accurate level measurement for material of constant relative density

Disadvantages

- Requires a large amount of mechanical equipment

- Very costly

- Relies on consistent relative density of material

Application Limitations

A large amount of mechanical equipment is required for the framework, and is also needed to stabilise the bin.

Measurement resolution is reduced because priority is given to the accuracy of the overall weight. Unstable readings occur when the bin is being filled or emptied. Because the overall weight is the sum of both the product and container weights wind loading can cause significant problems. For these reasons most installations use a four-load cell configuration

4.4 Ultrasonic measurement

4.4.1 Principles of operation

Ultrasonic level sensors work by sending sound waves in the direction of the level and measuring the time taken for the sound wave to be returned. As the speed of sound is known, the transit time is measured and the distance can be calculated.

Ultrasonic measurement generally measures the distance between the contents and the top of the vessel. The height from the bottom is deduced as the difference between this reading and the total height of the vessel. Ultrasonic measurement systems are available that can measure from the bottom of the vessel when using liquid. Figure 4.9 shows ultrasonic level measurement.

Ultrasonic measurement

The original sound wave pulse has a transmission frequency between 5 and 40 kHz; this depends on the type of transducer used. The transducer and sensor consist of one or more piezoelectric crystals for the transmission and reception of the sound signal. When electrical energy is applied to the piezoelectric crystals, they move to produce a sound signal. When the sound wave is reflected back, the movement of the reflected sound wave generates an electrical signal; this is detected as the return pulse. The transit time is measured as the time between the transmitted and return signals.

4.4.2 Selection and sizing

Below are listed some typical manufacturer’s options.

Automatic Frequency Adaption

Optimum transmission relies on a certain resonance frequency, which is dependent on the transmitter and application. This resonant frequency is also dependent on the build-up of dust, condensation or even changes in temperature.

The sensor electronics can measure the free resonant frequency during the current ringing of the membrane and changes the frequency of the next transmitted pulse to achieve an optimum efficiency.

Exact design specifications depend upon the manufacturer. Some manufacturers may vary the pulse rate and/or the gain (power).

As a guide, the transducer frequency should be chosen so that the acoustic wavelength exceeds the granule size (median diameter) by at least a factor of four. Table 4.1 shows acoustic wavelength in air verses frequency in different temperatures.

| Frequency (kHz) | Length (mm) at 0 Degree Centigrade | Length (mm) at 100 degree centigrade |

|---|---|---|

| 5 | 66 | 77 |

| 10 | 33 | 39 |

| 20 | 17 | 19 |

| 30 | 11 | 13 |

| 40 | 8 | 10 |

Spurious Echo Suppression

Although ultrasonic can produce a good signal for level, they also detect other surfaces within a vessel. Other objects that can reflect a signal can be inlets, reinforcement beams or welding seams. To prevent the device reading these objects as a level, this information can be suppressed. Even though a signal may be reflected from these objects, their characteristics will be different. Suppression of these false signals is based on modifying the detection threshold.

Most suppliers have models that map the bin and the digital data is stored in memory. The reading is adjusted accordingly when a false echo is detected.

Volume Measurement

Most modern ultrasonic measurement devices also calculate volume. This is quite simple if the vessel has a constant cross sectional area. More complex, varying cross sectional area vessels require shapes of known geometry to calculate vessel volume. Conical or square shapes with tapering near the bottom are not uncommon.

Selection considerations: The selection of ultrasonic devices should be based on the following requirement:

- Distance to be measured

- Surface of material

- Environmental conditions

- Acoustic noise

- Pressure

- Temperature Gas

- Mounting

- Self-cleaning

Advantages

- Non contact with product

- Suitable for wide range of liquids and bulk products

- Reliable performance in difficult service

- No moving parts

- Measurement without physical contact

- Unaffected by density, moisture content or conductivity

- Accuracy of 0.25% with temperature compensation and self-calibration

Disadvantages

- Product must give a good reflection and not absorb sound

- Product must have a good distinct layer of measurement and not be obscured by foam or bubbling.

- Not suitable for higher pressures or in a vacuum

- Special cable is required between the transducer and electronics

- The temperature is limited to 170°C

4.5 Electrical measurement

4.5.1 Conductive level detection

Basis of Operation

This form of level measurement is primarily used for high and low level detection. The electrode or conductivity probe uses the conductivity of a fluid to detect the presence of the fluid at the sensing location. The signal provided is either on or off.

When the fluid is not in contact with the probe, the electrical resistance between the probe and the vessel will be very high or even infinite. When the level of the fluid rises to cover the probe and complete the circuit between the probe and the vessel, the resistance in the circuit will be reduced. Figure 4.10 shows conductive level detection.

Probes used on vessels constructed of a non-conductive material must have a good earth connection. The earth connection does not need to be an earthing wire it could be a feed pipe, mounting bracket or a second probe.

Corrosion of the electrode can affect the performance of the probe. Direct current can cause oxidation as a result of electrolysis, although this can be minimised by using AC power.

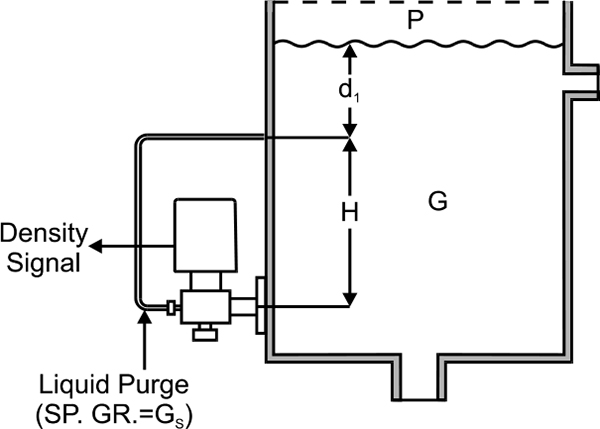

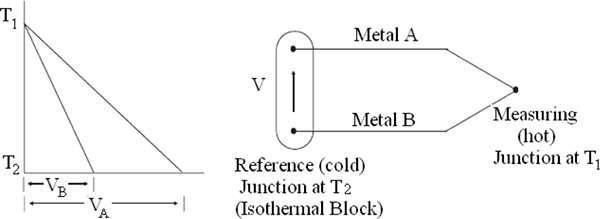

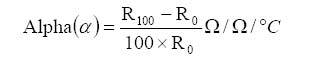

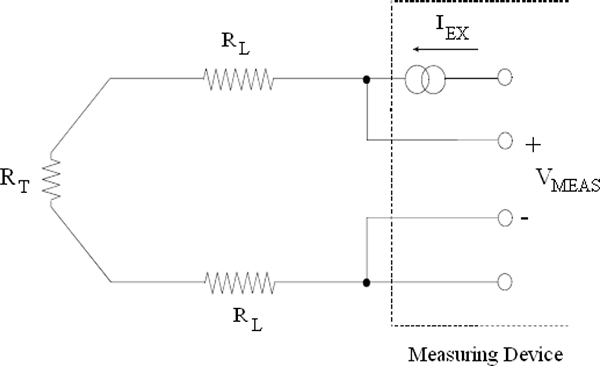

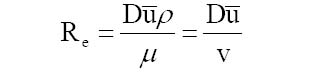

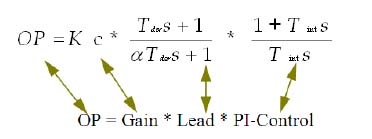

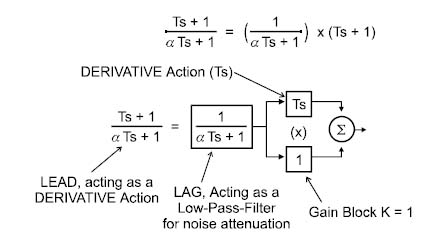

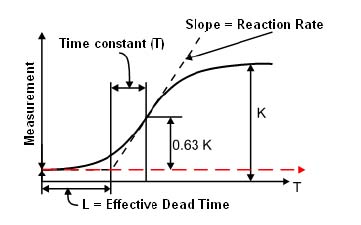

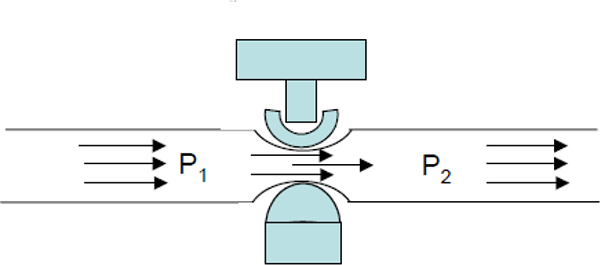

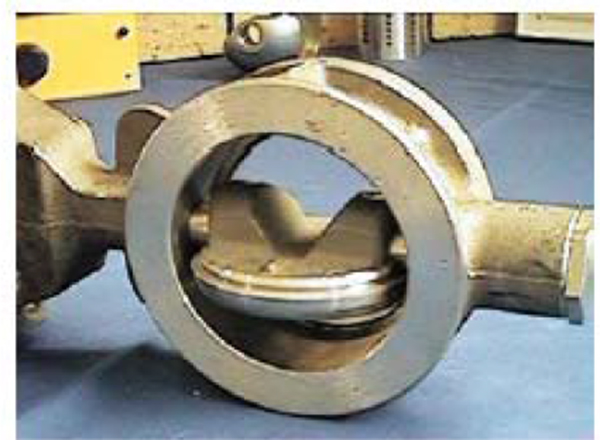

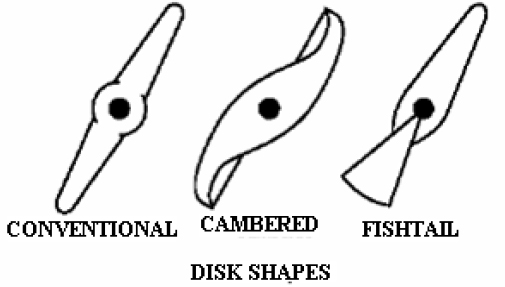

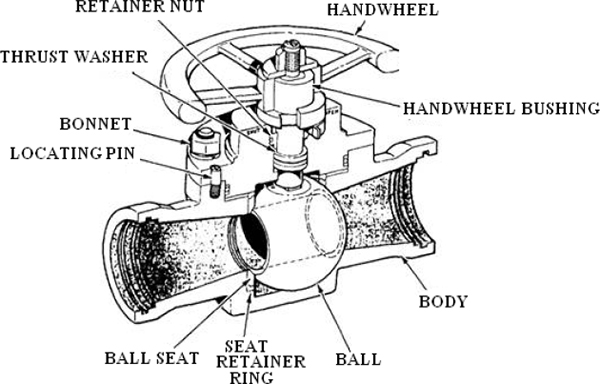

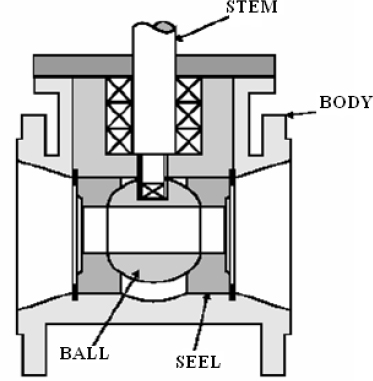

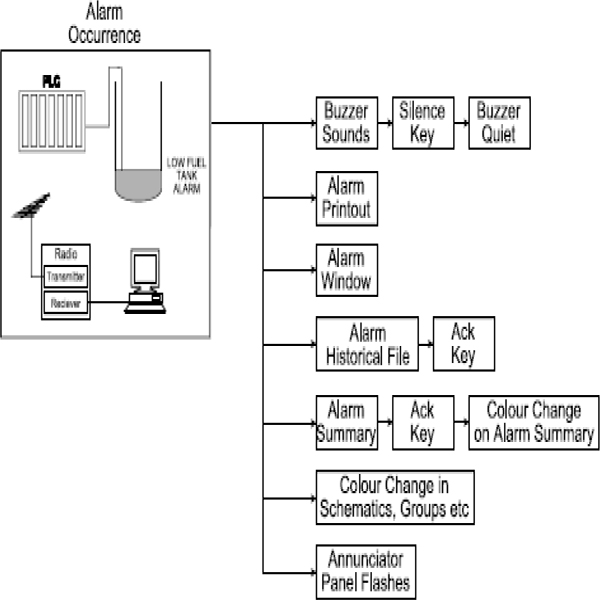

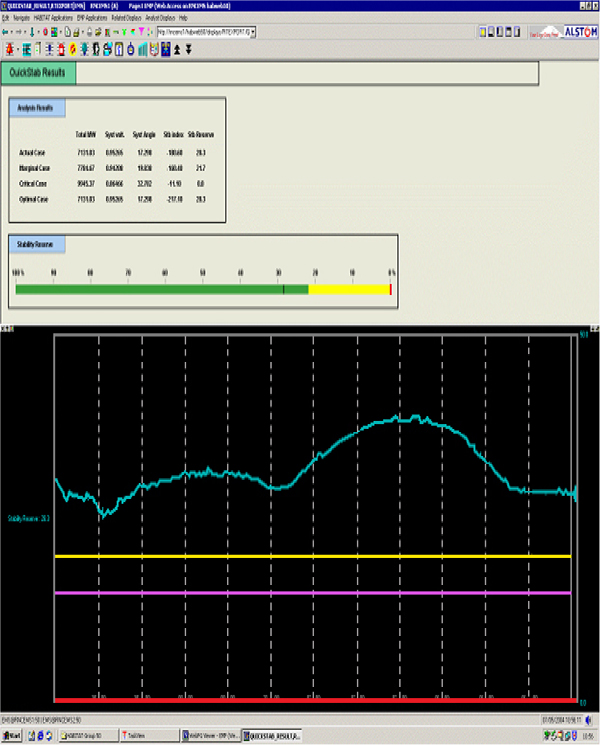

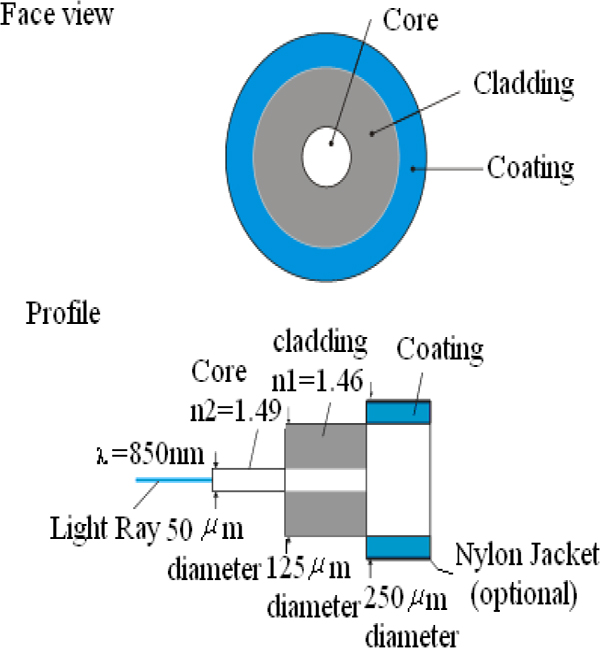

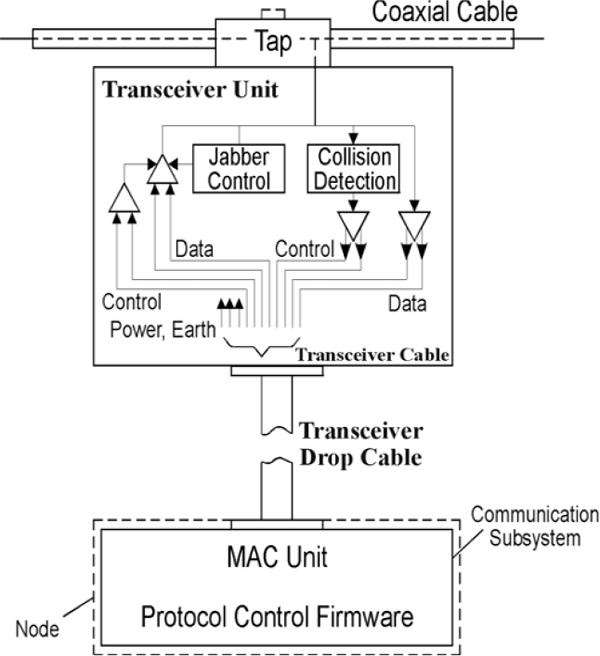

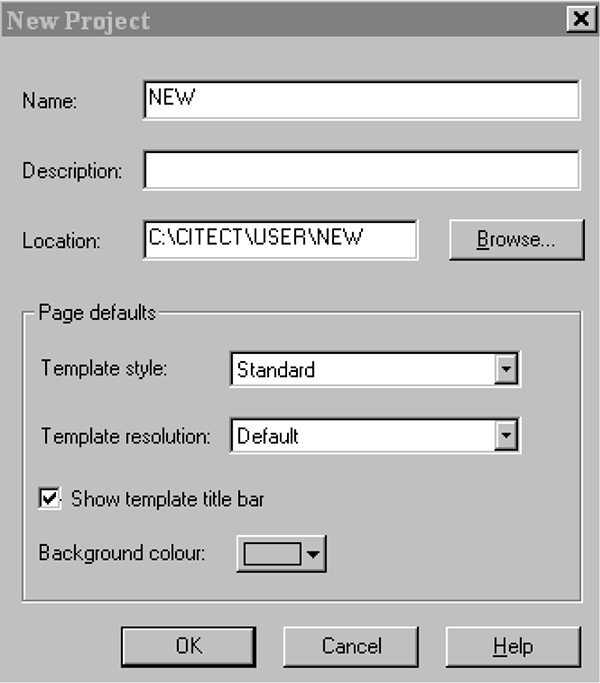

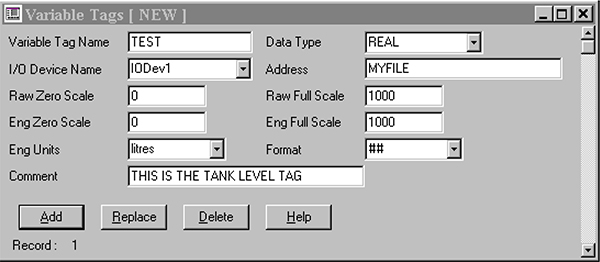

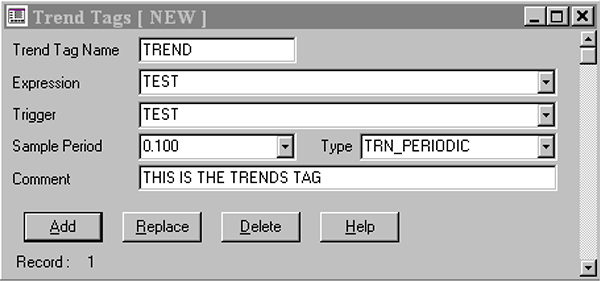

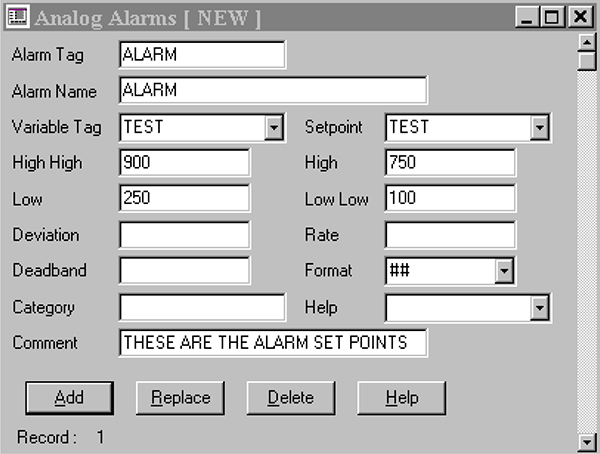

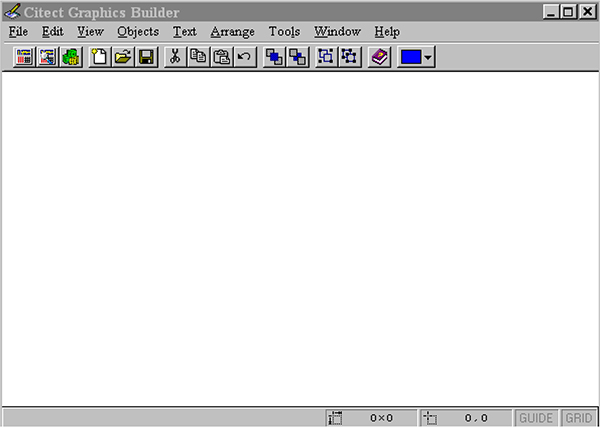

Conductive level detection